ATI's Late Response to G70 - Radeon X1800, X1600 and X1300

by Derek Wilson on October 5, 2005 11:05 AM EST

Test Setup and Power Performance

Our testing methodology was to try to cover a lot of ground with top-to-bottom hardware. Including the X1300 through the X1800 line required quite a few different cards and tests. To make the data easier to read, rather than putting everything for each game in one place as we normally do, we have broken it up into three separate groups: Budget, Midrange, and High End.

We used the latest drivers available to us, both of which are beta drivers. From NVIDIA, we tested the 81.82 drivers rather than the current release, as we expect the Release 80 drivers to be in end users' hands before the X1000 series is readily available for purchase.

All of our tests were done on this system:

- ATI Radeon Xpress 200-based system

- AMD Athlon 64 FX-55

- 1GB DDR400 2:2:2:8

- 120 GB Seagate 7200.7 HD

- 600 W OCZ PowerStream PSU

The resolutions we tested range from 800x600 on the low end to 2048x1536 on the high end. The games we tested include:

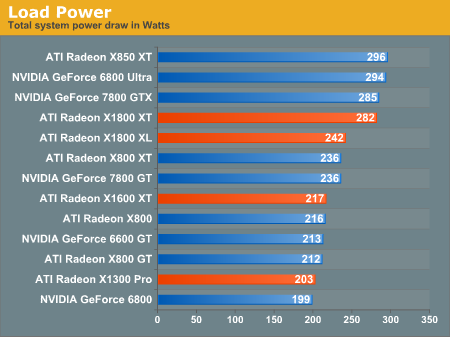

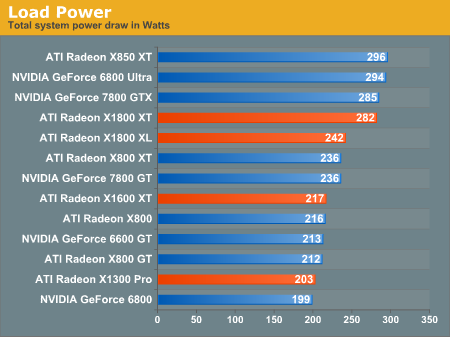

- Day of Defeat: Source

- Doom 3

- EverQuest 2

- Far Cry

- Splinter Cell: Chaos Theory

- The Chronicles of Riddick

Before we take a look at the performance numbers, here's a look at the power draw of various hardware.

As we can see, this generation draws about as much power under load as previous-generation products at the high end and midrange. The X1300 Pro seems to draw a little more power than we would like to see in a budget part, and its fan is just as loud as the X1600 XT's. Since some of the cards we tested against the X1300 Pro were passively cooled, this is worth noting.

103 Comments


Gigahertz19 - Wednesday, October 5, 2005

To quote the last page: "With its 512MB of onboard RAM, the X1800 XT scales especially well at high resolutions, but we would be very interested in seeing what a 512MB version of the 7800 GTX would be capable of doing."

Based on the benchmark results, I would say 512MB barely does anything. Look at the benchmarks on page 10: the GeForce 7800 GTX either beats the X1800 XT or loses by less than 1 FPS. SCALES WELL AT HIGH RESOLUTIONS? Not really. Has the author of this article looked at the benchmarks included? At 2048x1536, the 7800 GTX creams the competition except in Far Cry, where it loses to the X1800 XT by 0.2 FPS, and Splinter Cell, where it loses by 0.8 FPS, so it's basically a tie in those two games.

You know why Nvidia does not have a 512MB version? Look at the results... it does shit. 512MB is pointless right now, and if you argue you'll use it in the future, then wait until future games use it and buy the best GPU then, not now. These new ATIs blow wookies; so much for competition.

NeonFlak - Wednesday, October 5, 2005

"In some cases, the X1800 XL is able to compete with the 7800 GTX, but not enough to warrant pricing on the same level." From the graphs in the review with all the cards present, the X1800 XL only beat the 7800 GT once, by 4 FPS... So does beating the 7800 GT in one graph by 4 FPS even make that statement viable?

FunkmasterT - Wednesday, October 5, 2005

EXACTLY!! ATI's FPS numbers are a major disappointment!

Questar - Wednesday, October 5, 2005

Unless you want image quality.

bob661 - Wednesday, October 5, 2005

And the difference is worth an extra $100 PLUS the "lower" frame rates? Not good bang for the buck.

Powermoloch - Wednesday, October 5, 2005

Not the cards... just the review. Really sad :(

yacoub - Wednesday, October 5, 2005

So $450 for the X1800 XL versus $250 for the X800 XL, and the only differences are a new core that maybe provides a handful of additional frames per second, a new AA mode, and Shader Model 3.0? Sorry, that's not worth $200 to me. Not even close.
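For the price argument in this thread, one way to frame it: a pricier card only matches a cheaper card's frames per dollar if its performance ratio equals its price ratio. The minimal Python sketch below applies that to the $450 and $250 prices quoted in the comment above. The helper name `required_speedup_for_parity` is hypothetical, and the prices are the commenter's figures, not verified street prices.

```python
def required_speedup_for_parity(cheap_price: float, pricey_price: float) -> float:
    """Performance ratio the pricier card needs to match the cheaper card's
    frames per dollar (equal fps/price implies fps ratio == price ratio)."""
    return pricey_price / cheap_price


# Prices quoted in the comment (US dollars); assumptions, not verified prices.
X800XL_PRICE = 250.0
X1800XL_PRICE = 450.0

if __name__ == "__main__":
    ratio = required_speedup_for_parity(X800XL_PRICE, X1800XL_PRICE)
    print(f"Price premium: ${X1800XL_PRICE - X800XL_PRICE:.0f}")
    print(f"Speedup needed for equal frames per dollar: {(ratio - 1) * 100:.0f}%")
```

Under these assumptions the script prints a $200 premium and an 80% required speedup, well beyond the "up to 20%" fillrate estimate offered in the reply that follows.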

coldpower27 - Thursday, October 6, 2005

- Perhaps up to a 20% performance improvement, looking at pixel fillrate alone

- Shader Model 3.0 support

- ATI's Avivo technology

- OpenEXR HDR support

- High-quality, non-angle-dependent AF as a user option

You decide if that's worth the US$200 price difference to you. I wouldn't count Adaptive AA, since apparently all R3xx and newer hardware now has this capability through ATI's driver, not just R5xx derivatives. It's a bit like Temporal AA, the feature that launched with R4xx.

yacoub - Wednesday, October 5, 2005

So even if these cards were available in stores or online today, the best PCI-E card one can buy for ~$250 is still either an X800 XL or a 6800 GT (or an X800 GTO2 for $230, which you can flash and overclock). I find it disturbing that they even waste the time to develop, let alone release, low-end parts that can't compete on price. Why bother spending development and processing effort on a card that costs more and performs less? What a joke those two lower-end cards (X1300 and X1600) are.

coldpower27 - Thursday, October 6, 2005

The Radeon X1600 XT is intended to replace the older X700 Pro, not the stopgap 6600 GT competitors (the X800 GT and X800 GTO). Those only came into being because ATI had leftover supplies of R423/R480 cores (plus R430 cores, for the X800 GTO only), and of course because the X700 Pro wasn't really competitive in performance with the 6600 GT in the first place, due to ATI's reliance on low-k technology for its high clock frequencies. I think these are successful replacements.

Radeon X850/X800 is replaced by Radeon X1800 Technology.

Radeon X700 is replaced by Radeon X1600 Technology.

Radeon X550/X300 is replaced by Radeon X1300 Technology.

X700 is 156 mm² on 110 nm; X1600 is 132 mm² on 90 nm.

X550 and X1300 are roughly the same die size, under 100 mm².

Though the newer cards use more expensive memory types on their high end versions.

They also finally give ATI's entire family the same feature set, which I believe ATI has never done before: high-end, mainstream, and budget cores all based on the same technology.

Nvidia achieved this item first with the Geforce FX line.
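The die sizes quoted in coldpower27's comment can be compared directly. This short Python sketch takes the 156 mm² (X700, 110 nm) and 132 mm² (X1600, 90 nm) figures at face value; the helper `percent_change` is a hypothetical name used only for illustration.

```python
def percent_change(old: float, new: float) -> float:
    """Signed percentage change from `old` to `new` (negative means smaller)."""
    return (new - old) / old * 100.0


# Die areas in mm^2 as quoted in the comment; assumed, not independently verified.
X700_DIE_MM2 = 156.0   # 110 nm process
X1600_DIE_MM2 = 132.0  # 90 nm process

if __name__ == "__main__":
    change = percent_change(X700_DIE_MM2, X1600_DIE_MM2)
    print(f"X1600 die area vs. X700: {change:+.1f}%")
```

With those inputs the script reports about -15.4%, i.e. the X1600 die is roughly 15% smaller than the X700 die.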