Itanium - is there light at the end of the tunnel?

by Johan De Gelas on November 9, 2005 12:05 AM EST - Posted in CPUs

EPIC 101

The basics of EPIC (Explicitly Parallel Instruction-set Computing) is a mix of typical RISC and VLIW (very long instruction word) features. From RISC, it copies a relatively straightforward instruction set, a very large register file (128 registers for integer and floating point) and three operand instructions that use registers. Using three operands, two source registers and a destination register (R1 = R2 +R3), instead of two (R2 = R1 + R2), does the calculation job in less instructions and avoids - given enough registers - unnecessary trips to hidden registers or the L1- cache.

Load and Store instruction are used to getting data and instructions from the memory; instructions that actually calculate do not reference memory locations as in x86.

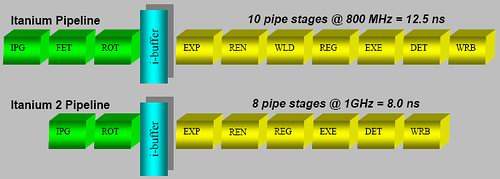

A fixed instruction length makes it much easier to decode, like RISC ISA's, and completely contrary to the x86 instruction set where decoding is a very painful job that requires many pipeline stages. These additional stages are necessary to obtain high clockspeeds, but they make the pipeline unnecessarily long and the branch prediction penalty worse. The Itanium 2 has only an 8-stage pipeline, but is still able to clock up to 1.7 GHz (conservative) using a 130 nm process. Compared to the Xeon MP (130 nm), which clocked up to 3 GHz, it needed a 28-stage pipeline (20 after Trace cache + 8 before) to achieve less than a twice as high a clock speed.

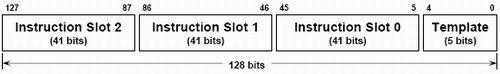

Inside the hardware, the Itanium uses instruction bundles that are 128 bits large. Such a bundle consists of three 41 bit instructions and one 5 bit template. It is this 5 bit template that contains the "compiler grouping" information about the parallelism between the different instructions. Thus, compilers will use this template to tell the CPU what instructions should be issued together. It gets even better; this template also contains an end-of-bundle bit. With this bit, the compiler can indicate whether or not the bundle is finished after the first three instructions or if the CPU should chain two (or even more) bundles together.

Another 6 bits specify the 64 combinations of predication that allow the compiler to eliminate branches, as each instruction can be conditional. So, instead of:

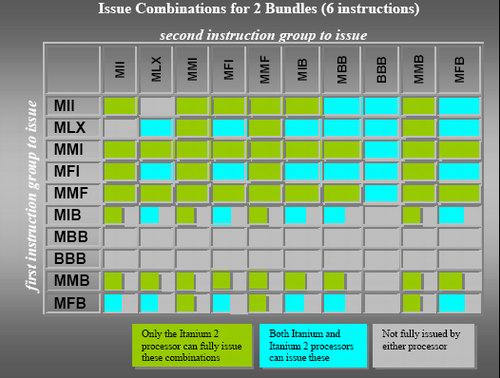

The instruction grouping and elimination of most of the branches opens the way to higher ILP. So, while the Athlon 64 can sustain at most 3 instructions per clock cycle, the Itanium can fetch, decode, issue, execute and retire 2 bundles or 6 instructions per clock cycle.

Contrary to old VLIW designs, the compiler is not obliged to put the instruction in a strict order in a bundle. But there are certain limitations to what kind of instruction mix you can find inside a bundle, as you can see in the table below.

Cache hints, data and instruction pre-fetching and data speculation are a few of the tricks that the Itanium and its compiler can use to keep the caches full with the right instructions and data. Those tricks and the large caches are essential to the Itanium: a L2 cache miss can result in a real stall, as the CPU cannot check dynamically for independent instruction to issue.

In a nutshell, the Itanium has the following advantages:

EPIC (Explicitly Parallel Instruction-set Computing) is, at its core, a mix of typical RISC and VLIW (very long instruction word) features. From RISC, it copies a relatively straightforward instruction set, a very large register file (128 registers each for integer and floating point) and three-operand instructions that work on registers. Using three operands, two source registers and a destination register (R1 = R2 + R3), instead of two (R2 = R1 + R2), gets the calculation done in fewer instructions and avoids, given enough registers, unnecessary trips to hidden registers or the L1 cache.
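To make the operand-count argument concrete, here is a minimal sketch of a toy register machine in Python. The opcode names (`mov`, `add2`, `add3`) and the `run` helper are made up for this illustration; they are not IA-64 or x86 mnemonics. The sketch assumes that the program still needs both source values after the addition, which is the case where a two-operand ISA has to spend an extra copy.

```python
def run(program, regs):
    """Execute a toy program and return the resulting register values."""
    regs = dict(regs)  # work on a copy so the caller's registers are untouched
    for op, *args in program:
        if op == "mov":      # mov dst, src    : dst = src
            dst, src = args
            regs[dst] = regs[src]
        elif op == "add2":   # add2 dst, src   : dst = dst + src (old dst is lost)
            dst, src = args
            regs[dst] += regs[src]
        elif op == "add3":   # add3 dst, a, b  : dst = a + b (sources preserved)
            dst, a, b = args
            regs[dst] = regs[a] + regs[b]
        else:
            raise ValueError(f"unknown opcode: {op}")
    return regs


start = {"r1": 0, "r2": 5, "r3": 7}

# Two-operand style: copy first, because the add overwrites its destination.
two_operand = [("mov", "r1", "r2"), ("add2", "r1", "r3")]

# Three-operand style: one instruction, both sources stay intact.
three_operand = [("add3", "r1", "r2", "r3")]

result_two = run(two_operand, start)
result_three = run(three_operand, start)
print(len(two_operand), len(three_operand), result_two == result_three)
```

Both programs leave the same values in the registers; the three-operand version simply needs one instruction less. When the old destination value is not needed afterwards, the two forms cost the same.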

Load and store instructions are used to move data between memory and the registers; instructions that actually calculate do not reference memory locations, as they can in x86.

A fixed instruction length makes instructions much easier to decode, as in RISC ISAs, and is completely contrary to the x86 instruction set, where decoding is a very painful job that requires many pipeline stages. These additional stages are necessary to obtain high clock speeds, but they make the pipeline unnecessarily long and the branch misprediction penalty worse. The Itanium 2 has only an 8-stage pipeline, but is still able to clock up to 1.7 GHz (conservative) on a 130 nm process. By comparison, the Xeon MP (130 nm), which clocked up to 3 GHz, needed a 28-stage pipeline (20 stages after the trace cache plus 8 before it) to reach a clock speed that is less than twice as high.

The short Itanium and Itanium 2 pipeline
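The trade-off between pipeline depth and clock speed can be put into numbers with a few lines of Python. The clock speeds and stage counts are the ones quoted above. The "refill time" is only a crude assumption made for this sketch: it treats a branch misprediction as costing one full pipeline refill, which real CPUs only approximate.

```python
# Figures quoted in the text (both CPUs on a 130 nm process).
itanium2 = {"clock_ghz": 1.7, "stages": 8}
xeon_mp = {"clock_ghz": 3.0, "stages": 28}

# How much faster does the deeper pipeline clock, and how much deeper is it?
clock_ratio = xeon_mp["clock_ghz"] / itanium2["clock_ghz"]
stage_ratio = xeon_mp["stages"] / itanium2["stages"]


def refill_time_ns(cpu):
    """Assumed cost of refilling the whole pipeline once, in nanoseconds."""
    return cpu["stages"] / cpu["clock_ghz"]


print(f"clock ratio: {clock_ratio:.2f}x")
print(f"stage ratio: {stage_ratio:.2f}x")
print(f"Itanium 2 refill: {refill_time_ns(itanium2):.2f} ns")
print(f"Xeon MP refill:   {refill_time_ns(xeon_mp):.2f} ns")
```

Under that assumption, 3.5 times as many stages buy roughly 1.76 times the clock speed, and a full refill takes about twice as long in absolute time on the deeper pipeline.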

Inside the hardware, the Itanium uses instruction bundles that are 128 bits wide. Such a bundle consists of three 41-bit instructions and one 5-bit template. It is this 5-bit template that contains the "compiler grouping" information about the parallelism between the different instructions. Thus, compilers use this template to tell the CPU which instructions should be issued together. It gets even better: the template also encodes where a group of instructions ends. With this information, the compiler can indicate whether the group is finished after the three instructions of the bundle or whether the CPU should chain two (or even more) bundles together.

IA-64 instruction bundle
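The bit budget of a bundle (3 x 41 + 5 = 128) is easy to check with a small packing sketch in Python. The helper names `pack_bundle` and `unpack_bundle` are hypothetical, and the slot and template values in the example are arbitrary numbers, not real instruction encodings. Placing the template in the lowest five bits with the three slots above it matches my understanding of the IA-64 format, but treat the exact layout here as an assumption of the sketch.

```python
SLOT_BITS = 41       # width of one instruction slot
TEMPLATE_BITS = 5    # width of the template field
SLOTS_PER_BUNDLE = 3
BUNDLE_BITS = SLOTS_PER_BUNDLE * SLOT_BITS + TEMPLATE_BITS  # 3 * 41 + 5


def pack_bundle(template, slots):
    """Pack a template and three slot values into one integer bundle."""
    if not 0 <= template < (1 << TEMPLATE_BITS):
        raise ValueError("template does not fit in 5 bits")
    if len(slots) != SLOTS_PER_BUNDLE:
        raise ValueError("a bundle holds exactly three slots")
    word = template  # assumed layout: template occupies the lowest bits
    for i, slot in enumerate(slots):
        if not 0 <= slot < (1 << SLOT_BITS):
            raise ValueError(f"slot {i} does not fit in 41 bits")
        word |= slot << (TEMPLATE_BITS + i * SLOT_BITS)
    return word


def unpack_bundle(word):
    """Split an integer bundle back into (template, [slot0, slot1, slot2])."""
    template = word & ((1 << TEMPLATE_BITS) - 1)
    slot_mask = (1 << SLOT_BITS) - 1
    slots = [
        (word >> (TEMPLATE_BITS + i * SLOT_BITS)) & slot_mask
        for i in range(SLOTS_PER_BUNDLE)
    ]
    return template, slots


bundle = pack_bundle(0b10000, [0x123, 0x456, 0x789])
print(BUNDLE_BITS, unpack_bundle(bundle))
```

The sketch also shows why the instructions are larger than in a 32-bit RISC: each slot spends 41 bits, and every three slots carry another 5 bits of grouping information.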

A 6-bit field in each instruction selects one of 64 predicates, which allows the compiler to eliminate branches, as each instruction can be made conditional. So, instead of:

Compare R1 to 0 (IF...)

If false, jump to Label

R2 = R3 ("Then" instructions)

Jump to End (skip the "Else" instructions)

Label: (Else instructions)

R2 = R1

End:

You get:

On the condition that R1 = 0, R2 = R3

So you eliminate the conditional jump ("If false, jump to") and replace the whole "IF THEN ELSE" clause with an instruction that checks the register and then moves the contents from R3 to R2 in one sweep. Conditional jumps are dependent on the instruction before them and have to wait until the "Compare R1 to 0" instruction is done. Conditional instructions, however, travel through the pipeline for execution and don't have to wait for anything. You could say that the "IF" part and the "Then" part are fused together. For the "else" part, you get:

On the condition that R1 <> 0, R2 = R1

Predication makes the code more compact, and eliminates branches and dependencies. Branches can easily make up 20% of your code. So, with one branch every 5 instructions, it is very hard to issue many instructions in parallel. By converting them into conditional instructions, you eliminate the dependencies and the ILP can get much higher.
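The conversion from a branch to predicated instructions can be sketched in Python. This is only a model of the idea, not IA-64 code: the function names and the tuple format `(predicate, destination, source)` are invented for the illustration. Note that Python's own `if pred:` is of course still a branch; it stands in for hardware that lets every instruction flow through the pipeline and simply discards the result when its predicate is false.

```python
def with_branch(r1, r3):
    """The classic IF/THEN/ELSE: control flow decides which move runs."""
    if r1 == 0:
        r2 = r3
    else:
        r2 = r1
    return r2


def with_predication(r1, r3):
    """Straight-line version: both moves are always in the instruction stream."""
    regs = {"r1": r1, "r2": None, "r3": r3}

    # One compare sets two complementary predicates.
    p_then = regs["r1"] == 0
    p_else = not p_then

    # (predicate, destination, source): no jumps, no labels.
    program = [
        (p_then, "r2", "r3"),   # on the condition that R1 = 0,  R2 = R3
        (p_else, "r2", "r1"),   # on the condition that R1 <> 0, R2 = R1
    ]
    for pred, dst, src in program:
        if pred:  # models the hardware committing or discarding the result
            regs[dst] = regs[src]
    return regs["r2"]


for r1 in (0, 4):
    print(r1, with_branch(r1, 9), with_predication(r1, 9))
```

Both versions compute the same value of R2; the predicated one trades a possible misprediction for always occupying two instruction slots.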

The instruction grouping and the elimination of most branches open the way to higher ILP. So, while the Athlon 64 can sustain at most 3 instructions per clock cycle, the Itanium can fetch, decode, issue, execute and retire 2 bundles, or 6 instructions, per clock cycle.

Contrary to old VLIW designs, the compiler is not obliged to put the instructions in a strict order in a bundle. But there are certain limitations on the instruction mix that you can find inside a bundle, as you can see in the table below.

Possible bundles

Cache hints, data and instruction pre-fetching and data speculation are a few of the tricks that the Itanium and its compiler can use to keep the caches filled with the right instructions and data. Those tricks and the large caches are essential to the Itanium: an L2 cache miss can result in a real stall, as the CPU cannot check dynamically for independent instructions to issue.
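Why a cache miss hurts an in-order design more can be shown with a toy single-issue scheduler in Python. Everything in it is an assumption made for the sketch: the `simulate` function, the five-instruction program and the 200-cycle miss latency are invented and do not reflect real Itanium 2 or x86 timings. The only difference between the two modes is whether the CPU may look past a stalled instruction for a younger one that is ready.

```python
def simulate(program, out_of_order):
    """Return the cycle at which the last result is available.

    program: list of (latency, [indices of instructions it depends on]).
    At most one instruction is issued per cycle.
    """
    done_at = {}                       # instruction index -> cycle result is ready
    pending = list(range(len(program)))
    cycle = 0
    while pending:
        # In-order: only the oldest pending instruction may issue.
        # Out-of-order: any pending instruction may issue, oldest first.
        candidates = list(pending) if out_of_order else pending[:1]
        for i in candidates:
            latency, deps = program[i]
            if all(d in done_at and done_at[d] <= cycle for d in deps):
                done_at[i] = cycle + latency
                pending.remove(i)
                break                  # single issue: one instruction per cycle
        cycle += 1
    return max(done_at.values())


MISS_LATENCY = 200  # assumed cost of a load that misses the caches

program = [
    (MISS_LATENCY, []),  # 0: load that misses
    (1, [0]),            # 1: uses the loaded value
    (1, []),             # 2: independent work
    (1, [2]),            # 3: depends on 2
    (1, [3]),            # 4: depends on 3
]

in_order_cycles = simulate(program, out_of_order=False)
out_of_order_cycles = simulate(program, out_of_order=True)
print(in_order_cycles, out_of_order_cycles)
```

In this model the out-of-order machine finishes the independent instructions while the load is outstanding, whereas the in-order machine starts them only after the stalled instruction has issued. An EPIC compiler can close the gap by placing the independent instructions ahead of the use of the loaded value, which is exactly the scheduling work that the architecture moves from hardware to software.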

In a nutshell, the Itanium has the following advantages:

- Easy decoding leads to a shorter pipeline: less decoding work has to be done, so fewer stages are necessary;

- In-order issue and execution means that the dispatch hardware is much simpler, which leads to a shorter pipeline and fewer transistors;

- Removing conditional jumps and letting the compiler do the scheduling extracts more ILP; and

- 128 registers and the load/store model reduce the number of memory/cache accesses significantly.

It also has the following disadvantages:

- No out-of-order execution makes cache misses and pipeline stalls much more costly; and

- 128 registers and the whole bundle and group system make the instructions on average much longer than x86 instructions.

43 Comments

fitten - Thursday, November 10, 2005 - link

I'm guessing they'll write an article on it when it actually exists... it's at least two years out still before they expect to have *real* silicon for it and a lot can change between now and then.

fic - Thursday, November 10, 2005 - link

Hmmm, their press release says Q3 '06. I know that dates can and do slip, but I doubt they will slip a year.

Besides, most of the Itanic stuff that was talked about in the article isn't shipping and probably never will. How late is the "next" version - 2+ years? - with no real expected ship date in the foreseeable future. It would be nice to see an article about the architecture of the chips, decisions made and trade-offs for the power efficiency that they are driving toward. Also, this was started a few years ago; what led them down the power efficiency path before some of the major companies (notably Intel) even realized it was an issue?

fitten - Friday, November 11, 2005 - link

From the press release:

"It will sample in the third calendar quarter of 2006, with single-core and quad-core versions due in early and late 2007, respectively, and an eight-core version planned for 2008."

Sampling doesn't mean general availability... not even close. The closest thing they have to availability is "early and late 2007" for availability of single- and quad-core versions.

xelpmoc - Wednesday, November 9, 2005 - link

"TLP, caches and power consumption" is more than three words!

Interesting article, though.

Questar - Wednesday, November 9, 2005 - link

Excellent article.

I've been telling people for years the Itanium architecture is the future (not the chip). In 20 years there will be no OOE chips on the market, everything will be similar to EPIC. AMD will be there too.

highlandsun - Wednesday, November 9, 2005 - link

I don't see any need for EPIC or VLIW. The Itanium is basically using a 41 bit instruction word. The allocation of bits is only slightly different from the allocation used in a 32 bit RISC instruction. Indeed, point a 128-bit memory channel at a stream of 32 bit instructions and you'll get higher instruction dispatch rates and greater code density. EPIC is philosophically the same as hyperthreading - running multiple instruction streams in parallel in a single CPU core. But that just makes CPU designs unnecessarily complex. With the trend to multi-core CPUs, you get parallelism by using separate cores. Let each core crunch on a single instruction stream at a time, and all of that extra baggage is unnecessary. What is the point of having 11 execution units in a single core if you can only feed it 3 instructions per cycle? An efficient design would keep the number of execution units matched to the number of instructions available, any more is just wasted.

Personally I would have invested more effort into scaling speeds on the MIPS design. The Itanium's predicated instructions are cool, but the MIPS architecture has those too. Anything you can do to avoid branching is definitely a win. But if you can pre-fetch 4 32-bit instructions in one cycle and decode and detect branches in advance, that's going to give higher IPC than this VLIW implementation.

Questar - Wednesday, November 9, 2005 - link

You don't know what EPIC is. It's not hyperthreading, and it makes CPUs LESS complex as there is no need for all the hardware needed to support OOE. Cell and Xenos are examples.

Think what you want, but the brightest minds in the CPU world are all looking this way.

highlandsun - Wednesday, November 9, 2005 - link

Actually, having handwritten IA64 assembly code I'm acutely aware of what EPIC is and isn't. The point is that it's another lame attempt at increasing parallelism in one core. The problem is that it tries to give the illusion of independent execution units, just as hyperthreading tries to give the illusion of multiple execution units, and neither implementation is sufficiently flexible. You would get more throughput from truly independent cores, letting the programmer (or some layer above the processor) explicitly allocate instructions to execution units.

roymbrown - Thursday, November 10, 2005 - link

"it's another lame attempt at increasing parallelism in one core"

It sounds like you are confusing different types of parallelism here. You are referring to TLP (thread level), but EPIC attempts to address ILP (instruction level). Hyperthreading is focused on running multiple independent threads on a single core. Hyperthreading improves TLP, often at the expense of ILP. EPIC is focused on executing non-dependent instructions within a single thread in parallel. This is more analogous to the work done by a complex out-of-order scheduler. EPIC attempts to push this scheduling work onto the compiler.

"You would get more throughput from truly independent cores"

Yes, you would, if you have lots of independent threads. Adding more independent cores improves TLP, but does nothing about ILP.

Thunder 57 - Monday, May 6, 2019 - link

It may not be 20 years later, but OoOE is very much alive and Itanium is dead. We've been hearing for years now that ARM will kill off x86-64. I wonder where we will be in another 20 years.