Ghost Recon Advanced Warfighter Tests

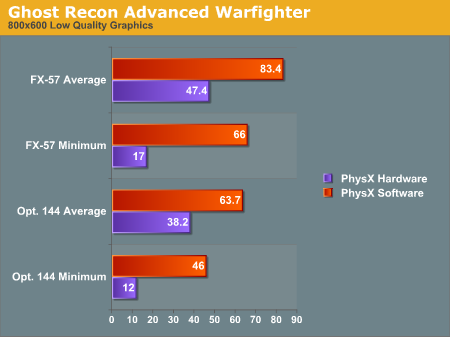

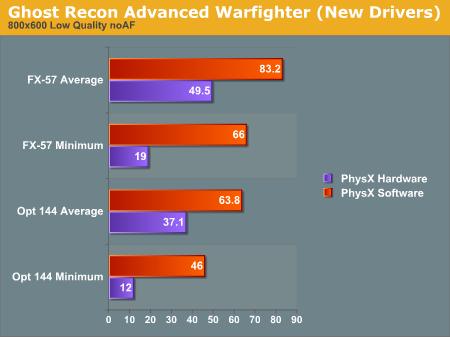

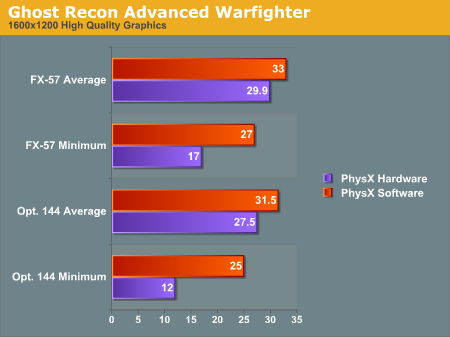

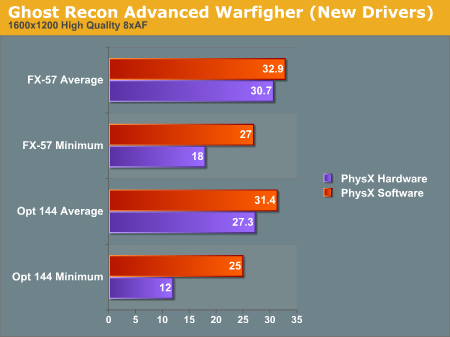

And the short story is that the patch released by AGEIA when we published our previous story didn't really do much to fix the performance issues. We did see an increase in framerate from our previous tests, but the results are less impressive than we were hoping to see (especially with regard to the extremely low minimum framerate).

Here are the results from our initial test, as well as the updated results we collected:

There is a difference, but it isn't huge. We are quite impressed with the fact that AGEIA was able to release a driver so quickly after performance issues were made known, but we would like to see better results than this. Perhaps AGEIA will have another trick up their sleeves in the future as well.

Whatever the case, after further testing, it appears our initial assumptions are proving more and more correct, at least with the current generation of PhysX games. There is a bottleneck in the system somewhere near and dear to the PPU. Whether this bottleneck is in the game code, the AGEIA driver, the PCI bus, or on the PhysX card itself, we just can't say at this point. The fact that a driver release did improve the framerates a little implies that at least some of the bottleneck is in the driver. The implementation in GRAW is quite questionable, and a game update could help to improve performance if this is the case.

Our working theory is that there is a good amount of overhead associated with initiating activity on the PhysX hardware. This idea is backed up by a few observations we have made. Firstly, the slowdown occurs right as particle systems or objects are created in the game. After the creation of the PhysX-accelerated objects, framerates seem to smooth out. The demos we have which use the PhysX hardware for everything physics related don't seem to suffer the same problem when blowing things up (as we will demonstrate shortly).
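To illustrate why a one-time creation cost would hurt the minimum framerate far more than the average, here is a minimal sketch of that working theory. Every number in it (the 20 ms base frame time, the 80 ms creation overhead, the frames on which objects spawn) is a made-up assumption for illustration, not a measurement from our tests, and the function names are hypothetical.

```python
def simulate_frame_times(n_frames, base_ms, spawn_frames, spawn_overhead_ms):
    """Return per-frame render times in milliseconds.

    Frames listed in spawn_frames pay an extra one-time overhead,
    standing in for the cost of creating physics objects on the card.
    All values are illustrative assumptions, not measured data.
    """
    return [
        base_ms + (spawn_overhead_ms if i in spawn_frames else 0.0)
        for i in range(n_frames)
    ]


def fps_stats(frame_times_ms):
    """Return (average fps, minimum fps) for a list of frame times in ms."""
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds
    # The minimum framerate is set by the single slowest frame.
    min_fps = 1000.0 / max(frame_times_ms)
    return avg_fps, min_fps


# Hypothetical run: 100 frames at 20 ms each, with two "explosion" frames
# that each pay an assumed 80 ms object-creation overhead.
times = simulate_frame_times(
    n_frames=100, base_ms=20.0, spawn_frames={10, 50}, spawn_overhead_ms=80.0
)
avg_fps, min_fps = fps_stats(times)
print(f"average: {avg_fps:.1f} fps, minimum: {min_fps:.1f} fps")
```

Under these assumed numbers the average stays in the mid 40s while the minimum collapses to 10 fps, which is the same shape as the behavior described above: a brief stall at creation time, then smooth framerates afterward.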

We don't know enough at this point about either the implementation of the PhysX hardware or the games that use it to be able to say what would help speed things up. It is quite clear that there is a whole lot of breathing room for developers to use. Both the CellFactor demo (now downloadable) and the UnrealEngine 3 demo Hangar of Doom show this fact quite clearly.

67 Comments


phusg - Wednesday, May 17, 2006 - link

> Performance issues must not exist, as stuttering framerates have nothing to do with why people spend thousands of dollars on a gaming rig.

What does this sentence mean? No, really. It seems to try to say more than just, "stuttering framerates on a multi-thousand dollar rig is ridiculous", or is that it?

nullpointerus - Wednesday, May 17, 2006 - link

I believe he means that the card can't survive in the market if it dramatically lowers framerates on even high end rigs.

DerekWilson - Wednesday, May 17, 2006 - link

check plus ... sorry if my wording was a little cumbersome.

QChronoD - Wednesday, May 17, 2006 - link

It seems to me like you guys forgot to set a baseline for the system with the PPU card installed. From the picture that you posted in the CoV test, the number of physics objects looks like it can be adjusted when the AGEIA support is enabled. You should have run a benchmark with the card installed but keeping the level of physics the same. That would eliminate the loading on the GPU as a variable. Doing so would cause the GPU load to remain nearly the same, with the only difference being due to the CPU and PPU taking time sending info back and forth.

Brunnis - Wednesday, May 17, 2006 - link

I bet a game like GRAW actually would run faster if the same physics effects were run directly on the CPU instead of this "decelerator". You could add a lot of physics before the game would start running nearly as bad as with the PhysX card. What a great product...

DigitalFreak - Wednesday, May 17, 2006 - link

I'm wondering the same thing.

"We still need hard and fast ways to properly compare the same physics algorithm running on a CPU, a GPU, and a PPU -- or at the very least, on a (dual/multi-core) CPU and PPU."

Maybe it's a requirement that the developers have to intentionally limit (via the sliders, etc.) how many "objects" can be generated without the PPU in order to keep people from finding out that a dual core CPU could provide the same effects more efficiently than their PPU.

nullpointerus - Wednesday, May 17, 2006 - link

Why would ASUS or BFG want to get mixed up in a performance scam?

DerekWilson - Wednesday, May 17, 2006 - link

Or EPIC with UnrealEngine 3?

Makes you wonder what we aren't seeing here doesn't it?

Visual - Wednesday, May 17, 2006 - link

so what you're showing in all the graphs is lower performance with the hardware than without it. WTF?

yes i understand that testing without the hardware is only faster because it's running lower detail, but that's not clearly visible from a few glances over the article... and you do know how important the first impression really is.

now i just gotta ask, why can't you test both software and hardware with the same level of detail? that's what a real benchmark should show at least. Can't you request some complete software emulation from AGEIA that can fool the game that the card is present, and turn on all the extra effects? If not from AGEIA, maybe from ATI or nVidia, who seem to have worked on such emulations that even use their GFX cards. In the worst case, if you can't get the software mode to have all the same effects, why not then at least turn off those effects when testing the hardware implementation? In City of Villains for example, why is the software test run with lower "Max Physics Debris Count"? (though I assume there are other effects that get automatically enabled with the hardware present and aren't configurable)

I just don't get the point of this article... if you're not able to compare apples to apples yet, then don't even bother with an article.

Griswold - Wednesday, May 17, 2006 - link

I think they clearly stated in the first article, that GRAW for example, doesnt allow higher debris settings in software mode.

But even if it did, a $300 part that is supposed to be lightning fast and what not, should be at least as fast as ordinary software calculations - at higher debris count.

I really dont care much about apples and oranges here. The message seems to be clear, right now it isnt performing up to snuff for whatever reason.