NVIDIA GeForce 8800 GT: The Only Card That Matters

by Derek Wilson on October 29, 2007 9:00 AM EST - Posted in GPUs

The Card

The GeForce 8800 GT, whose heart is a G92 GPU, is quite a sleek card. The heatsink shroud covers the entire length of the card so that no capacitors are exposed. The card's thermal envelope is low enough, thanks to the 65nm G92, to require only a single-slot cooling solution. Here's a look at the card itself:

The card makes use of two dual-link DVI outputs and a third output for analog HD and other applications. We see a single SLI connector on top of the card, and a single 6-pin PCIe power connector on the back of the card. NVIDIA reports the maximum dissipated power as 105W, which falls within the 150W power envelope provided by the combination of one PCIe power connector and the PCIe x16 slot itself.
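As a quick sanity check on that power budget, here is a minimal sketch. The 75W limits for the x16 slot and for a 6-pin connector are the commonly cited PCIe figures rather than numbers from NVIDIA, and the function and constant names are ours, purely for illustration.

```python
# Power budget check for a card with one 6-pin connector (illustrative names).
PCIE_X16_SLOT_W = 75   # assumed slot limit per the PCIe spec, not stated in the article
PCIE_6PIN_W = 75       # assumed limit for one 6-pin PCIe power connector
BOARD_POWER_W = 105    # NVIDIA's reported maximum dissipated power


def power_headroom(board_w, slot_w=PCIE_X16_SLOT_W, connectors_w=(PCIE_6PIN_W,)):
    """Return (available budget, remaining headroom) in watts."""
    budget = slot_w + sum(connectors_w)
    return budget, budget - board_w


budget, headroom = power_headroom(BOARD_POWER_W)
print(f"budget {budget}W, headroom {headroom}W")
```

With these assumed limits the budget works out to the 150W envelope mentioned above, leaving 45W of headroom over NVIDIA's 105W figure.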

The move to 65nm has prompted at least one vendor to attempt an 8800 GT with a passive cooler. While the 8800 GT does use less power than other cards in its class, we will have to wait and see whether a passively cooled card remains stable through the most rigorous tests we can put it through.

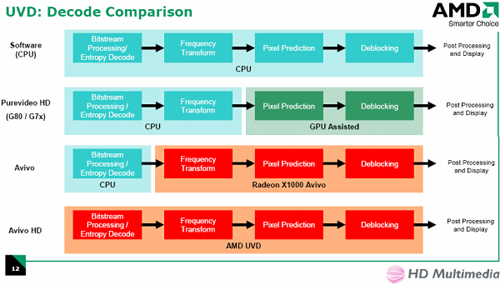

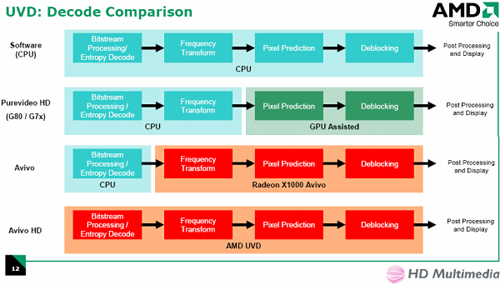

Earlier this summer we reviewed NVIDIA's VP2 hardware in the form of the 8600 GTS. The 8800 GTX and GTS both lacked the faster video decode hardware of the lower-end 8 Series hardware, but the 8800 GT changes all that. We now have a very fast GPU that includes full H.264 offload capability. Most of the VC-1 pipeline is also offloaded to the GPU, but the entropy coding stage of VC-1 is not hardware accelerated by NVIDIA hardware. This matters less for VC-1, as its decode process is much less strenuous. To recap the pipeline, here is a comparison of different video decode hardware:

NVIDIA's VP2 hardware matches the bottom line for H.264, and the line above for VC-1 and MPEG-2. This includes the 8800 GT.

We aren't including any new tests here, as we can expect performance on the same level as the 8600 GTS. This means a score of 100 under HD HQV, and very low CPU utilization even on lower end dual core processors.

Let's take a look at how this card stacks up against the rest of the lineup:

| Form Factor | 8800 GTX | 8800 GTS | 8800 GT | 8600 GTS |
| --- | --- | --- | --- | --- |
| Stream Processors | 128 | 96 | 112 | 32 |
| Texture Address / Filtering | 32 / 64 | 24 / 48 | 56 / 56 | 16 / 16 |
| ROPs | 24 | 20 | 16 | 8 |
| Core Clock | 575MHz | 500MHz | 600MHz | 675MHz |
| Shader Clock | 1.35GHz | 1.2GHz | 1.5GHz | 1.45GHz |
| Memory Clock | 1.8GHz | 1.6GHz | 1.8GHz | 2.0GHz |
| Memory Bus Width | 384-bit | 320-bit | 256-bit | 128-bit |
| Frame Buffer | 768MB | 640MB / 320MB | 512MB / 256MB | 256MB |
| Transistor Count | 681M | 681M | 754M | 289M |
| Manufacturing Process | TSMC 90nm | TSMC 90nm | TSMC 65nm | TSMC 80nm |
| Price Point | $500 - $600 | $270 - $450 | $199 - $249 | $140 - $199 |

On paper, the 8800 GT all but eliminates the point of the 8800 GTS. The 8800 GT has more shader processing power, can address and filter more textures per clock, and only falls short in the number of pixels it can write out to memory per clock and in overall memory bandwidth. Even then, the memory bandwidth advantage of the 8800 GTS isn't that great (64GB/s vs. 57.6GB/s), amounting to only 11%, because the 8800 GT's higher memory clock offsets much of its narrower bus. If the 8800 GT ends up performing as well as, if not better than, the 8800 GTS, then NVIDIA will have truly thrown down an amazing hand.
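For readers who want to check the on-paper comparison, here is a rough sketch that derives peak rates from the spec table. It assumes each peak rate is simply the number of units multiplied by the relevant clock, and that the listed memory clocks are effective data rates; the helper names are ours, and the shader figure is only a relative measure, not a FLOPS number.

```python
# Back-of-the-envelope peak rates from the spec table (illustrative helper names).
# Assumption: peak rate = number of units x clock; memory clocks are effective rates.
CARDS = {
    "8800 GTS": dict(sps=96, tex_filter=48, rops=20, core_mhz=500,
                     shader_mhz=1200, mem_mhz=1600, bus_bits=320),
    "8800 GT": dict(sps=112, tex_filter=56, rops=16, core_mhz=600,
                    shader_mhz=1500, mem_mhz=1800, bus_bits=256),
}


def memory_bandwidth_gbs(c):
    """Bus width in bytes x effective memory clock, in GB/s."""
    return c["bus_bits"] / 8 * c["mem_mhz"] / 1000


def texel_rate_gtex(c):
    """Texture filtering units x core clock, in GTexels/s."""
    return c["tex_filter"] * c["core_mhz"] / 1000


def pixel_rate_gpix(c):
    """ROPs x core clock, in GPixels/s."""
    return c["rops"] * c["core_mhz"] / 1000


def shader_rate(c):
    """Stream processors x shader clock (SP-GHz), a relative measure only."""
    return c["sps"] * c["shader_mhz"] / 1000


for name, c in CARDS.items():
    print(f"{name}: {memory_bandwidth_gbs(c):.1f} GB/s, "
          f"{texel_rate_gtex(c):.1f} GTexels/s, "
          f"{pixel_rate_gpix(c):.1f} GPixels/s, "
          f"{shader_rate(c):.1f} SP-GHz")

gts, gt = CARDS["8800 GTS"], CARDS["8800 GT"]
advantage = memory_bandwidth_gbs(gts) / memory_bandwidth_gbs(gt) - 1
print(f"GTS memory bandwidth advantage: {advantage:.1%}")
```

Under these assumptions the bandwidth figures match the 64GB/s and 57.6GB/s quoted above, and pixel output is the only other metric where the GTS stays ahead.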

You see, the GeForce 8800 GTS 640MB was an incredible performer upon its release, but it was still priced too high for the mainstream. NVIDIA turned up the heat with a 320MB version, which you'll remember performed virtually identically to the 640MB while bringing the price down to $300. With the 320MB GTS, NVIDIA gave us the performance of its $400 card for $300, and now with the 8800 GT, NVIDIA looks like it's going to give us that same performance for $200. And all this without a significant threat from AMD.

Before we get too far ahead of ourselves, we'll need to see how the 8800 GT and 8800 GTS 320MB really do stack up. On paper the decision is clear, but we need some numbers to be sure. And we can't get to the numbers until we cover a couple more bases. The only other physical point of interest about the 8800 GT is the fact that it takes advantage of the PCIe 2.0 specification. Let's take a look at what that really means right now.

90 Comments

DukeN - Monday, October 29, 2007

This is unreal price to performance - knock on wood; play oblivion at 1920X1200 on a $250 GPU.

Could we have a benchmark based on the Crysis demo please, how one or two cards would do?

Also, the power page pics do not show up for some reason (may be the firewall cached it incorrectly here at work).

Thank you.

Xtasy26 - Monday, October 29, 2007

Hey Guys,

If you want to see Crysis benchmarks, check out this link:

http://www.theinquirer.net/gb/inquirer/news/2007/1...

The benches are:

1280 x 1024 : ~ 37 f.p.s.

1680 x 1050 : 25 f.p.s.

1920 x 1080 : ~ 21 f.p.s.

This is on a test bed:

Intel Core 2 Extreme QX6800 @2.93 GHz

Asetek VapoChill Micro cooler

EVGA 680i motherboard

2GB Corsair Dominator PC2-9136C5D

Nvidia GeForce 8800GT 512MB/Zotac 8800GTX AMP!/XFX 8800Ultra/ATI Radeon HD2900XT

250GB Seagate Barracuda 7200.10 16MB cache

Sony BWU-100A Blu-ray burner

Hiper 880W Type-R Power Supply

Toshiba's external HD-DVD box (Xbox 360 HD-DVD drive)

Dell 2407WFP-HC

Logitech G15 Keyboard, MX-518 rat

Xtasy26 - Monday, October 29, 2007

This game seems really demanding. If it is getting 37 f.p.s. at 1280 x 1024, imagine what the frame rate will be with 4X FSAA enabled combined with 8X Anisotropic Filtering. I think I will wait till Nvidia releases their 9800/9600 GT/GTS and combine that with Intel's 45nm Penryn CPU. I want to play this beautiful game in all its glory! :)

Spuke - Monday, October 29, 2007

Impressive!!!! I read the article but I saw no mention of a release date. When's this thing available?

Spuke - Monday, October 29, 2007

Ummm.....When can I BUY it? That's what I mean.

EODetroit - Monday, October 29, 2007

Now.

http://www.newegg.com/Product/ProductList.aspx?Sub...

poohbear - Wednesday, October 31, 2007

when do u guys think its gonna be $250? cheapest i see is $270, but i understand when its first released the prices are jacked up a bit.

EateryOfPiza - Monday, October 29, 2007

I second the request for Crysis benchmarks, that is the game that taxes everything at the moment.

DerekWilson - Monday, October 29, 2007

we actually tested crysis ...but there were issues ... not with the game, we just shot ourselves in the foot on this one and weren't able to do as much as we wanted. We had to retest a bunch of stuff, and we didn't get to crysis.

yyrkoon - Monday, October 29, 2007

Yes, I am glad instead of purchasing a video card, I instead changed motherboard/CPU for Intel vs AMD. I still like my AM2 Opteron system a lot, but performance numbers, and the effortless 1Ghz OC on the ABIT IP35-E/(at $90usd !) was just too much to overlook.

I can definitely understand your 'praise' as it were when nVidia is now lowering their prices, but this is where these prices should have always been. nVidia, and ATI/AMD have been ripping us, the consumer off for the last 1.5 years or so, so you will excuse me if I do not show too much enthusiasm when they finally lower their prices to where they should be. I do not consider this to be much different than the memory industry over charging, and the consumer getting the shaft(as per your article).

I am happy though . . .