Intel's Larrabee Architecture Disclosure: A Calculated First Move

by Anand Lal Shimpi & Derek Wilson on August 4, 2008 12:00 AM EST. Posted in GPUs

Drilling Deeper and Making the AMD/NVIDIA Comparison

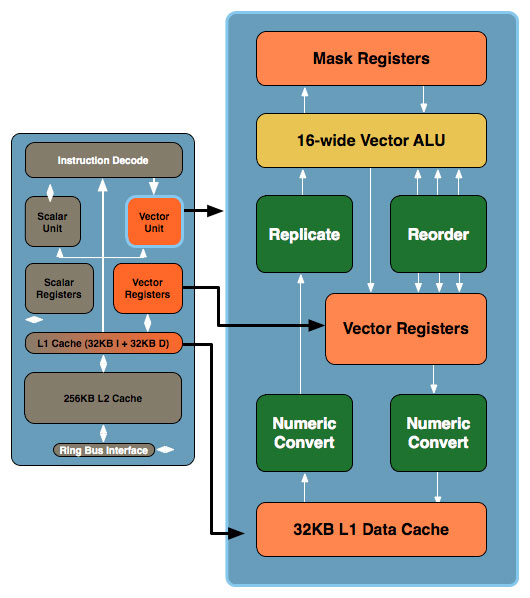

Don't be fooled by the initial diagram: this simple x86 core gets far more complex. In the image below, the block on the left is the Larrabee core we mentioned earlier; on the right we've blown up the vector unit and its associated parts:

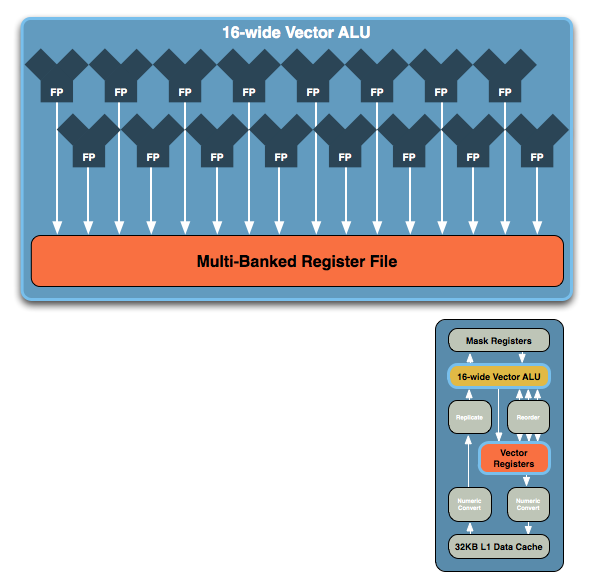

The vector unit is key and within that unit you've got a ton of registers and a very wide vector ALU, which leads us to the fundamental building block of Larrabee. NVIDIA's GT200 is built out of Streaming Processors, AMD's RV770 out of Stream Processing Units and Larrabee's performance comes from these 16-wide vector ALUs:

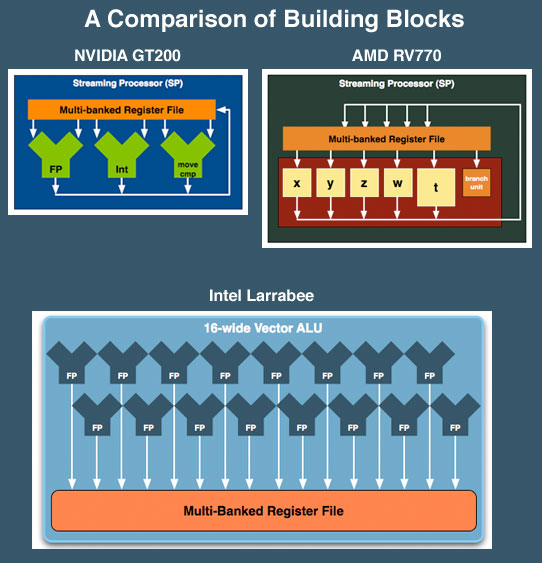

The vector ALU can behave as a 16-wide single precision ALU or an 8-wide double precision ALU, although that doesn't necessarily translate into equivalent throughput (which Intel would not clarify at this point). Compared to AMD and NVIDIA, here's how Larrabee looks at a basic execution unit level:

NVIDIA's SPs work on a single operation, AMD's can work on five, and Larrabee's vector unit can work on sixteen. NVIDIA has a couple hundred of these SPs in its high-end GPUs, AMD has 160 of its 5-wide units, and Intel is expected to have anywhere from 16 to 32 of these cores in Larrabee. If NVIDIA is on the tons-of-simple-hardware end of the spectrum, Intel is on the exact opposite end of the scale.
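To put those numbers side by side, here is a rough back-of-the-envelope sketch of the lane arithmetic. It only multiplies unit counts by unit widths from the figures above. The 240 used for GT200 is our assumption standing in for "a couple hundred", and the `Design` class and its field names are purely illustrative. The sketch says nothing about clock speeds, utilization or real throughput, none of which Intel has disclosed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Design:
    name: str
    units: int  # number of basic execution units
    width: int  # operations one unit can work on at once

    @property
    def lanes(self) -> int:
        # Total operations in flight per cycle, assuming perfect utilization.
        return self.units * self.width


designs = [
    Design("NVIDIA GT200 (assumed 240 SPs)", 240, 1),
    Design("AMD RV770", 160, 5),
    Design("Larrabee, 16 cores", 16, 16),
    Design("Larrabee, 32 cores", 32, 16),
]

# A 16-wide single precision vector holds 16 * 32 bits; the same bits
# hold half as many 64-bit doubles, matching the 8-wide double figure.
SINGLE_BITS, DOUBLE_BITS = 32, 64
vector_bits = 16 * SINGLE_BITS
doubles_per_vector = vector_bits // DOUBLE_BITS

for d in designs:
    print(f"{d.name}: {d.units} x {d.width} = {d.lanes} lanes")
print(f"{vector_bits}-bit vector = {doubles_per_vector} doubles")
```

Seen this way, the gap in raw lane counts is much smaller than the gap in unit counts suggests; the designs differ mainly in how those lanes are grouped and scheduled.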

We've already shown that AMD's architecture requires a lot of help from the compiler to properly schedule and maximize the utilization of its execution resources within one of its 5-wide SPs. With Larrabee, the importance of the compiler is tremendous. Luckily for Larrabee, some of the best (if not the best) compilers are made by Intel. If anyone could get away with this sort of an architecture, it's Intel.

At the same time, while we don't have a full understanding of the details yet, we get the idea that Larrabee's vector unit is sort of a chameleon. From the information we have, these vector units could execute atomic 16-wide ops for a single thread of a running program and can handle register swizzling across all 16 execution units. This implies something very AMD-like and wide. But it also looks like each of the 16 vector execution units can branch independently using the mask registers (looking much more like NVIDIA's solution).
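Since Intel hasn't detailed the instruction set, the following is only a conceptual sketch of how a mask register can make one wide operation behave like sixteen independent branches. It is generic predication written in plain Python, not Larrabee code: `masked_op`, `branch_per_lane` and `swizzle` are hypothetical names of our own, and the lists stand in for 16-wide vector registers.

```python
from typing import Callable, List, Sequence

WIDTH = 16  # lanes in one vector register, per the article


def masked_op(mask: Sequence[bool], op: Callable[[int], int],
              src: Sequence[int], dest: Sequence[int]) -> List[int]:
    """Apply op to lanes whose mask bit is set; other lanes keep dest."""
    return [op(s) if m else d for m, s, d in zip(mask, src, dest)]


def branch_per_lane(x: Sequence[int]) -> List[int]:
    """Per-lane 'if x < 0: y = -x else: y = 2 * x' without a real branch."""
    mask = [v < 0 for v in x]                # compare writes the mask
    y = list(x)
    y = masked_op(mask, lambda v: -v, x, y)  # 'then' side under the mask
    inverse = [not m for m in mask]
    y = masked_op(inverse, lambda v: 2 * v, x, y)  # 'else' side under its inverse
    return y


def swizzle(src: Sequence[int], pattern: Sequence[int]) -> List[int]:
    """Rearrange lanes: output lane i reads input lane pattern[i]."""
    return [src[i] for i in pattern]


lanes = list(range(-8, 8))  # 16 values, half of them negative
assert len(lanes) == WIDTH
print(branch_per_lane(lanes))
print(swizzle(lanes, list(reversed(range(WIDTH)))))
```

Both sides of the branch are issued for the whole vector and the mask decides which lanes actually take each result. That is why heavily divergent code wastes lanes, and why how much of this the compiler can hide matters so much.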

We've already seen how AMD's and NVIDIA's architectural differences show distinct advantages and disadvantages against each other in different games. If Intel is able to adapt the way the vector unit is used to suit specific situations, they could have something huge on their hands. Again, we don't have enough detail to tell what's going to happen, but things do look very interesting.

101 Comments

erikespo - Monday, August 4, 2008 - link

http://en.wikipedia.org/wiki/Square_%28geometry%29 helpful page to take you back to first grade

and excuse my decimal point.. it is 204.49mm total per core or 14.3mm^2

erikespo - Monday, August 4, 2008 - link

Explain. lets use smaller numbers for you 2mm^2 is 2mm by 2 mm or 4 total mm

double that and it is 4mm^2 or 4 mm by 4 mm or 16mm total..

we are talking about area or 2 dimensions not 1 dimension.

Same math applies to the article

MamiyaOtaru - Monday, August 4, 2008 - link

No, you're way off. 2mm² is TWO square millimeters. (a rectangle 1x2 for example). Double that would be 4mm², which could either be 1x4 or 2x2. NUMBERmm² doesn't mean NUMBERxNUMBER mm, it means exactly what it says: NUMBER mm².

Using your smaller numbers: 2mm² is not "4 total mm"; it is TWO mm². Saying it is 4 total mm doesn't even make sense. You _can't_ measure area in millimeters. You measure it in square millimeters, and there are two of them (_2_mm²).

Here's an mspaint visual (if links work: http://img105.imageshack.us/my.php?image=squaremma...

You're so sure you're right on this, it's really depressing :(

darkequitus - Monday, August 4, 2008 - link

I did not appreciate the writer creaming over every digital page they wrote, especially when Larrabee's performance is mainly at the moment based on Intel hype and nothing real.

ZootyGray - Monday, August 4, 2008 - link

THANK YOU. Somebody finally said it.

The others prefer Eutopian illusion - aka the curse aka ntel antitrust. ntel has no grafx and the fools in the public buy "inside' and nvid and ati aren't exactly friends of the curse.

welcome to the matrix. wakey wakey

ZootyGray - Monday, August 4, 2008 - link

and a 16 pager on maybe might could be should be = wannabe "employ-boy"- payday ? hooyeh. This is so disappointing for me. Credibility sags to a new low.

strikeback03 - Tuesday, August 5, 2008 - link

Someone whose two posts contain about 10 complete words and no complete thoughts says Anandtech's credibility has sagged to a new low?

ZootyGray - Tuesday, August 5, 2008 - link

haha yeh - lots of room for thinking. or - if no thinkeez - ya gots der 16 pg inundation (that's a big word like marmalade) all based on nothing-is-real - you like that kind of brainwash? we don't know anything; but here's the tekspex?

btw - did u get it? the matrix idea? watch the movie. cos here it is. pardon my loaded cryptic literacy.

thx

if you don't get it - well, that's what they want - a world of sleeping mob. never mind, that's just my concern.

The Preacher - Monday, August 4, 2008 - link

I don't really care about how good it will be executing some software renderer but I feel it is going to kick ass in scientific calculations. Matrix operations, FFT/convolution, tremendous bandwidth, double precision... I may write C++/x86 assembly code directly for it and I may put this into a rack of servers and use it through MPI. Give me a compiler with vector intrinsic functions for it and my dreams just came true! :)

elerick - Monday, August 4, 2008 - link

I have been a daily reader of another hardware review site for years. I read nearly every article that headlines and find many of them quite lacking. Today I got wind of your review for the Larrabee. It was very well written and produced an amazing amount of tech knowledge not really commonly reviewed. I'm glad to have found this site, and I never create an account but today I felt obligated to. Great work.

PS: any news on that AMD / Fusion? or is that just them being intimidated by Intel's Larrabee?