Intel's 32nm Update: The Follow-on to Core i7 and More

by Anand Lal Shimpi on February 11, 2009 12:00 AM EST. Posted in CPUs

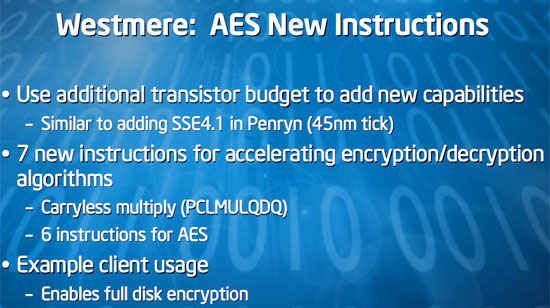

Westmere’s New Instructions

Much like Penryn and its new SSE4.1 instructions, Westmere adds seven new instructions to those already in Core i7. These instructions are specifically focused on accelerating encryption/decryption algorithms. There’s a single carryless multiply instruction (PCLMULQDQ...I love typing that) and six AES instructions.

Intel cites hardware-accelerated full disk encryption as an example of where these instructions are needed. With the new instructions being driven into the mainstream first, we’ll probably see quicker than usual software adoption.
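For readers wondering what a carryless multiply actually computes, here is a minimal software sketch in Python. It is an illustration of the arithmetic only, not Intel's implementation: the function names `clmul` and `clmul64` are hypothetical, and the real PCLMULQDQ instruction does this in hardware on 64-bit operands selected from 128-bit registers, producing a 128-bit result. The idea is the same as ordinary shift-and-add multiplication, except that partial products are combined with XOR, so no carries propagate between bit positions.

```python
def clmul(a: int, b: int) -> int:
    """Carry-less product of two non-negative integers.

    Each integer is treated as a polynomial over GF(2), one coefficient
    per bit. Partial products are combined with XOR instead of addition.
    """
    if a < 0 or b < 0:
        raise ValueError("operands must be non-negative")
    result = 0
    while b:
        if b & 1:        # this bit of b selects a shifted copy of a
            result ^= a  # XOR in place of add: no carry between bits
        a <<= 1
        b >>= 1
    return result


MASK64 = (1 << 64) - 1


def clmul64(a: int, b: int) -> int:
    """Hypothetical helper: carry-less product of the low 64 bits of a and b.

    Mirrors the operand width of the hardware instruction; the result
    fits in 127 bits.
    """
    return clmul(a & MASK64, b & MASK64)


if __name__ == "__main__":
    # (x + 1) * (x + 1) = x^2 + 1 over GF(2), whereas ordinary 3 * 3 = 9
    print(bin(clmul(0b11, 0b11)))
```

A bit-at-a-time loop like this is exactly the kind of work a dedicated instruction replaces, which is why carryless multiply matters for things like the GHASH step in AES-GCM.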

Final Words

What is there to say other than: it’s a healthy roadmap. The only casualty I’ve seen is Havendale, but I’d gladly trade Havendale for a 32nm version. But let’s get down to what this means for what you should buy and when.

At the very high end, Core i7 users have little reason to worry. While Intel is expected to bump i7 up to 3.33GHz in the near future, nothing below i7 looks threatening in 2009. Moving into 2010, the 6-core 32nm i7 successor should be extremely powerful. Intel’s strategy with LGA-1366 makes a lot of sense: if you want more cores, this is the platform you’re going to have to be on.

Now, although I said that nothing will threaten Core i7 this year, you may be able to get i7-like performance out of Lynnfield in the second half. A quad-core Lynnfield running near 3GHz should offer much of the performance of an i7 at a lower platform cost. Remember back to our original i7 review; we didn’t find a big performance benefit from three channels of DDR3 versus two.

Lynnfield is on track for a 2H 2009 introduction and if you’re unable to make the jump to i7 now, you’ll probably be able to get i7-like performance out of Lynnfield in about 6 months. Intel did mention that the most overclockable processors would go into the LGA-1366 socket. Combine better overclockability with the promise of 6 cores in the future and it seems like LGA-1366 is shaping up to be a platform that’s going to stick around despite cheaper alternatives.

The 32nm Clarkdale/Arrandale parts arriving by the end of this year really mean one very important thing: the time to buy a new notebook will be either Q4 2009 or Q1 2010. A 2-core, 4-thread 32nm Westmere derivative is not only going to put current Penryn cores to shame, it’s going to be extremely power efficient. In its briefing yesterday, Intel mentioned that while Clarkdale/Arrandale clock speeds and TDPs would be similar to what we have today, you’ll be getting much more performance. Seeing what we’ve seen thus far with Nehalem, I’d say a 2-core, 32nm version in a notebook is going to be reason enough for you to want to upgrade.

If I had to build a new desktop today I’d go Core i7 and think about upgrading to a 6-core version sometime next year. If I couldn’t or didn’t need to build today, then the thing to wait for is Lynnfield. Four cores that should deliver i7-like performance just can’t be beat, and platform costs will be much cheaper by then (expect ~$100 motherboards and near price parity between DDR3 and DDR2).

On the mainstream quad-core side, it may not make sense to try to upgrade to 32nm quad-core until Sandy Bridge at the end of 2010. If you buy Lynnfield this year, chances are that you won’t feel a need to upgrade until late 2010/2011.

On the notebook side, if I needed one today I’d buy whatever I could, keeping in mind that within a year I’m going to want to upgrade. I mentioned this in much of my recent Mac coverage; if you bought a new MacBook, it looks great, but the one you’re going to really want will be here in about a year.

We owe Intel a huge thanks for being so forthcoming with its roadmaps. It’s going to be a good couple of years for performance.

64 Comments

blyndy - Wednesday, February 11, 2009

Let me see if I've got this straight: in 2H'09 (I would actually bet Q3'09) we will finally see the Core i5 quad-cores (Lynnfield/Clarksfield) on a new LGA-1156 socket, which should have been released in Dec'08.

So the 45nm Core i5 quads will be the highest-performing CPUs available for LGA-1156, positioned above the 32nm Clarkdale/Arrandale dual-cores (the 'Core i5 Duo' maybe?), which arrive in Q4'09.

How do they intend to make the LGA-1366 platform have better overclockability? i7 and i5 are almost the same. Are they going to actively prevent OC'ing on i5? That would be ridiculous.

Somehow I don't think that the artificial socket segmentation will herd a significant number of enthusiasts into LGA-1366 and make it the higher-margin cash cow that Intel has planned it to be.

Triple Omega - Sunday, February 15, 2009

Intel isn't going to artificially limit overclocking directly, but it is doing so indirectly by redirecting the better chips to 1366. So the i7 CPUs will be cherry-picked versions of the i5s and thus will overclock better. Besides that, the only socket with Extreme versions will be 1366. (Though that is a niche within a niche, really.)

philosofool - Wednesday, February 11, 2009

Overclockers are a very small fraction of the market. I'm not even sure Intel is thinking about overclockability when they engineer chips. Overclockability is more an artefact of good engineering than a design goal from the outset. Overclockers are always paranoid that Intel or AMD is out to get them by intentionally crippling chips. There just aren't enough of us for Intel to be concerned. We're like 1% of the total CPU market.

Pretty much every chip that Intel has released at any price point since the introduction of Core 2 has been wonderfully overclockable. I wouldn't worry that Intel is going to change that soon, especially since Core i5 is basically just a mainstream processor with the same design fundamentals as the excellent i7.

JonnyDough - Wednesday, February 11, 2009

Although I understand it's a hobby, I don't care if people can overclock or not. As long as we have fast chips at a good price and they're faster than what we have...I mean, why would you care? Isn't it all about SPEED?

ssj4Gogeta - Wednesday, February 11, 2009

i5 probably won't have an extreme version.

Bezado11 - Wednesday, February 11, 2009

I wouldn't be surprised if the opposite was true. I'm really sick of all the hype that shrinking creates less heat. Look at the GPU industry: ever since they started shrinking, things got hotter and hotter. And now it seems with i7, even though it's not a die shrink and we are used to 45nm by now, the new hardware to support minor changes in the architecture of the CPU seems to make things run hotter.

i7 is way too hot. The newest GPUs run way too hot.

Lightnix - Thursday, February 12, 2009

But you're making an unfair comparison: the current latest GPUs have only ever been produced on the newest nodes. Now, if we take for example a Radeon 3870 vs. a Radeon 2900 XT, the former draws far less power and will overclock better on air, almost directly as a result of shrinking from an 80nm to a 55nm process, despite the two performing about the same. Another example is the Core 2 E8000 series and E6000 series. Despite the increase in cache size, the E8000 dissipates little enough heat that Intel can ship it with a very tiny heatsink compared to the earlier 65nm cores, and objectively it draws much less power at the same clock speed because it runs at lower voltage.

You can see this sort of thing again and again throughout the technology industry: Coppermine (180nm) -> Tualatin (130nm), GeForce 7800 -> 7900, G80 -> G92, etc., etc.

If you were to compare, say, a GTX280 to an 8800 GTX and say the former draws much more power than the 8800 GTX AND it's produced on a smaller process, well, yes, but that's because they've clocked it higher and there are far more transistors (twice as many, in fact).

Mr Perfect - Thursday, February 12, 2009

That's because every time they shrink the chips they pack in new features and push the clock speed to the bleeding edge. If all they did was die shrink the old tech, we'd all be running something like an Atom CPU right now. Atoms closely resemble Pentium 3s, but on modern manufacturing only draw what? 5 watts?

V3ctorPT - Wednesday, February 11, 2009

The GPUs run hotter because, with each new shrink, they pack in double the transistors of their previous HW... Reducing the manufacturing process lets us have so many more transistors in the same space; of course it gets hot...

JonnyDough - Wednesday, February 11, 2009

I think you need to re-read the manufacturing roadmap page. It details the leakage gain (heat).