ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST

Posted in GPUs

The Latest CUDA App: MotionDSP’s vReveal

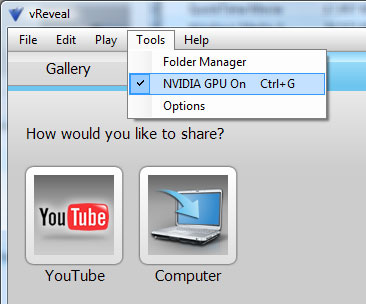

NVIDIA had more slides in its GTX 275 presentation about non-gaming applications than it did about how the 275 performed in games. One such application is MotionDSP’s vReveal - a CUDA-enabled video post-processing application that can clean up poorly recorded video.

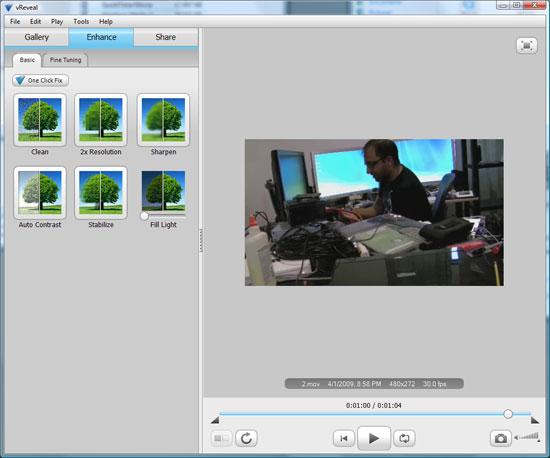

The application’s interface is simple:

Import your videos (anything with a supported codec on your system pretty much) and then select enhance.

You can auto-enhance with a single click (super useful) or even go in and tweak individual sliders and settings on your own in the advanced mode.

The changes you make to the video are visible on the fly, but the real-time preview is faster on an NVIDIA GPU than if you rely on the CPU alone.

When you’re all done, simply hit save to disk and the video will be re-encoded with the proper changes. The encoding process takes place entirely on the GPU but it can also work on a CPU.

First let’s look at the end results. We took three videos: one recorded using Derek’s wife’s BlackBerry and two that I shot in my office on a Canon HD cam (but at low res).

I relied on vReveal’s auto tune to fix the videos and I’ve posted the originals and vReveal versions on YouTube. The videos are below:

In every single instance, the resulting video looks better. While it’s not quite the technology you see in shows like 24, it does make your videos look better and it does do it pretty quickly. There’s no real support for video editing here and I’m not familiar enough with the post processing software market to say whether or not there are better alternatives, but vReveal does do what it says it does. And it uses the GPU.

Performance is also very good on even a reasonably priced GPU. It took 51 seconds for the GeForce GTX 260 to save the first test video; it took my Dell Studio XPS 435’s Core i7 920 just over 3 minutes to do the same task.
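To put those two times side by side, here is a quick back-of-the-envelope sketch. The 51 seconds comes straight from the test above; the CPU time was only "just over 3 minutes", so the 180 seconds used here is an assumed lower bound, and the helper name `speedup` is purely illustrative.

```python
def speedup(cpu_seconds: float, gpu_seconds: float) -> float:
    """Return how many times faster the GPU run finished than the CPU run."""
    if gpu_seconds <= 0:
        raise ValueError("gpu_seconds must be positive")
    return cpu_seconds / gpu_seconds


GPU_SECONDS = 51.0          # GeForce GTX 260, measured time from the text
CPU_SECONDS_FLOOR = 180.0   # assumption: "just over 3 minutes" is at least 180 s

ratio = speedup(CPU_SECONDS_FLOOR, GPU_SECONDS)
print(f"The GPU is at least {ratio:.1f}x faster on this clip")
```

Because the CPU figure is a floor, the real gap on this clip is somewhat larger than the number printed.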

It’s a neat application. It works as advertised, but it only works on NVIDIA hardware. Will it make me want to buy an NVIDIA GPU over an ATI one? Nope. If all things are equal (price, power and gaming performance) then perhaps. But if ATI provides a better gaming experience, I don’t believe it’s compelling enough.

First, the software isn’t free - it’s an added expense. Badaboom costs $30, vReveal costs $50. It’s not the most expensive software in the world, but it’s not free.

And secondly, what happens if your next GPU isn’t from NVIDIA? While vReveal will continue to work, you no longer get GPU acceleration. A vReveal-like app written in OpenCL will work on all three vendors’ hardware, as long as they support OpenCL.
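The lock-in argument can be written down as a tiny decision rule. This is only a sketch of the reasoning above, not how vReveal or any real driver decides anything; the function name `uses_gpu` and its string arguments are hypothetical.

```python
def uses_gpu(app_api: str, gpu_vendor: str, vendor_supports_opencl: bool = False) -> bool:
    """Return True if an app built on app_api runs GPU-accelerated on this card.

    Hypothetical model of the argument in the text: a CUDA app is only
    accelerated on NVIDIA hardware, while an OpenCL app is accelerated on
    any vendor's hardware as long as that vendor supports OpenCL.
    """
    api = app_api.lower()
    vendor = gpu_vendor.lower()
    if api == "cuda":
        return vendor == "nvidia"
    if api == "opencl":
        return vendor_supports_opencl
    raise ValueError(f"unknown API: {app_api}")


# A CUDA app keeps working after a switch to ATI, but falls back to the CPU.
print(uses_gpu("cuda", "ati"))
# A hypothetical OpenCL version stays accelerated if the vendor ships OpenCL support.
print(uses_gpu("opencl", "ati", vendor_supports_opencl=True))
```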

If NVIDIA really wants to take care of its customers, it can start by giving away vReveal (and Badaboom) to people who purchase these high-end graphics cards. If you want to add value, don’t tell users that they should want these things; give the software to them. The burden of proof is on NVIDIA to show that these CUDA-enabled applications are worth supporting rather than waiting for cross-vendor OpenCL versions.

Do you feel any differently?

294 Comments


evilsopure - Thursday, April 2, 2009 - link

Update: I guess Anand was making his updates while I was making my post, so the "marginal leader at this new price point of $250" line is gone and the Final Words actually now reflect my own personal conclusion above.

Anand Lal Shimpi - Thursday, April 2, 2009 - link

I've updated the conclusion, we agree :)

-A

SiliconDoc - Monday, April 6, 2009 - link

You agree now that NVidia has moved their driver to the 2650 rez to win, since for months on end, you WHINED about NVidia not winning at the highest rez, even though it took everything lower.

So of COURSE, now is the time to claim 2650 doesn't matter much, and suddenly ROOT for RED at lower resolutions.

If Nvidia screws you out of cards again, I certainly won't be surprised, because you definitely deserve it.

Thanks anyway for changing Derek's 6 month plus long mindset where only the highest resolution mattered, as he had been ranting and red raving how wonderful they were.

That is EXACTLY WHY his brain FARTED, and he declared NVidia the top dog - it's how he's been doing it for MONTHS.

So good job there, you BONEHEAD - you finally caught the bias, just when the red rooster cards FAILED at that resolution.

Look in the mirror - DUMMY - maybe you can figure it out.

7Enigma - Thursday, April 2, 2009 - link

Check the article again. Anand edited it and it is now very clear and concise.

7Enigma - Thursday, April 2, 2009 - link

Bah, internet lag. Ya got there first.... :)

sublifer - Thursday, April 2, 2009 - link

As I predicted elsewhere, they probably should have named this new card the GTX 281. In almost every single benchmark and resolution it beats the 280. In one case it even beat the 285 somehow.

/Gripe

That said, Go AMD! I wanna check other sites and see if they benched with the card highly over-clocked. One site got 950 core and 1150 memory easily but they didn't include it on the graphs :(

Anand Lal Shimpi - Thursday, April 2, 2009 - link

Hey guys, I just wanted to chime in with a few fixes:

1) I believe Derek used the beta Catalyst driver that ATI gave us with the 4890, not the 8.12 hotfix. I updated the table to reflect this.

2) Power consumption data is now in the article as well, 2nd to last page.

3) I've also updated the conclusion to better reflect the data. What Derek was trying to say is that the GTX 275 vs. 4890 is more of a wash at 2560 x 1600, which it is. At lower than 2560 x 1600 resolutions, the 4890 is the clear winner, losing only a single test.

Thank you for all the responses :)

Take care,

Anand

7Enigma - Thursday, April 2, 2009 - link

Thank you Anand for the update and the article changes. I think that will quell most of the comments so far (mine included).

Could you possibly comment on the temps posted earlier in the comments section? My question is whether there are significant changes with the fan/heatsink between the stock 4870 and the 4890. The idle and load temps of the 4890 are much lower, especially when the higher frequency is taken into consideration.

Also a request to describe the differences between the 4890 and the 4870 (several comments allude to a respin that would account for the higher clocks, lower temp, different die size).

Thank you again for all of your hard work (both of you).

Warren21 - Thursday, April 2, 2009 - link

Yeah, I would also second a closer comparison between RV790 and RV770, or at least mention it. It's got new power phases, different VRM (7-phase vs 5-phase respectively), slightly redesigned core (AT did mention this) and features a revised HS/F.

VooDooAddict - Thursday, April 2, 2009 - link

I was very happy to see the PhysX details. I'd started worrying I might be missing out with my 4870. It's clear now that I'm not missing out on PhysX, but might be missing out on some great encoding performance with CUDA.

I'll be looking forward to your SLI / Crossfire followup. Hoping to see some details about performance with ultra high Anti-Aliasing that's only available with SLI/Crossfire. I used to run two 4850s and enjoyed the high-end Edge Antialiasing. Unfortunately the pair of 4850s were too much heat in a tiny shuttle case so I had to switch out to a 4870.

Your review reinforced something that I'd been feeling about the 4800s. There isn't much to complain about when running 1920x1200 or lower with modest AA. They seem well positioned for most gamers out there. For those out there with 30" screens (or lusting after them, like myself)... while the GTX280/285 has a solid edge, one really needs SLI/Crossfire to drive 30" well.