ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST - Posted in GPUs

The Widespread Support Fallacy

NVIDIA acquired Ageia, the guys who wanted to sell you another card to put in your system to accelerate game physics - the PPU. That idea didn’t go over too well. For starters, no one wanted another *PU in their machine. Secondly, there were no compelling titles that required it. At best we saw mediocre games with mildly interesting physics support, or decent games with uninteresting physics enhancements.

Ageia’s true strength wasn’t in its PPU chip design; many companies could do that. What Ageia did that was quite smart was acquire an up-and-coming game physics API, polish it up, and give it away for free to developers. The physics engine was called PhysX.

Developers can use PhysX, for free, in their games. There are no strings attached, no licensing fees, nothing. If the developer wants support there are fees, of course, but it’s a great way of cutting down development costs. The physics engine in a game is responsible for all modeling of Newtonian forces within the game; the engine determines how objects collide, how gravity works, etc.
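To make that last point concrete, here is a minimal sketch of the kind of work a physics engine does each frame: apply gravity, move the object, and resolve a collision with the ground. It is written in Python for readability and is not PhysX code or its API; the names `Body`, `step`, and `simulate`, the restitution value, and the 60 Hz time step are all assumptions made for illustration.

```python
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2 along the vertical axis (assumed constant)


@dataclass
class Body:
    """A hypothetical point object that can fall and bounce on the ground."""
    height: float              # metres above the ground plane
    velocity: float            # vertical velocity in m/s (positive is up)
    restitution: float = 0.5   # fraction of speed kept after a bounce (assumed)


def step(body: Body, dt: float) -> None:
    """Advance one body by one time step using semi-implicit Euler."""
    # Gravity changes the velocity first, then the new velocity moves the body.
    body.velocity += GRAVITY * dt
    body.height += body.velocity * dt

    # Collision with the ground: clamp the position and reflect the velocity,
    # losing some energy according to the restitution factor.
    if body.height < 0.0:
        body.height = 0.0
        body.velocity = -body.velocity * body.restitution


def simulate(body: Body, steps: int, dt: float = 1.0 / 60.0) -> Body:
    """Run a fixed number of steps, roughly one per rendered frame at 60 Hz."""
    for _ in range(steps):
        step(body, dt)
    return body
```

A real engine does this for thousands of objects with full 3D collision shapes, which is why the work is attractive to offload to a PPU or GPU.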

If developers wanted to, they could enable PPU accelerated physics in their games and do some cool effects. Very few developers wanted to because there was no real install base of Ageia cards and Ageia wasn’t large enough to convince the major players to do anything.

PhysX, being free, was of course widely adopted. When NVIDIA purchased Ageia what they really bought was the PhysX business.

NVIDIA continued offering PhysX for free, but it killed off the PPU business. Instead, NVIDIA worked to port PhysX to CUDA so that it could run on its GPUs. The same catch-22 from before still exists: developers don’t have to include GPU accelerated physics, and most don’t because they don’t like alienating their non-NVIDIA users. It’s all about hitting the largest audience and not everyone can run GPU accelerated PhysX, so most developers don’t use that aspect of the engine.

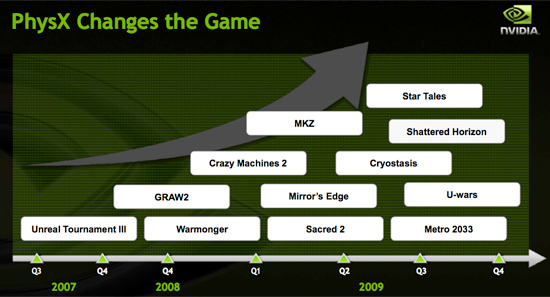

Then we have NVIDIA publishing slides like this:

Indeed, PhysX is one of the world’s most popular physics APIs - but that does not mean that developers choose to accelerate PhysX on the GPU. Most don’t. The next slide paints a clearer picture:

These are the biggest titles NVIDIA has with GPU accelerated PhysX support today. That’s 12 titles, three of which are big ones. Most of the rest, well, I won’t go there.

A free physics API is great, and all indicators point to PhysX being liked by developers.

The next several slides in NVIDIA’s presentation go into detail about how GPU accelerated PhysX is used in these titles and how poorly ATI performs when GPU accelerated PhysX is enabled (because ATI can’t run CUDA code on its GPUs, the GPU-friendly code must run on the CPU instead).

We normally hold manufacturers accountable for their performance claims. It was about time we did something about these other claims - shall we?

Our goal was simple: we wanted to know if the GPU accelerated PhysX effects in these titles were useful. And if they were, would they be enough to make us pick an NVIDIA GPU over an ATI one if the ATI GPU was faster?

To accomplish this I had to bring in an outsider. Someone who hadn’t been subjected to the same NVIDIA marketing that Derek and I had. I wanted someone impartial.

Meet Ben:

I met Ben in middle school and we’ve been friends ever since. He’s a gamer of the truest form. He generally just wants to come over to my office and game while I work. The relationship is rarely harmful; I have access to lots of hardware (both PC and console) and games, and he likes to play them. He plays while I work and isn't very distracting (except when he's hungry).

These past few weeks I’ve been far too busy for even Ben’s quiet gaming in the office. First there were SSDs, then GDC and then this article. But when I needed someone to play a bunch of games and tell me if he noticed GPU accelerated PhysX, Ben was the right guy for the job.

I grabbed a Dell Studio XPS I’d been working on for a while. It’s a good little system, the first sub-$1000 Core i7 machine in fact ($799 gets you a Core i7-920 and 3GB of memory). It performs similarly to my Core i7 testbeds so if you’re looking to jump on the i7 bandwagon but don’t feel like building a machine, the Dell is an alternative.

I also set up its bigger brother, the Studio XPS 435. Personally I prefer this machine; it’s larger than the regular Studio XPS, albeit more expensive. The larger chassis makes working inside the case and upgrading the graphics card a bit more pleasant.

My machine of choice; I couldn't let Ben have the faster computer.

Both of these systems shipped with ATI graphics; obviously that wasn’t going to work. I decided to pick midrange cards to work with: a GeForce GTS 250 and a GeForce GTX 260.

294 Comments


piesquared - Thursday, April 2, 2009

Must be tough trying to write a balanced review when you clearly favour one side of the equation. Seriously, you toe NV's line without hesitation, including soon to be extinct physx, a reviewer released card, and unreleased drivers at the time of your review. And here's the kicker; you ignore the OC potential of AMD's new card, which as you know, is one of its major selling points. Could you possibly bend over any further for NV? Obviously you are perfectly willing to do so. F'n frauds

Chlorus - Friday, April 3, 2009

What?! Did you even read the article? They specifically say they cannot really endorse PhysX or CUDA and note the lack of support in any games. I think you're the one towing a line here.

SiliconDoc - Monday, April 6, 2009

The red fanboys have to chime in with insanities so the reviewers can claim they're fair because "both sides complain". Yes, red rooster whiner never read the article, because if he had he would remember the line that neither overclocked well, and that overclocking would come in a future review ( in other words, they were rushed again, or got a chum card and knew it - whatever ).

So, they didn't ignore it , they failed on execution - and delayed it for later, so they say.

Yeah, red rooster boy didn't read.

tamalero - Thursday, April 9, 2009

jesus dude, you have a strong persecution complex right? its like "ohh noes, they're going against my beloved nvidia, I MUST STOP THEM AT ALL COSTS".

I wonder how much nvidia pays you? ( if not, you're sad.. )

SiliconDoc - Thursday, April 23, 2009

That's interesting, not a single counterpoint, just two whining personal attacks. Better luck next time - keep flapping those red rooster wings.

(You don't have any decent counterpoints to the truth, do you flapper ? )

Sometimes things are so out of hand someone has to say it - I'm still waiting for the logical rebuttals - but you don't have any, neither does anyone else.

aguilpa1 - Thursday, April 2, 2009

All these guys talking about how irrelevant physx and how not so many games use it don't get it. The power of physx is bringing the full strength of those GPU's to bear on everyday apps like CS4 or Badaboom video encoding. I used to think it was kind of gimmicky myself until I bought the "very" inexpensive badaboom encoder and wow, how awesome was that! I forgot all about the games.

Rhino2 - Monday, April 13, 2009

You forgot all about gaming because you can encode video faster? I guess we are just 2 different people. I don't think I've ever needed to encode a video for my ipod in 60 seconds or less, but I do play a lot of games.

z3R0C00L - Thursday, April 2, 2009

You're talking about CUDA not Physx. Physx is useless as HavokFX will replace it as a standard through OpenCL.

sbuckler - Thursday, April 2, 2009

No physx has the market, HavokFX is currently demoing what physx did 2 years ago. What will happen is the moment HavokFX becomes anything approaching a threat nvidia will port Physx to OpenCL and kill it.

As far as ATI users are concerned the end result is the same - you'll be able to use physics acceleration on your card.

z3R0C00L - Thursday, April 2, 2009

You do realize that Havok Physics are used in more games than Physx right (including all the source engine based games)? And that Diablo 3 makes use of Havok Physics right? Just thought I'd mention that to give you time to change your conclusion.