ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST - Posted in GPUs

The Latest CUDA App: MotionDSP’s vReveal

NVIDIA had more slides in its GTX 275 presentation about non-gaming applications than it did about how the 275 performed in games. One such application is MotionDSP’s vReveal - a CUDA-enabled video post-processing application that can clean up poorly recorded video.

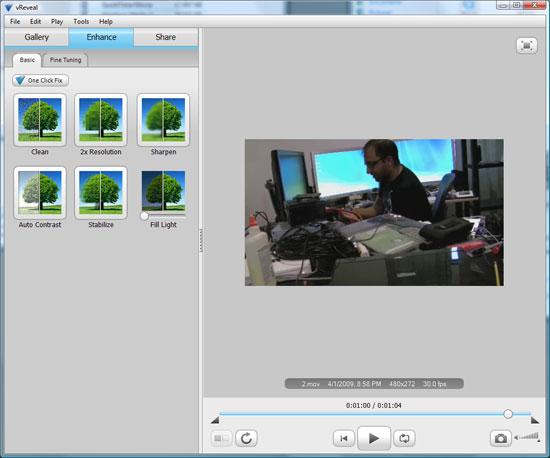

The application’s interface is simple:

Import your videos (pretty much anything your system has a supported codec for) and then select enhance.

You can auto-enhance with a single click (super useful) or even go in and tweak individual sliders and settings on your own in the advanced mode.

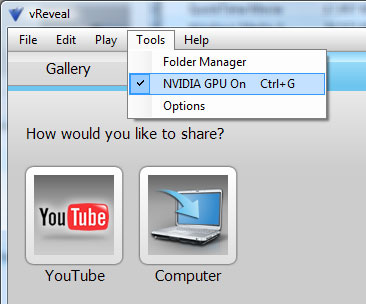

The changes you make to the video are visible on the fly, but the real-time preview is faster on an NVIDIA GPU than if you rely on the CPU alone.

When you’re all done, simply hit save to disk and the video will be re-encoded with the proper changes. The encoding process takes place entirely on the GPU, though it can also run on the CPU.

First, let’s look at the end results. We took three videos: one recorded using Derek’s wife’s BlackBerry and two that I shot in my office on a Canon HD cam (but at low res).

I relied on vReveal’s auto tune to fix the videos and I’ve posted the originals and vReveal versions on YouTube. The videos are below:

In every single instance, the resulting video looks better. While it’s not quite the technology you see in shows like 24, it does make your videos look better and it does do it pretty quickly. There’s no real support for video editing here and I’m not familiar enough with the post processing software market to say whether or not there are better alternatives, but vReveal does do what it says it does. And it uses the GPU.

Performance is also very good on even a reasonably priced GPU. It took the GeForce GTX 260 51 seconds to save the first test video; my Dell Studio XPS 435’s Core i7 920 took just over 3 minutes to do the same task.
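To put those two timings in perspective, here is a rough sketch of the speedup they imply. The 180-second CPU figure is an assumption: the text only says "just over 3 minutes," so the result is a lower bound rather than a measured ratio.

```python
# Timings quoted in the text for saving the first test video.
GPU_SECONDS = 51  # GeForce GTX 260

# Assumption: "just over 3 minutes" is treated as a lower bound of 180 s;
# the exact Core i7 920 time is not given.
CPU_SECONDS_LOWER_BOUND = 3 * 60


def speedup(cpu_seconds: float, gpu_seconds: float) -> float:
    """Return how many times faster the GPU run is than the CPU run."""
    return cpu_seconds / gpu_seconds


if __name__ == "__main__":
    ratio = speedup(CPU_SECONDS_LOWER_BOUND, GPU_SECONDS)
    print(f"GPU speedup: at least {ratio:.1f}x")
```

Under that assumption, the GTX 260 finishes the save roughly three and a half times faster than the CPU alone.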

It’s a neat application. It works as advertised, but it only works on NVIDIA hardware. Will it make me want to buy an NVIDIA GPU over an ATI one? Nope. If all things are equal (price, power and gaming performance) then perhaps. But if ATI provides a better gaming experience, I don’t believe it’s compelling enough.

First, the software isn’t free - it’s an added expense. Badaboom costs $30, vReveal costs $50. It’s not the most expensive software in the world, but it’s not free.

And secondly, what happens if your next GPU isn’t from NVIDIA? While vReveal will continue to work, you no longer get GPU acceleration. A vReveal-like app written in OpenCL will work on all three vendors’ hardware, as long as they support OpenCL.

If NVIDIA really wants to take care of its customers, it can start by giving away vReveal (and Badaboom) to people who purchase these high end graphics cards. If you want to add value, don’t tell users that they should want these things; give the software to them. The burden of proof is on NVIDIA to show that these CUDA-enabled applications are worth supporting rather than waiting for cross-vendor OpenCL versions.

Do you feel any differently?

294 Comments


SiliconDoc - Monday, April 6, 2009 - link

Oh great, a whole other sku to lose another billion a year with. Wonderful. Any word on the new costs of the bigger cpu and expensive capacitors and vrm upgrades ?

Ahh, nevermind, heck, this ain't a green greedy monster card, screw it if they lose their shirts making it - I mean there's no fantasy satisfaction there.

Get back to me on the nvidia costs - so I can really dream about them losing money.

itbj2 - Thursday, April 2, 2009 - link

I am not sure about you guys but NVIDIA has problems with their drivers as well. I have a 9400GT and a 8800 GTS in my machine and the new drivers can't make the two work well enough for my computer to come out of hibernation without Windows XP crashing every so often. This used to work just fine before I upgraded the drivers to the latest version.

FishTankX - Thursday, April 2, 2009 - link

For anyone who REALLY wants temp data..

Firingsquad 4890/GTX275 review

http://www.firingsquad.com/hardware/ati_radeon_489...

Idle

GTX 260 216 (45C)

GTX 285 (46C)

GTX 275 (47C)

4890 1GB (51C)

4870 (60C)

Load

4890 1GB (64C)

GTX 260 216 (64C)

GTX 275 (68C)

GTX 285 (70C)

4870 1GB (80C)

Power consumption

(Total system power)

Idle

GTX 275 (143W)

4890 (172W)

Load

4890 (276W)

GTX 275 (279W)

There, now you can can it! :D

SiliconDoc - Monday, April 6, 2009 - link

There it is again, 30 watts less idle for nvidia, and only 3 watts more in 3d. NVIDIA WINS - that's why they left it out - they just couldn't HANDLE it....

So, if you're 3d gaming 91% of the time, and only 2d surfing 9% of the time, the ati card comes in at equal power usage...

Otherwise, it LOSES - again.

I doubt the red raging reviewers can even say it. Oh well, thanks for posting numbers.
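The 91% break-even figure can be checked against the FiringSquad total-system power numbers quoted earlier in the thread. This is only a sketch: it assumes a machine is always in one of two states, idle or full load, and the function name is made up for illustration.

```python
def break_even_load_fraction(idle_a: float, load_a: float,
                             idle_b: float, load_b: float) -> float:
    """Fraction of time under load at which systems A and B average equal power.

    Assumes A draws less at idle and more under load than B, and that
    only two states (idle, load) exist. Solves
        f*load_a + (1-f)*idle_a == f*load_b + (1-f)*idle_b
    for f.
    """
    idle_gap = idle_b - idle_a  # B's extra draw at idle
    load_gap = load_a - load_b  # A's extra draw under load
    if idle_gap + load_gap == 0:
        raise ValueError("systems draw identical power in both states")
    return idle_gap / (idle_gap + load_gap)


if __name__ == "__main__":
    # Total system power in watts, as quoted in the thread.
    gtx275_idle, gtx275_load = 143, 279
    hd4890_idle, hd4890_load = 172, 276
    f = break_even_load_fraction(gtx275_idle, gtx275_load,
                                 hd4890_idle, hd4890_load)
    print(f"Break-even load share: {f:.1%}")
```

With the quoted numbers the idle gap is 29 W and the load gap is 3 W, so the two systems average the same power only when about 91% of the time is spent under load.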

7Enigma - Thursday, April 2, 2009 - link

Can anyone confirm whether or not the heatsink/fan has been altered between the 4870 and the 4890? I'm interested to know if the decreased temps of the higher clocked 4890 are due in part to a better cooling mechanism, or strictly from a respin/binning.

Warren21 - Thursday, April 2, 2009 - link

Yes, the cooler has been slightly revised. I believe it's a combination of both. I'll admit I'm a bit disappointed AT didn't explore the differences between the HD 4870 and the 4890 more in-depth.

Comparison:

http://www.hardwarecanucks.com/forum/hardware-canu...

bill3 - Thursday, April 2, 2009 - link

"It looks like NVIDIA might be the marginal leader at this new price point of $250." you wrote

But looking at your own benches..

Since you run 3 resolutions of your benches, let's reasonably declare that the card that can win 2 or more of them "wins" that game. In that case 4890 wins over 275 in: COD WaW, Warhead, Fallout 3, Far Cry 2, GRID, and Left 4 Dead. 275 wins over 4890 in Age of Conan. Either with AA or without the results stay the same.

The only way I think you can contend 275 has an edge is if you place a premium on the 2560X1600 results, where it seems to edge out the 4890 more often. However, it's often at unplayable framerates. Further I don't see a reason to place undue importance on the 2560X benches, the majority of people still game on 1680X1050 monitors, and as you yourself noted, Nvidia released a new driver that trades off performance at low res for high res, which I think is arguably neither here nor there, not a clear advantage at all.

Even at 2560 (using the AA bar graphs because it's often difficult to spot the winner at 2560 on the line graphs), where the 275 wins 5 and loses 2, the margins are often so ridiculously close it's essentially a tie. 275 takes AOC, COD WaW, and L4D by a reasonable margin at the highest res, while the 4890 wins Fallout3 and GRID comfortably. Warhead and Far Cry 2 are within .7 FPS although nominally wins for 275. That's a difference of all of 3-2 in materially relevant wins, or exactly 1 game. But keep in mind again that 4890 is fairly clearly winning the lower reses more often, and to me it's wrong to state 275 has the edge.

SiliconDoc - Monday, April 6, 2009 - link

The funny thing is, if you're in those games and constantly looking at your 5-10 fps difference at 50-60-100-200 fps - there's definitely something wrong with you.

I find reviews that show LOWEST framerate during game when it's a very high resolution and a demanding game useful - usually more useful when the playable rate is hovering around 30 or below 50 (and dips a ways below 30).

Otherwise, you'd have to be an IDIOT to base your decision on the very often, way over playable framerates in the near equally matched cards. WE HAVE A LOT OF IDIOTS HERE.

Then comes the next conclusion, or the follow on. Since framerates are at playable, and are within 10% at the top end, the things that really matter are : game drivers / stability , profiles , clarity, added features, added box contents (a free game one wants perhaps).

Almost ALWAYS, Nvidia wins that - with the very things this site continues to claim simply do not matter, and should not matter - to ANYONE they claim - in effect.

I think it's one big fat lie, and they most certainly SHOULD know it.

Note now, that NVidia - having released their, according to this site, high resolution driver tweak for 2560xX , wins at that resolution, the review calmly states it doesn't matter much, most people don't play at that resolution - and recommend ati now instead.

Whereas just prior, for MONTHS on end, when ati won only the top resolution, and NVidia took the others, this same site could not stop ranting and raving that ATI won it all and was the only buy that made sense.

It's REALLY SICK.

I pointed out their 30" monitor for ATI bias months ago, and they continued with it - but now they agree with me - when ATI loses at that rezz... LOL

Yeah, they're shysters. Ain't no doubt about it.

Others notice as well - and are saying things now.

I see Jarred is their damage control agent.

JonnyDough - Thursday, April 2, 2009 - link

Why not just use RivaTuner or ATI Tool to underclock OC'd cards?

Jamahl - Thursday, April 2, 2009 - link

How can the conclusion be that the 275 is the leader at the price point? The benchmarks are clearly in favour of the 4890 apart from the extreme end 2560x1600.