AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in: GPUs

DirectX11 Redux

With the launch of the 5800 series, AMD is quite proud of the position they’re in. They have a DX11 card launching a month before DX11 is dropped on to consumers in the form of Win7, and the slower timing of NVIDIA means that AMD has had silicon ready far sooner. This puts AMD in the position of Cypress being the de facto hardware implementation of DX11, a situation that is helpful for the company in the long term as game development will need to begin on solely their hardware (and programmed against AMD’s advantages and quirks) until such a time that NVIDIA’s hardware is ready. This is not a position that AMD has enjoyed since 2002 with the Radeon 9700 and DirectX 9.0, as DirectX 10 was anchored by NVIDIA due in large part to AMD’s late hardware.

As we have already covered DirectX 11 in-depth with our first look at the standard nearly a year ago, this is going to be a recap of what DX11 is bringing to the table. If you’d like to get the entire inside story, please see our in-depth DirectX 11 article.

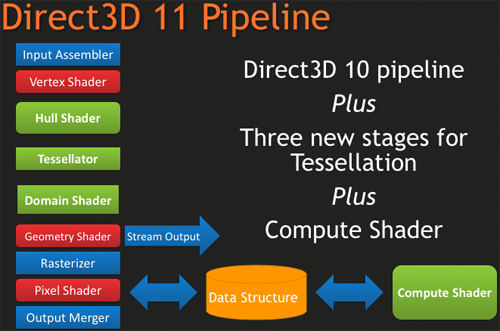

DirectX 11, as we have previously mentioned, is a pure superset of DirectX 10. Rather than being the massive overhaul of DirectX that DX10 was compared to DX9, DX11 builds off of DX10 without throwing away the old ways. The result of this is easy to see in the hardware of the 5870: as features were added to the Direct3D pipeline, they were added to the RV770 pipeline in its transformation into Cypress.

New to the Direct3D pipeline for DirectX 11 is the tessellation system, which is divided up into three parts, and the Compute Shader. Starting at the very top of the tessellation stack, we have the Hull Shader. The Hull Shader is responsible for taking in patches and control points (tessellation directions), to prepare a piece of geometry to be tessellated.

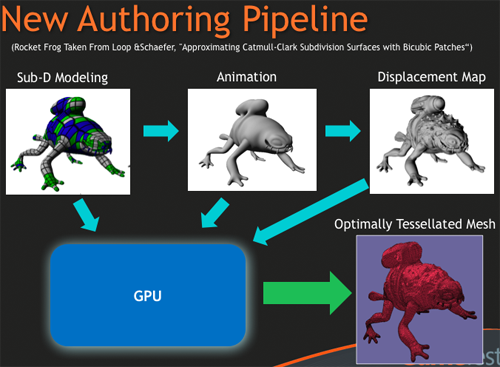

Next up is the tessellator proper, which is a rather significant piece of fixed function hardware. The tessellator’s sole job is to take geometry and to break it up into more complex portions, in effect creating additional geometric detail from where there was none. As setting up geometry at the start of the graphics pipeline is comparatively expensive, this is a very cool hack to get more geometric detail out of an object without the need to fully deal with what amounts to “eye candy” polygons.

As the tessellator is not programmable, it simply tessellates whatever it is fed. This is what makes the Hull Shader so important, as it serves as the programmable input side of the tessellator.

Once the tessellator is done, it hands its work off to the Domain Shader, and the Hull Shader hands its original inputs to the Domain Shader as well. The Domain Shader is responsible for any further manipulations of the tessellated data that need to be made, such as applying displacement maps, before passing it along to other parts of the GPU.
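To make the division of labor concrete, here is a toy CPU-side model of the three stages in Python. It is only a sketch under heavy assumptions: the patch is a straight line segment with two control points, the tessellation factor is a plain integer, and the function names (`hull_shader`, `tessellator`, `domain_shader`, `bump`) are ours for illustration rather than anything from Direct3D or AMD's hardware.

```python
import math

def hull_shader(control_points, tess_factor):
    """Programmable input stage: pass the patch along and decide how finely to split it."""
    return {"control_points": control_points, "tess_factor": tess_factor}

def tessellator(tess_factor):
    """Fixed-function stage: emit evenly spaced parametric coordinates in [0, 1].

    It knows nothing about the patch itself, only the requested factor.
    """
    return [i / tess_factor for i in range(tess_factor + 1)]

def domain_shader(patch, u, displacement):
    """Programmable output stage: turn a parametric coordinate into a displaced position."""
    (x0, y0), (x1, y1) = patch["control_points"]
    x = x0 + (x1 - x0) * u
    y = y0 + (y1 - y0) * u
    return (x, y + displacement(u))

def tessellate(control_points, tess_factor, displacement):
    """Run the three stages in order: hull shader, tessellator, domain shader."""
    patch = hull_shader(control_points, tess_factor)
    return [domain_shader(patch, u, displacement)
            for u in tessellator(patch["tess_factor"])]

def bump(u):
    """Hypothetical stand-in for a displacement map lookup."""
    return 0.25 * math.sin(math.pi * u)

# Two control points go in, five displaced vertices come out.
vertices = tessellate([(0.0, 0.0), (4.0, 0.0)], tess_factor=4, displacement=bump)
```

The only stage that creates new points is the fixed-function one in the middle, and it never sees the geometry; everything specific to the object lives in the two programmable stages on either side of it.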

The tessellator is very much AMD’s baby in DX11. They’ve been playing with tessellators since as early as 2001, only for them to never gain traction on the PC. The tessellator has seen use in the Xbox 360 where the AMD-designed Xenos GPU has one (albeit much simpler than DX11’s), but when that same tessellator was brought over and put in the R600 and successive hardware, it was never used since it was not a part of the DirectX standard. Now that tessellation is finally part of that standard, we should expect to see it picked up and used by a large number of developers. For AMD, it’s vindication for all the work they’ve put into tessellation over the years.

The other big addition to the Direct3D pipeline is the Compute Shader, which allows programs to access the hardware of a GPU and treat it like a regular data processor rather than a graphical rendering processor. The Compute Shader is open for use by games and non-games alike, although when it’s used outside of the Direct3D pipeline it’s usually referred to as DirectCompute rather than the Compute Shader.

For its use in games, the big thing AMD is pushing right now is Order Independent Transparency, which uses the Compute Shader to sort transparent textures in a single pass so that they are rendered in the correct order. This isn’t something that was previously impossible using other methods (e.g. pixel shaders), but using the Compute Shader is much faster.
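The idea behind order independence can be shown with a small Python sketch. This is a CPU analogy rather than AMD's Compute Shader implementation: we assume each pixel collects a list of `(depth, color, alpha)` fragments, with larger depth meaning farther away, and the function names are hypothetical.

```python
def blend_over(dst, src_color, src_alpha):
    """Standard 'over' blend of one fragment onto the color already in the pixel."""
    return tuple(src_alpha * s + (1.0 - src_alpha) * d
                 for s, d in zip(src_color, dst))

def resolve_naive(fragments, background):
    """Blend fragments in whatever order they were submitted."""
    color = background
    for _depth, frag_color, alpha in fragments:
        color = blend_over(color, frag_color, alpha)
    return color

def resolve_sorted(fragments, background):
    """Sort a pixel's fragments far-to-near by depth, then blend in that order."""
    ordered = sorted(fragments, key=lambda frag: frag[0], reverse=True)
    return resolve_naive(ordered, background)

black = (0.0, 0.0, 0.0)
red_near = (0.2, (1.0, 0.0, 0.0), 0.5)
blue_far = (0.8, (0.0, 0.0, 1.0), 0.5)

# The sorted resolve gives the same answer for either submission order.
a = resolve_sorted([red_near, blue_far], black)
b = resolve_sorted([blue_far, red_near], black)
# The naive resolve does not.
c = resolve_naive([red_near, blue_far], black)
```

With the sort in place the application no longer has to submit transparent geometry back to front; the cost moves to the per-pixel sort, which is the part the Compute Shader is said to speed up.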

Other features finding their way into Direct3D include some significant changes for textures, in the name of improving image quality. Texture sizes are being bumped up to 16K x 16K (that’s a 256MP texture) which for all practical purposes means that textures can be of an unlimited size given that you’ll run out of video memory before being able to utilize such a large texture.
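The arithmetic behind that claim is quick to check in Python. The 4 bytes per texel figure is our assumption (uncompressed 8-bit RGBA); other formats will scale the result accordingly.

```python
SIDE = 16 * 1024                  # "16K" texels along each edge
texels = SIDE * SIDE              # total texels in a 16K x 16K texture
megapixels = texels / 2**20       # "256MP" uses the binary mega (2**20)

BYTES_PER_TEXEL = 4               # assumption: uncompressed 8-bit RGBA
size_gib = texels * BYTES_PER_TEXEL / 2**30

print(f"{texels:,} texels = {megapixels:.0f} MP, {size_gib:.0f} GiB uncompressed")
```

Under that assumption a single maximum-size texture comes to 1 GiB before mipmaps, which is why the limit is effectively academic.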

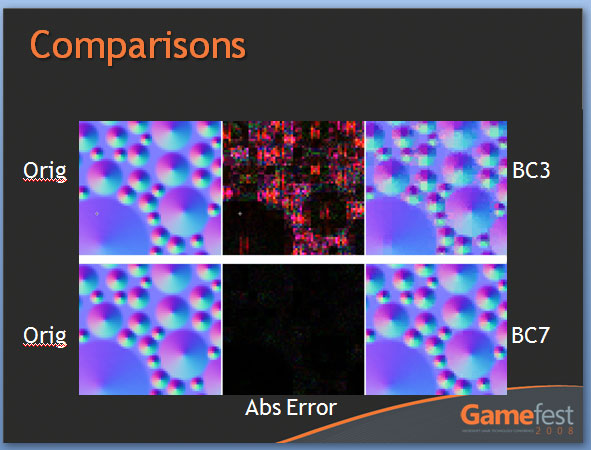

The other change to textures is the addition of two new texture compression schemes, BC6H and BC7. These new texture compression schemes are another one of AMD’s pet projects, as AMD developed them and pushed for their inclusion in DX11. BC6H is the first texture compression method dedicated to compressing HDR textures, which previously compressed very poorly using even less-lossy schemes like BC3/DXT5. It can compress textures at a lossy 6:1 ratio. Meanwhile BC7 is for use with regular textures, and is billed as a replacement for BC3/DXT5. It has the same 3:1 compression ratio for RGB textures.
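Both ratios fall out of the block size. BC3, BC6H and BC7 all store a 4x4 block of texels in 128 bits; the source formats below (16-bit half-float RGB for HDR, 8-bit RGB for regular textures) are our assumptions about what the quoted ratios are measured against.

```python
BLOCK_BITS = 128            # BC3, BC6H and BC7 all use 128-bit blocks
TEXELS_PER_BLOCK = 4 * 4    # each block covers a 4x4 tile of texels

def compressed_bits_per_texel():
    """Bits per texel after block compression."""
    return BLOCK_BITS / TEXELS_PER_BLOCK

def compression_ratio(source_bits_per_texel):
    """Uncompressed size divided by compressed size."""
    return source_bits_per_texel / compressed_bits_per_texel()

hdr_rgb_half_float = 3 * 16   # assumption: three 16-bit float channels
ldr_rgb_8bit = 3 * 8          # assumption: three 8-bit channels

bc6h_ratio = compression_ratio(hdr_rgb_half_float)
bc7_ratio = compression_ratio(ldr_rgb_8bit)
```

Since BC7 occupies exactly the same space as BC3, any benefit it brings has to show up as image quality rather than memory savings.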

We’re actually rather excited about these new texture compression schemes, as better ways to compress textures lead directly to better texture quality. Compressing HDR textures allows for larger/better textures due to the space saved, and using BC7 in place of BC3 is an outright quality improvement in the same amount of space, given an appropriate texture. Better compression and tessellation stand to be the biggest contributors towards improving the base image quality of games by leading to better textures and better geometry.

We had been hoping to supply some examples of these new texture compression methods in action with real textures, but we have not been able to secure the necessary samples in time. In the meantime we have Microsoft’s examples from GameFest 2008, which drive the point home well enough in spite of being synthetic.

Moving beyond the Direct3D pipeline, the next big feature coming in DirectX 11 is better support for multithreading. By allowing multiple threads to simultaneously create resources, manage states, and issue draw commands, it will no longer be necessary to have a single thread do all of this heavy lifting. As this is an optimization focused on better utilizing the CPU, it follows that graphics performance in GPU-limited situations stands to gain little. Rather this is going to help the CPU in CPU-limited situations better utilize the graphics hardware. Technically this feature does not require DX11 hardware support (it’s a high-level construct available for use with DX10/10.1 cards too) but it’s still a significant technology being introduced with DX11.
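The shape of that change can be sketched in Python as worker threads that each record the commands for one slice of the scene, with a single point that submits the recorded lists in order. This is a structural analogy only: the command strings and function names are made up for illustration, and Python threads will not show the actual CPU speedup a native engine would see.

```python
from concurrent.futures import ThreadPoolExecutor

def record_commands(chunk):
    """Runs on a worker thread: build the command list for one slice of the scene."""
    name, objects = chunk
    commands = [f"set_state({name})"]
    commands += [f"draw({obj})" for obj in objects]
    return commands

def build_frame(chunks, workers=4):
    """Record every chunk in parallel, then submit the lists in their original order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in input order regardless of which thread finishes first.
        command_lists = list(pool.map(record_commands, chunks))
    frame = []
    for commands in command_lists:    # single submission point
        frame.extend(commands)
    return frame

frame = build_frame([("terrain", ["hill", "lake"]), ("actors", ["hero"])])
```

The recording work is spread across cores while the final submission order stays deterministic, which is why the gain shows up in CPU-limited situations rather than GPU-limited ones.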

Last but not least, DX11 is bringing with it High Level Shader Language 5.0, which in turn is bringing several new instructions that are primarily focused on speeding up common tasks, and some new features that make it more C-like. Classes and interfaces will make an appearance here, which will make shader code development easier by allowing for easier segmentation of code. This will go hand-in-hand with dynamic shader linkage, which helps to clean up code by only linking in shader code suitable for the target device, taking the management of that task out of the hands of the coder.

327 Comments


RubberJohnny - Thursday, September 24, 2009 - link

Well silicondoc you sure have some hatred for ATI/love for nvidia. It's almost as if you work for the green team...

You seem to have all this time on your hands to go around the net looking for links to spread FUD...sitting on new egg watching these cards come in and out of stock like you have a vested interest in seeing ATI fail...unlike any sane person it appears you want nvidia to have a monopoly on the industry?

Maybe you are privy to some inside info over at nvidia and know they have nothing to counter the 5870 with?

Maybe the cash they paid you to spin these BS comments would have been better spent on R&D?

SiliconDoc - Thursday, September 24, 2009 - link

That's a nice personal, grating, insulting ripppp, it's almost funny, too.

---

The real problems remain.

I bring up this stuff because of course, no one else will, it is almost forbidden. Telling the truth shouldn't be that hard, and calling it fairly and honestly should not be such a burden.

I will gladly take correction when one of you noticing insulters has any to offer. Of course, that never comes.

Break some new ground, won't you ?

I don't think you will, nor do I think anyone else will - once again, that simply confirms my factual points.

I guess I'll give you a point for complaining about delivery, if that's what you were doing, but frankly, there are a lot of complainers here no different - let's take for instance the ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275 article here.

http://www.anandtech.com/video/showdoc.aspx?i=3539

Boy, the red fans went into rip mode, and Anand came in and changed the articles (Derek's) words and hence "result", from GTX275 wins to ATI4890 wins.

--

No, it's not just me, it's just the bias here consistently leans to ati, and whether it's rooting for the underdog that causes it, or the brooding undercurrent hatred that surfaces for "the bigshot" "greedy" "ripoff artist" "nvidia overchargers" "industry controlling and bribing" "profit demon" Nvidia, who knows...

I'm just not afraid to point it out, since it's so sickening, yes, probably just to me, "I'm sure".

How about this glaring one I have never pointed out even to this day, but will now:

ATI is ALWAYS listed first, or "on top" - and of course, NVIDIA, second, and it is no doubt, in the "reviewer's minds" because of "the alphabet", and "here we go in alphabetical order".

A very, very convenient excuse, that quite easily causes a perception bias, that is quite marked for the readers.

But, that's ok.

---

So, you want to tell me why I shouldn't laugh out loud when ATI uses NVIDIA cards to develop their "PhysX" competition Bullet ?

ROFLMAO

I have heard 100 times here (from guess whom) that the ati has the wanted "new technology", so will that same refrain come when NVIDIA introduces their never before done MIMD capable cores in a few months ? LOL

I can hardly wait to see the "new technology" wannabes proclaiming their switched fealty.

Gee sorry for noticing such things, I guess I should be a mind numbed zombie babbling along with the PC required fanning for ati ?

silverblue - Thursday, September 24, 2009 - link

No; if he did work for nVidia, he'd be far better informed and far less prone to using the phrase "red rooster" every five seconds.

crackshot91 - Wednesday, September 23, 2009 - link

Any possibility of benchmarks with a core 2 duo? I wanna know if it will be necessary to upgrade to an i5 or i7 (All new mobo) to see big performance gains over my 8800GT. Will a C2D E6750 @ 3.2GHz bottleneck it?

Ryan Smith - Wednesday, September 23, 2009 - link

Our recent Core i7 860 article should do an adequate job of answering that question. Several of the benchmarks were taken right out of this article.

therealnickdanger - Wednesday, September 23, 2009 - link

You dedicated a full page to the flawless performance of its A/V output, but didn't mention it in the "features" part of the conclusion. It's a very powerful feature, IMO. Granted, this card may be a tad too hot and loud to find a home in a lot of HTPCs, but it's still an awesome feature and you should probably append your conclusion... just a suggestion though.

Ultimately, I have to admit to being a little disappointed by the performance of this card. All the Eyefinity hype and playable framerates at massive 7000x3000 resolutions led me to believe that this single card would scale down and simply dominate everything at the 30" level and below. It just seems logical, so I was taken aback when it was beat by, well, anything else. I expected the 5870 and 5870CF to be at the top of every chart. Oh well.

Awesome article though! I'm sure there's a 5850 in my future!

MrMom - Wednesday, September 23, 2009 - link

Does anyone have a good explanation why the massive HD5870 is still slower/@par with the GTX295?

Thanks

SiliconDoc - Thursday, September 24, 2009 - link

Yes, because the ati core "really sucks". It needs DDR5, and much higher MHZ to compete with Nvidia, and their what, over 1 year old core. LOL Even their own 4870x2.

Or the 3 year old G92 vs the ddr3 "4850" the "topcore" before yesterday. (the ati topcore minus the well done 3m mhz+ REBRAND ring around the 4890)

That's the sad, actual truth. That's the truth many cannot bear to bring themselves to realize, and it's going to get WORSE for them very soon, with nvidia's next release, with ddr5, a 512 bit bus, and the NEW TECHNOLOGY BY NVIDIA THAT ATI DOES NOT HAVE MIMD capable cores.

Oh, I can hardly wait, but you bet I'm going to wait, you can count on that 100%.

Spoelie - Thursday, September 24, 2009 - link

because those are 2 480mm² dies, while this is only 1 360mm² die?

Griswold - Wednesday, September 23, 2009 - link

It's one GPU instead of two, maybe?