AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

More GDDR5 Technologies: Memory Error Detection & Temperature Compensation

As we previously mentioned, for Cypress AMD’s memory controllers have implemented a greater part of the GDDR5 specification. Beyond gaining the ability to use GDDR5’s power saving abilities, AMD has also been working on implementing features to allow their cards to reach higher memory clock speeds. Chief among these is support for GDDR5’s error detection capabilities.

One of the biggest problems in using a high-speed memory device like GDDR5 is that it requires a bus that’s both fast and fairly wide - properties that generally run counter to each other in designing a device bus. A single GDDR5 memory chip on the 5870 needs to connect to a bus that’s 32 bits wide and runs at a base speed of 1.2GHz, which requires a bus that can meet exceedingly precise tolerances. Adding to the challenge is that for a card like the 5870 with a 256-bit total memory bus, eight of these buses will be required, leading to more noise from adjoining buses and less room to work in.
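To put numbers on the bus described above, here is a quick back-of-the-envelope sketch. The bus widths and the 1.2GHz base clock come from this article; the factor of four data transfers per pin per base clock cycle is our assumption about how GDDR5 signals, and the function names are purely illustrative.

```python
# Figures from the article: 32-bit interface per chip, 256-bit total bus,
# 1.2GHz base memory clock.
CHIP_BUS_WIDTH_BITS = 32
TOTAL_BUS_WIDTH_BITS = 256
BASE_CLOCK_GHZ = 1.2

# Assumption (not stated in the article): GDDR5 moves 4 data bits per pin
# per base clock cycle.
TRANSFERS_PER_CLOCK = 4


def chip_count(total_bits, chip_bits):
    """Number of per-chip buses needed to fill the total memory bus."""
    return total_bits // chip_bits


def peak_bandwidth_gbs(clock_ghz, bus_bits, transfers_per_clock=TRANSFERS_PER_CLOCK):
    """Theoretical peak bandwidth in GB/s, ignoring any retransmissions."""
    per_pin_gbps = clock_ghz * transfers_per_clock
    return per_pin_gbps * bus_bits / 8  # 8 bits per byte


print(chip_count(TOTAL_BUS_WIDTH_BITS, CHIP_BUS_WIDTH_BITS))  # 8
print(round(peak_bandwidth_gbs(BASE_CLOCK_GHZ, TOTAL_BUS_WIDTH_BITS), 1))  # 153.6
```

Under those assumptions, every one of the eight 32-bit buses has to sustain several gigabits per second on each pin, which is why the tolerances are so tight.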

Because of the difficulty in building such a bus, the memory bus has become the weak point for video cards using GDDR5. The GPU’s memory controller can do more and the memory chips themselves can do more, but the bus can’t keep up.

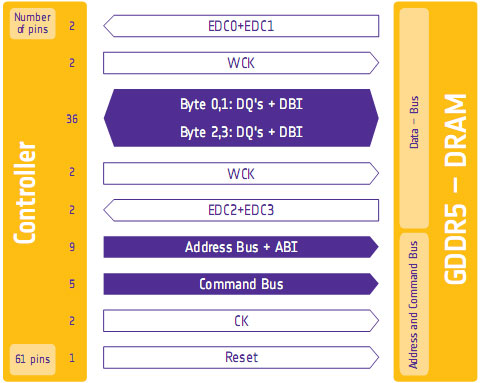

To combat this, GDDR5 memory controllers can perform basic error detection on both reads and writes by implementing a CRC-8 hash function. With this feature enabled, for each 64-bit data burst an 8-bit cyclic redundancy check hash (CRC-8) is transmitted via a set of four dedicated EDC pins. This CRC is then used to check the contents of the data burst, to determine whether any errors were introduced into the data burst during transmission.
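As a rough illustration of the check described above, the sketch below computes an 8-bit CRC over one 64-bit burst and compares it against the value that travelled alongside the data. We assume the polynomial x^8 + x^2 + x + 1 (0x07) and a zero initial value; the bit ordering, lane mapping and EDC pin signalling of real hardware are not modelled, and the function names are ours.

```python
CRC8_POLY = 0x07  # x^8 + x^2 + x + 1; assumed here for illustration


def crc8(data: bytes, poly: int = CRC8_POLY) -> int:
    """Bitwise CRC-8, most significant bit first, initial value 0."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ poly) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def burst_is_intact(burst: bytes, received_crc: int) -> bool:
    """Recompute the CRC on the receiving end and compare it to the one sent."""
    return crc8(burst) == received_crc


burst = bytes.fromhex("0123456789abcdef")         # one 64-bit burst (8 bytes)
sent_crc = crc8(burst)                            # would travel on the EDC pins
corrupted = bytes([burst[0] ^ 0x10]) + burst[1:]  # one bit flipped in transit

print(burst_is_intact(burst, sent_crc))      # True
print(burst_is_intact(corrupted, sent_crc))  # False
```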

The specific CRC function used in GDDR5 can detect 1-bit and 2-bit errors with 100% accuracy, with that accuracy falling with additional erroneous bits. This is due to the fact that the CRC function used can generate collisions, which means that the CRC of an erroneous data burst could match the proper CRC in an unlikely situation. But as the odds decrease for additional errors, the vast majority of errors should be limited to 1-bit and 2-bit errors.
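The detection guarantees and the collision caveat can both be checked by brute force on a toy model. The sketch below again assumes the polynomial x^8 + x^2 + x + 1 with a zero initial value, and only considers errors in the 64 data bits (the CRC itself is assumed to arrive intact). Under those assumptions the CRC is linear, so an error pattern goes unnoticed exactly when its own CRC is zero.

```python
from itertools import combinations

CRC8_POLY = 0x07  # x^8 + x^2 + x + 1; assumed here for illustration
BURST_BITS = 64


def crc8(data: bytes, poly: int = CRC8_POLY) -> int:
    """Bitwise CRC-8, most significant bit first, initial value 0."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ poly) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def error_pattern(bit_positions) -> bytes:
    """Build a 64-bit burst-sized mask with the given bits set (bit 0 = last bit sent)."""
    value = 0
    for pos in bit_positions:
        value |= 1 << pos
    return value.to_bytes(BURST_BITS // 8, "big")


def undetected(bit_positions) -> bool:
    """With a zero initial value, crc(data ^ e) == crc(data) ^ crc(e),
    so the error mask e slips through exactly when crc(e) == 0."""
    return crc8(error_pattern(bit_positions)) == 0


single_misses = sum(undetected((i,)) for i in range(BURST_BITS))
double_misses = sum(undetected(pair) for pair in combinations(range(BURST_BITS), 2))
print(single_misses, double_misses)  # 0 0

# A 4-bit error whose shape matches the polynomial itself (bits 8, 2, 1, 0)
# produces a CRC of zero, i.e. a collision that would go unnoticed.
print(undetected((8, 2, 1, 0)))  # True
```

In this model every 1-bit and 2-bit error is caught, while a carefully shaped 4-bit error is not, which matches the behavior described above.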

Should an error be found, the GDDR5 controller will request a retransmission of the faulty data burst, and it will keep doing this until the data burst finally goes through correctly. A retransmission request is also used to re-train the GDDR5 link (once again taking advantage of fast link re-training) to correct any potential link problems brought about by changing environmental conditions. Note that this does not involve changing the clock speed of the GDDR5 (i.e. it does not step down in speed); rather it’s merely reinitializing the link. If the errors are due to the bus being outright unable to perfectly handle the requested clock speed, errors will continue to happen and be caught. Keep this in mind as it will be important when we get to overclocking.
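The retry behavior can be sketched as a simple loop. Everything here is a toy model rather than AMD's implementation: `NoisyBus`, its fixed per-bit error rate, and the assumption that the CRC itself always arrives intact are ours, and the CRC polynomial (0x07) is assumed for illustration.

```python
import random

CRC8_POLY = 0x07  # x^8 + x^2 + x + 1; assumed here for illustration


def crc8(data: bytes, poly: int = CRC8_POLY) -> int:
    """Bitwise CRC-8, most significant bit first, initial value 0."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            crc = ((crc << 1) ^ poly) & 0xFF if crc & 0x80 else (crc << 1) & 0xFF
    return crc


class NoisyBus:
    """Hypothetical bus that flips each data bit with a fixed probability."""

    def __init__(self, bit_error_rate: float, seed: int = 0):
        self.bit_error_rate = bit_error_rate
        self.rng = random.Random(seed)
        self.retrain_count = 0

    def transfer(self, burst: bytes) -> bytes:
        out = bytearray(burst)
        for i in range(len(out) * 8):
            if self.rng.random() < self.bit_error_rate:
                out[i // 8] ^= 1 << (i % 8)
        return bytes(out)

    def retrain(self) -> None:
        # Re-initialise the link at the SAME clock speed; nothing is slowed down.
        self.retrain_count += 1


def read_burst(bus: NoisyBus, stored: bytes):
    """Keep requesting the burst until its CRC checks out."""
    expected_crc = crc8(stored)  # computed by the sender, assumed to arrive intact
    attempts = 0
    while True:
        attempts += 1
        received = bus.transfer(stored)
        if crc8(received) == expected_crc:
            return received, attempts
        bus.retrain()  # a failed check also triggers a link re-train


bus = NoisyBus(bit_error_rate=0.02, seed=1)
data, attempts = read_burst(bus, bytes.fromhex("0123456789abcdef"))
print(attempts, bus.retrain_count)
```

Note that the loop has no exit other than success: if the error rate is high because the clock is simply too fast for the bus, the re-trains do not help and the retries pile up.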

Finally, we should also note that this error detection scheme is only for detecting bus errors. Errors in the GDDR5 memory modules or errors in the memory controller will not be detected, so it’s still possible to end up with bad data should either of those two devices malfunction. By the same token this is solely a detection scheme, so there are no error correction abilities. The only way to correct a transmission error is to keep trying until the bus gets it right.

Now in spite of the difficulties in building and operating such a high speed bus, error detection is not necessary for its operation. As AMD was quick to point out to us, cards still need to ship defect-free and not produce any errors. Or in other words, the error detection mechanism is a failsafe mechanism rather than a tool specifically to attain higher memory speeds. Memory supplier Qimonda’s own whitepaper on GDDR5 pitches error correction as a necessary precaution due to the increasing amount of code stored in graphics memory, where a failure can lead to a crash rather than just a bad pixel.

In any case, for normal use the ramifications of using GDDR5’s error detection capabilities should be non-existent. In practice, this is going to lead to more stable cards since memory bus errors have been eliminated, but we don’t know to what degree. The full use of the system to retransmit a data burst would itself be a catch-22 after all – it means an error has occurred when it shouldn’t have.

Like the changes to VRM monitoring, the significant ramifications of this will be felt with overclocking. Overclocking attempts that previously would push the bus too hard and lead to errors now will no longer do so, making higher overclocks possible. However this is a bit of an illusion as retransmissions reduce performance. The scenario laid out to us by AMD is that overclockers who have reached the limits of their card’s memory bus will now see the impact of this as a drop in performance due to retransmissions, rather than crashing or graphical corruption. This means assessing an overclock will require monitoring the performance of a card, along with continuing to look for traditional signs as those will still indicate problems in memory chips and the memory controller itself.
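To see why a higher memory clock can end up slower, consider a simplified model in which each burst fails its CRC check with some probability and is resent until it passes. The error rates and bandwidth figures below are hypothetical, chosen only to illustrate the effect, and the model ignores the additional cost of re-training the link.

```python
def effective_bandwidth(raw_bandwidth: float, burst_error_rate: float) -> float:
    """Useful bandwidth when every failed burst is resent until it passes.

    A burst that fails with probability p needs 1 / (1 - p) transmissions on
    average, so only a fraction (1 - p) of the raw bandwidth carries new data.
    """
    if not 0.0 <= burst_error_rate < 1.0:
        raise ValueError("burst_error_rate must be in [0, 1)")
    return raw_bandwidth * (1.0 - burst_error_rate)


# Hypothetical example: stock clocks with an error-free bus versus a 10%
# memory overclock that pushes the bus into a 15% burst error rate.
stock = effective_bandwidth(100.0, 0.0)
overclocked = effective_bandwidth(110.0, 0.15)

print(stock)                  # 100.0
print(round(overclocked, 1))  # 93.5
print(overclocked < stock)    # True
```

In this example the overclocked card is stable but slower than stock, which is exactly the kind of result that only shows up when performance is measured.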

Ideally there would be a more absolute and expedient way to check for errors than looking at overall performance, but at this time AMD doesn’t have a way to deliver error notices. Maybe in the future they will?

Wrapping things up, we have previously discussed fast link re-training as a tool to allow AMD to clock down GDDR5 during idle periods, and as part of a failsafe method to be used with error detection. However it also serves as a tool to enable higher memory speeds through its use in temperature compensation.

Once again due to the high speeds of GDDR5, it’s more sensitive to memory chip temperatures than previous memory technologies were. Under normal circumstances this sensitivity would limit memory speeds, as temperature swings would change the performance of the memory chips enough to make it difficult to maintain a stable link with the memory controller. By monitoring the temperature of the chips and re-training the link when there are significant shifts in temperature, higher memory speeds are made possible by preventing link failures.
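The monitoring logic amounts to re-training whenever the temperature has drifted far enough from where the link was last trained. The sketch below is our own illustration: the 10°C threshold, the class name and the sample readings are all hypothetical, as AMD has not published the actual trigger conditions.

```python
RETRAIN_THRESHOLD_C = 10.0  # hypothetical threshold, not an AMD figure


class LinkMonitor:
    """Tracks the temperature at which the link was last trained."""

    def __init__(self, initial_temp_c: float, threshold_c: float = RETRAIN_THRESHOLD_C):
        self.trained_at_c = initial_temp_c
        self.threshold_c = threshold_c
        self.retrains = 0

    def on_temperature_sample(self, temp_c: float) -> bool:
        """Re-train if the temperature has shifted significantly; return True if so."""
        if abs(temp_c - self.trained_at_c) >= self.threshold_c:
            self.trained_at_c = temp_c  # link is now calibrated for this temperature
            self.retrains += 1
            return True
        return False


monitor = LinkMonitor(initial_temp_c=40.0)
samples = [42.0, 47.0, 51.0, 55.0, 63.0, 60.0, 48.0]
events = [monitor.on_temperature_sample(t) for t in samples]

print(events)            # [False, False, True, False, True, False, True]
print(monitor.retrains)  # 3
```

Measuring drift against the last training point, rather than against the previous sample, means a slow warm-up still triggers a re-train once the accumulated change is large enough.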

And while temperature compensation may not sound complex, that doesn’t mean it’s not important. As we have mentioned a few times now, the biggest bottleneck in memory performance is the bus. The memory chips can go faster; it’s the bus that can’t. So anything that can help maintain a link along these fragile buses becomes an important tool in achieving higher memory speeds.

327 Comments

Ryan Smith - Wednesday, September 23, 2009 - link

We do have Cyberlink's software, but as it uses different code paths, the results are near-useless for a hardware review. Any differences could be the result of hardware differences, or it could be that one of the code paths is better optimized. We would never be able to tell.

Our focus will always be on benchmarking the same software on all hardware products. This is why we bent over backwards to get something that can use DirectCompute, as it's a standard API that removes code paths/optimizations from the equation (in this case we didn't do much better since it was a NVIDIA tech demo, but it's still an improvement).

DukeN - Wednesday, September 23, 2009 - link

I have one of these and I know it outperforms the GTX 280 but not sure what it'd be like against one of these puppies.

dagamer34 - Wednesday, September 23, 2009 - link

I need my bitstream Dolby Digital TrueHD/DTS HD Master Audio bistreaming codecs!!! :)

ew915 - Wednesday, September 23, 2009 - link

I don't see this beating the GT300 as for so it should beat the GTX295 by a great margin.

tamalero - Wednesday, September 23, 2009 - link

dood, you forgot the 295 is a DUAL CHIP?

SiliconDoc - Wednesday, September 23, 2009 - link

roflmao - Gee no more screaming the 4850x2 and the 4870x2 are best without pointing out the two gpu's needed to get there.

--

Nonetheless, this 5870 is EPIC FAIL, no matter what - as we see the disappointing numbers - we all see them, and it's not good.

---

Problem is, Nvidia has the MIMD multiple instructions breakthrough technology never used before that according to reports is an AWESOME advantage, plus they are moving to DDR5 with a 512 bit bus !

--

So what is in the works is an absolute WHOMPING coming down on ati that BIG GREEN NVIDIA is going to deliver, and the poor numbers here from what was hoped for and hyped over (although even PREDICTED by the red fan Derek himself in one portion of one sorrowful and despressed sentence on this site) are just one step closer to that nail in the coffin...

--

Yes I sure hope ati has something major up it's sleeve, like 512 bit mem bus increased card coming, the 5870Xmem ...

I find the speculation that ATI "mispredicted" the bandwidth needs to be utter non-sense. They are 2-3 billion in the hole from the last few years with "all these great cards" they still lose $ on every single sale, so they either cannot go higher bit width, or they don't want to, or they are hiding it for the next "strike at NVidia" release.

erple2 - Friday, September 25, 2009 - link

So you're comparing this product with a not yet release product and saying that the not yet released product is going to trounce it, without any facts to back it up? Do you have the hardware? If not, then you're simply ranting.

Will the GT300 beat out the 5870? I dunno, probably. If it didn't, that would imply that the move from GT200 to GT300 was a major disappointment for NVidia.

I think that EPIC FAIL is completely ludicrous. I can see "epic fail" applied to the Geforce FX series when it came out. I can also see "epic fail" for the Radeon MAXX back in the day. But I don't see the 5870 as "epic fail". If you look at the card relative to the 4870 (the card it replaces), it's quite good - solid 30% increase. That's what I would expect from a generation improvement (that's what the gt200's did over the 9800's, and what the 8800 did over the 7900, etc).

BTW, I'm seeing the 5870 as pretty good - it beats out all single card NVidia by a reasonable and measureable amount. Sounds like ATI has done well. Or are you considering anything less than 2x the performance of the NVidia cards "epic fail"? In that case, you may be disappointed with the GT300, as well. In fact, I'll say that the GT300 is a total fail right now. I mean jeez! It scores ZERO FPS in every benchmark! That's super-epic fail. And I have the numbers to back that statement up.

Since you are making claims about the epic fail nature of the 5870 based on yet to be released hardware, I can certainly play the same game, and epic fail anything you say based on those speculative musings.

SiliconDoc - Monday, September 28, 2009 - link

Well the GT200 was 60.96% increase average. AT says so.

http://www.anandtech.com/video/showdoc.aspx?i=3334...

So, I guess ati lost this round terribly, as NVidia's last just beat them by more than double your 30%.

Great, EPIC FAIL is correct, I was right, and well...

Finally - Wednesday, September 23, 2009 - link

Team Green foames out of their mouthes. It's funny to watch.

SiliconDoc - Wednesday, September 23, 2009 - link

Glad you are having fun.

Just let me know when you disagree, and why. I'm certain your fun will be "gone then", since reality will finally take hold, and instead of you seeing foam, I'll be seeing drool.