AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in GPUs

AA Image Quality & Performance

With HL2 unsuitable for use in assessing image quality, we will be using Crysis: Warhead for the task. Parts of Warhead have a great deal of foliage, which creates an immense amount of aliasing and, along with the geometry of local objects, forms a good test of anti-aliasing quality. Look in particular at the leaves both to the left and through the windshield, along with aliasing along the frame, windows, and mirror of the vehicle. We’d also like to note that since AMD’s SSAA modes do not work in DX10, this testing is done in DX9 mode instead.

| AMD Radeon HD 5870 | AMD Radeon HD 4870 | NVIDIA GTX 280 |
|---|---|---|
| No AA | | |
| 2X MSAA | | |
| 4X MSAA | | |
| 8X MSAA | | |
| 2X MSAA + AAA | 2X MSAA + AAA | 2X MSAA + SSTr |
| 4X MSAA + AAA | 4X MSAA + AAA | 4X MSAA + SSTr |
| 8X MSAA + AAA | 8X MSAA + AAA | 8X MSAA + SSTr |
| 2X SSAA | | |
| 4X SSAA | | |
| 8X SSAA | | |

From an image quality perspective, very little has changed for AMD compared to the 4890. With MSAA and AAA modes enabled the quality is virtually identical. And while things are not identical when flipping between vendors (for whatever reason the sky brightness differs), the resulting image quality is still basically the same.

For AMD, the downside to this IQ test is that SSAA fails to break away from MSAA + AAA. We’ve previously established that SSAA is a superior (albeit brute force) method of anti-aliasing, but we have been unable to find any scene in any game that succinctly proves it. Shader aliasing should be the biggest difference, but in practice we can’t find any such aliasing in a DX9 game that would be obvious. Nor is Crysis Warhead benefitting from the extra texture sampling here.

From our testing, we’re left with the impression that MSAA + AAA (or MSAA + SSTr for NVIDIA) is just as good as SSAA for all practical purposes. Much as with the anisotropic filtering situation, we know through technological proof that there is a better method, but it just isn’t making a noticeable difference here. If nothing else this is good from a performance standpoint, as MSAA + AAA is not nearly as hard on performance as outright SSAA is. Perhaps SSAA is better suited for older games, particularly those locked at lower resolutions?

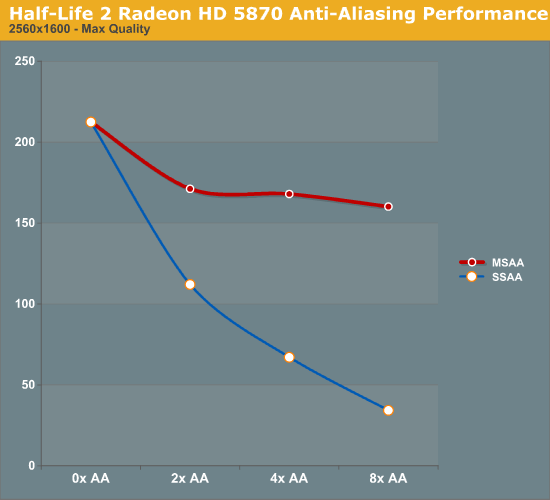

For our performance data, we have two cases. We will first look at HL2 on only the 5870, which we ran before realizing the quality problem with Source-engine games. We believe that the performance data is still correct in spite of the visual bug, and while we’re not going to use it as our only data, we will use it as an example of AA performance in an older title.

As a testament to the rendering power of the 5870, even at 2560x1600 and 8x SSAA, we still get a just-playable framerate on HL2. To put things in perspective, with 8x SSAA the game is being rendered at approximately 32MP, well over the size of even the largest possible single-card Eyefinity display.
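The 32MP figure follows from simple arithmetic, sketched below. The function name is ours, and the six-panel 2560x1600 Eyefinity layout used for comparison is an assumption made for illustration rather than a configuration stated above.

```python
# Sketch: effective rendered pixel count under supersampling.
# Assumes N-x SSAA shades N samples for every displayed pixel.

def effective_megapixels(width: int, height: int, ssaa_samples: int = 1) -> float:
    """Return the number of shaded samples per frame, in millions."""
    return width * height * ssaa_samples / 1_000_000

# The HL2 case from the text: 2560x1600 with 8x SSAA.
ssaa_load = effective_megapixels(2560, 1600, ssaa_samples=8)

# Assumed comparison point: six 2560x1600 panels in a 3x2 grid, no supersampling.
eyefinity_load = effective_megapixels(2560 * 3, 1600 * 2)

print(f"2560x1600 with 8x SSAA: {ssaa_load:.1f} MP")
print(f"Six 2560x1600 panels:   {eyefinity_load:.1f} MP")
```

Under those assumptions the supersampled single display is the heavier load of the two.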

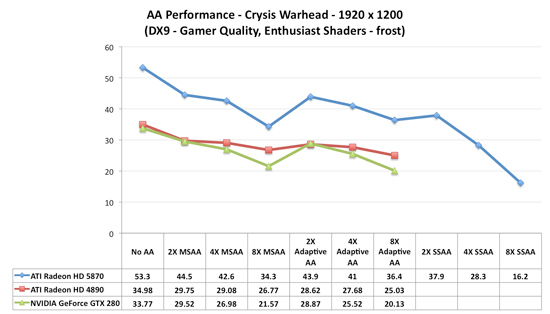

Our second, larger performance test is Crysis: Warhead. Here we are again testing the game in DX9 mode, at a resolution of 1920x1200. Since this is a look at the impact of AA on various architectures, we will limit this test to the 5870, the GTX 280, and the Radeon HD 4890. Our interest here is in performance relative to no anti-aliasing, and whether different architectures lose the same amount of performance or not.

Starting with the 5870, moving from 0x AA to 4x MSAA only incurs a 20% drop in performance, while 8x MSAA increases that drop to 35%, leaving 8x MSAA at roughly 80% of the 4x MSAA performance. Interestingly, in spite of the heavy foliage in the scene, Adaptive AA carries virtually no performance hit over regular MSAA, producing nearly identical results. SSAA is of course the big loser here, quickly dropping to unplayable levels. As we discussed earlier, the quality of SSAA is no better than MSAA + AAA here.

Moving on, we have the 4890. While the overall performance is lower, interestingly enough the drop in performance from MSAA is not quite as large, at only 17% for 4x MSAA and 25% for 8x MSAA. This makes the performance of 8x MSAA relative to 4x MSAA 92%. Once again the performance hit from enabling AAA is minuscule, at roughly 1 FPS.

Finally we have the GTX 280. The drop in performance here is in line with that of the 5870: 20% for 4x MSAA and 36% for 8x MSAA, with 8x MSAA offering 80% of the 4x MSAA performance. Even enabling supersample transparency AA only knocks off 1 FPS, just like AAA under the 5870.
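For readers who want to check the relative figures, the sketch below derives 8x MSAA performance as a fraction of 4x MSAA from the percentage drops quoted for each card. The function name is ours, and because the quoted drops are already rounded, the results can land a point or two away from the ratios given in the text, which were presumably computed from the unrounded frame rates.

```python
# Sketch: 8x MSAA performance relative to 4x MSAA, derived from the
# (rounded) drops versus no AA quoted in the text.

def relative_performance(drop_4x: float, drop_8x: float) -> float:
    """Return 8x MSAA performance as a fraction of 4x MSAA performance."""
    return (1 - drop_8x) / (1 - drop_4x)

# (4x MSAA drop, 8x MSAA drop), both relative to no anti-aliasing.
drops = {
    "Radeon HD 5870": (0.20, 0.35),
    "Radeon HD 4890": (0.17, 0.25),
    "GTX 280": (0.20, 0.36),
}

for card, (drop_4x, drop_8x) in drops.items():
    ratio = relative_performance(drop_4x, drop_8x)
    print(f"{card}: 8x MSAA runs at about {ratio:.0%} of 4x MSAA")
```

Working from ratios rather than raw frame rates keeps the comparison independent of each card's absolute speed, which is the point of this test.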

What this leaves us with are very curious results. On a percentage basis the 5870 is no better than the GTX 280, which isn’t an irrational thing to see, but it does worse than the 4890. At this point we don’t have a good explanation for the difference; perhaps it’s a product of early drivers or the early BIOS? It’s something that we’ll need to investigate at a later date.

Wrapping things up, as we discussed earlier AMD has been pitching the idea of better 8x MSAA performance in the 5870 compared to the 4800 series due to the extra cache. Although from a practical perspective we’re not sold on the idea that 8x MSAA is a big enough improvement to justify any performance hit, we can put to rest the idea that the 5870 is any better at 8x MSAA than prior cards. At least in Crysis: Warhead, we’re not seeing it.

327 Comments


Xajel - Wednesday, September 23, 2009

Ryan, I've sent you a detailed solution for this problem (Aero disabled when running any video with UVD acceleration), but the basics for all readers here: just install the HydraVision & Avivo Video Converter packages, and this should fix the problem...

Ryan Smith - Wednesday, September 23, 2009

Just so we're clear, Basic mode is only being triggered when HDCP is being used to protect the content. It is not being triggered by just using the UVD with regular/unprotected content.

biigfoot - Wednesday, September 23, 2009

Well, I've been looking forward to reading your review of this new card for a little while now, especially since the realistic sounding specs leaked out a week or so ago. My first honest impression is that it looks like it'll be a little bit longer till the drivers mature and the game developers figure out some creative ways to bring the processor up to its full potential. In the meantime, I'd have to agree with everyone's conclusion that even 150+GB/s isn't enough memory bandwidth for the beast; I'm sure it was a calculated compromise while the design was still on the drawing board. Unfortunately, increasing the bus isn't as easy as stapling on a couple more memory controllers. They probably would've had to resort back to a wider ring bus, and they've already been down that road (R600, anyone?). Already knowing how much more die area and power would've been required to execute such a design probably made it rather easy to decide to stick with the tested 4 channel GDDR5 setup that worked so well for the RV770 and RV790. As for how much a 6 or 8 channel (384/512 bit) memory controller setup would've improved performance, we'll probably never know; as awesome as it would be, I don't foresee BoFox's idea of ATI pulling a fast one on nVidia and the rest of us by releasing a 512-bit derivative in short order, but crazier things have happened.

biigfoot - Wednesday, September 23, 2009

Oh, I also noticed, they must have known that the new memory controller topology wasn't going to cut it all the time, judging from all the cache augmentations performed. But like I said, given time, I'm sure they'll optimize the drivers to take advantage of all the new functionality. I'm betting that 90% of the low level functions are still handled identically to how they were in the last generation's architecture, and it will take a while till all the hardware optimizations like the cache upgrades are fully realized.

piroroadkill - Wednesday, September 23, 2009

The single most disappointing thing about this card is the noise and heat.

Sapphire have been coming out with some great coolers recently, in their VAPOR-X line. Why doesn't ATI stop using the same dustbuster cooler in a new shiny cover, and create a much, much quieter cooler, so the rest of us don't have to wait for a million OEM variations until there's one with a good cooler (or fuck about and swap the cooler ourselves, but unless you have one that covers the GPU AND the RAM chips, forget it. Those pathetic little sticky pad RAM sinks suck total donkey balls.)

Kaleid - Wednesday, September 23, 2009

Already showing up... http://www.techpowerup.com/104447/Sapphire_HD_5870...

It will be possible to cool the card quietly...

Dante80 - Wednesday, September 23, 2009

The answer you are looking for is simple. Another, more elaborate cooler would raise prices more, and vendors don't like that. Remember what happened to the more expensive stock cooler for the 4770? ...;)

Cookie Monster - Wednesday, September 23, 2009

When running dual/multi monitors with past generation cards, the cards would always run at full 3D clocks or else face instabilities, screen corruption, etc. So even if the cards were at idle, they would never ramp down to the 2D clocks to save power, rendering impressive low idle power consumption numbers useless (especially on the GTX200 series cards).

Now with RV870, has this problem been fixed?

Ryan Smith - Wednesday, September 23, 2009

That's a good question, and something we didn't test. Unfortunately we're at IDF right now, so it's not something we can test at this moment, either.

Cookie Monster - Wednesday, September 23, 2009

It would be awesome if you guys do get some free time to test it out. Would be really appreciated! :)