AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

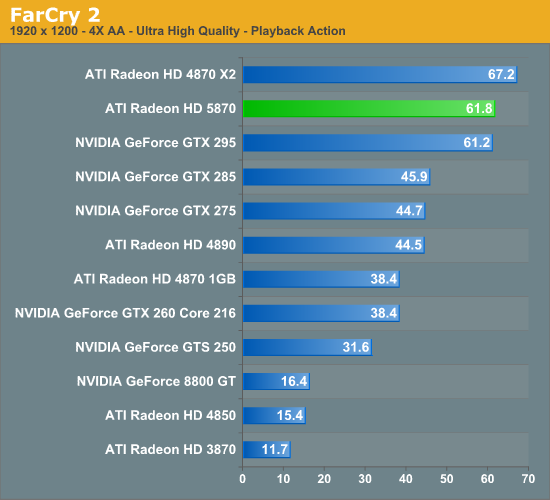

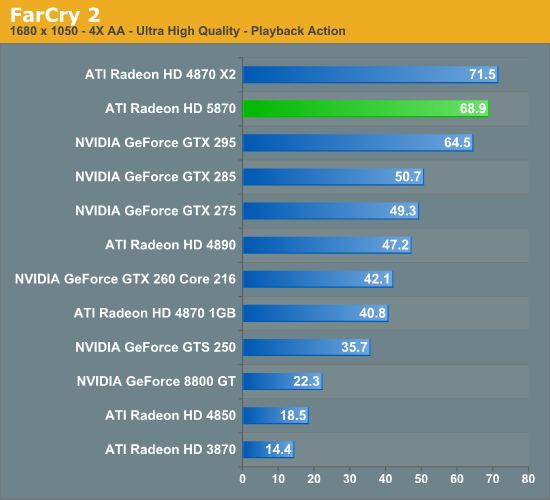

Far Cry 2

Far Cry 2 is another foliage-heavy game. Thankfully it’s not nearly as punishing as Crysis, and it’s possible to achieve a good framerate even with all the settings at their highest.

Unlike in Crysis, a few interesting things are going on here for the 5870. First, it just manages to beat the GTX 295. Second, it loses to its other multi-GPU competitor, the 4870X2. Against its single-GPU competitors, however, it’s no contest: the 5870 beats the GTX 285 by over 40%.

Meanwhile the 5870 CF once again comes out on top of it all. This is going to be a recurring pattern, folks.

327 Comments

poohbear - Wednesday, September 23, 2009 - link

is it just me or is anyone else disappointed? next gen cards used to double the performance of previous gen cards, this card beats em by a measly 30-40%. *sigh* times change i guess.

AznBoi36 - Wednesday, September 23, 2009 - link

It's just you. The next generations never doubled in performance. Rather they offered a bump in framerates (15-40%) along with better texture filtering, AA, AF etc...

I'd rather my games look AMAZING at 60fps rather than crappy graphics at 100fps.

SiliconDoc - Monday, September 28, 2009 - link

Golly, another red rooster lie, they just NEVER stop. Let's take it right from this site, so your whining about it being nv zone or fudzilla or whatever shows ati is a failure in the very terms claimed is not your next, dishonest move.

---

NVIDIA w/ GT200 spanks their prior generation by 60.96% !

That's nearly 61% average increase at HIGHEST RESOLUTION and HIGHEST AA AF settings, and it right here @ AT - LOL -

- and they matched the clock settings JUST TO BE OVERTLY UNFAIR ! ROFLMAO AND NVIDIA'S NEXT GEN LEAP STILL BEAT THE CRAP OUT OF THIS LOUSY ati 5870 EPIC FAIL !

http://www.anandtech.com/video/showdoc.aspx?i=3334...

--

roflmao - that 426.70/7 = 60.96 % INCREASE FROM THE LAST GEN AT THE SAME SPEEDS, MATCHED FOR MAKING CERTAIN IT WOULD BE AS LOW AS POSSIBLE ! ROFLMAO NICE TRY BUT NVIDIA KICKED BUTT !

---

Sorry, the "usual" is not 15-30% - lol

---

NVIDIA's last usual was !!!!!!!!!!!! 60.96% INCREASE AT HIGHEST SETTINGS !

-

Now, once again, please, no lying.

piroroadkill - Wednesday, September 23, 2009 - link

No, it's definitely just you

Griswold - Wednesday, September 23, 2009 - link

It's just you. Go buy a clue.

ET - Wednesday, September 23, 2009 - link

Should probably be removed...

Nice article. The 5870 doesn't really impress. It's the price of two 4890 cards, so for rendering power that's probably the way to go. I'll be looking forward to the 5850 reviews.

Zingam - Wednesday, September 23, 2009 - link

Good but as seen it doesn't play Crysis once again... :D We shall wait for 8Gb RAM DDR 7, 16 nm Graphics card to play this damned game!

BoFox - Wednesday, September 23, 2009 - link

Great article!

Re: Shader Aliasing nowhere to be found in DX9 games--

Shader aliasing is present all over the Unreal3 engine games (UT3, Bioshock, Batman, R6:Vegas, Mass Effect, etc..). I can imagine where SSAA would be extremely useful in those games.

Also, I cannot help but wonder if SSAA would work in games that use deferred shading instead of allowing MSAA to work (examples: Dead Space, STALKER, Wanted, Bionic Commando, etc..), if ATI would implement brute-force SSAA support in the drivers for those games in particular.

I am amazed at the perfectly circular AF method, but would have liked to see 32x AF in addition. With 32x AF, we'd probably be seeing more of a difference. If we're awed by seeing 16x AA or 24x CFAA, then why not 32x AF also (given that the increase from 8 to 16x AF only costs like 1% performance hit)?

Why did ATI make the card so long? It's even longer than a GTX 295 or a 4870X2. I am completely baffled at this. It only has 8 memory chips, uses a 256-bit bus, unlike a more complex 512-bit bus and 16 chips found on a much, much shorter HD2900XT. There seems to be so much space wasted on the end of the PCB. Perhaps some of the vendors will develop non-reference PCB's that are a couple inches shorter real soon. It could be that ATI rushed out the design (hence the extremely long PCB draft design), or that ATI deliberately did this to allow 3rd-party vendors to make far more attractive designs that will keep us interested in the 5870 right around the time of GT300 release.

Regarding the memory bandwidth bottleneck, I completely agree with you that it certainly seems to be a severe bottleneck (although not too severe that it only performs 33% better than a HD4890). A 5870 has exactly 2x the specifications of a 4890, yet it generally performs slower than a 4870X2, let alone dual-4890 in Xfire. A 4870 is slower than a 4890 to begin with, and is dependent on Crossfire.

Overall, ATI is correct in saying that a 5870 is generally 60% faster than a 4870 in current games, but theoretically, a 5870 should be exactly 100% faster than a 4890. Only if ATI could have used 512-bit memory bandwidth with GDDR5 chips (even if it requires the use of a 1024-bit ringbus) would the total memory bandwidth be doubled. The performance would have been at least as good as two 4890's in crossfire, and also at least as good as a GTX295.

I am guessing that ATI wants to roll out the 5870X2 as soon as possible and realized that doing it with a 512-bit bus would take up too much time/resources/cost, etc.. and that it's better to just beat NV to the punch a few months in advance. Perhaps ATI will do a 5970 card with 512-bit memory a few months after a 5870X2 is released, to give GT300 cards a run for its money? Perhaps it is to "pacify" Nvidia's strategy with its upcoming next-gen that carry great promises with a completely revamped architecture and 512 shaders, so that NV does not see the need to make its GT300 exceed the 5870 by far too much? Then ATI would be able to counter right afterwards without having to resort to making a much bigger chip?

Speculation.. speculation...

Lakku - Wednesday, September 23, 2009 - link

Read some of the other 5870 articles that cover SSAA image quality. It actually makes most modern games look worse, but that is through no fault of ATi, just the nature of the SS method that literally AA's everything, and in the process, can/does blur textures.

strikeback03 - Wednesday, September 23, 2009 - link

I don't know much about video games, but in photography it is known that reducing the size of an image reduces the appearance of sharpness as well, so final sharpening should be done at the output size.