AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST, posted in GPUs

Eyefinity

Sometime around 2006-2007, ATI was working on the overall specifications for what would eventually become the RV870 GPU. These GPUs are designed by combining the views of ATI's engineers with the demands of developers, end users and OEMs. In the case of Eyefinity, the initial demand came directly from the OEMs.

ATI was working on the mobile version of its RV870 architecture, which at the request of OEMs already had a number of DisplayPort (DP) outputs. The OEMs wanted up to six DP outputs from the GPU, but with only two active at a time. The six broke down as two for internal panels (in case an OEM wanted to build a dual-monitor notebook, which has since happened), two for external outputs (one DP and one DVI/VGA/HDMI, for example), and two for passing through to a docking station. Again, only two had to be active at once, so the GPU had six sets of DP lanes but only enough display engines to drive two simultaneously.

ATI looked at the effort required to enable all six outputs simultaneously and decided to do it; as a result, the RV870 GPU can drive up to six displays at once. Not all cards support this, since a card first needs the requisite number of display outputs. The standard Radeon HD 5870 can drive three outputs simultaneously: up to two monitors on any combination of its DVI and HDMI ports, plus one on its DisplayPort output, which is independent of DVI/HDMI. Later this year you'll see a version of the card with six mini-DisplayPort outputs for driving six monitors.
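To make the HD 5870's output rule concrete, here is a minimal Python sketch that checks whether a set of active connections fits within it. The function name `can_drive` and the idea of modeling ports as plain strings are our own illustration, not anything from ATI's driver; the limits encode only what's stated above (up to two DVI/HDMI monitors plus one DisplayPort monitor on the standard card).

```python
from collections import Counter

# Output rules for the standard Radeon HD 5870 as described in the text.
# The helper and its limits are illustrative, not taken from ATI's driver.
MAX_DVI_HDMI = 2  # any mix of DVI and HDMI, up to two monitors
MAX_DP = 1        # the single DisplayPort output, independent of DVI/HDMI


def can_drive(connections: list[str]) -> bool:
    """Return True if this set of active connections fits the card's limits."""
    counts = Counter(connections)
    unknown = set(counts) - {"DVI", "HDMI", "DP"}
    if unknown:
        raise ValueError(f"unknown output type(s): {sorted(unknown)}")
    dvi_hdmi = counts["DVI"] + counts["HDMI"]
    return dvi_hdmi <= MAX_DVI_HDMI and counts["DP"] <= MAX_DP


print(can_drive(["DVI", "HDMI", "DP"]))   # True: the setup used for our tests
print(can_drive(["DVI", "DVI", "HDMI"]))  # False: three monitors on DVI/HDMI
```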

It's not just hardware; there's a software component as well. The Radeon HD 5000 series driver lets you combine all of these display outputs into a single large surface, visible to Windows and your games as one display with a tremendous resolution.

I set up a group of three Dell 24" displays (U2410s). This isn't exactly what Eyefinity was designed for since each display costs $600, but the point is that you could group three $200 1920 x 1080 panels together and potentially have a more immersive gaming experience (for less money) than a single 30" panel.

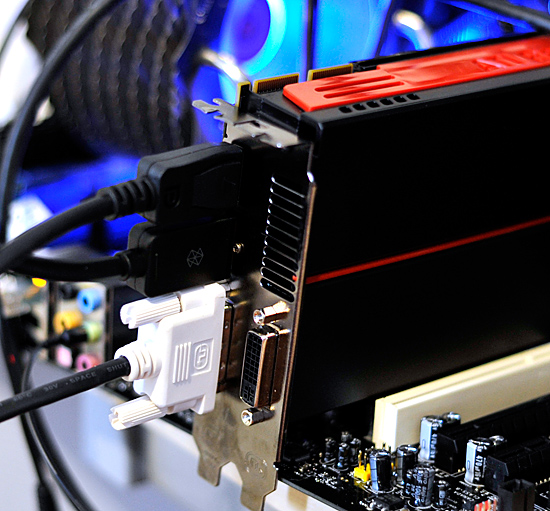

For our Eyefinity tests I chose to use every type of output on the card: one DVI, one HDMI and one DisplayPort:

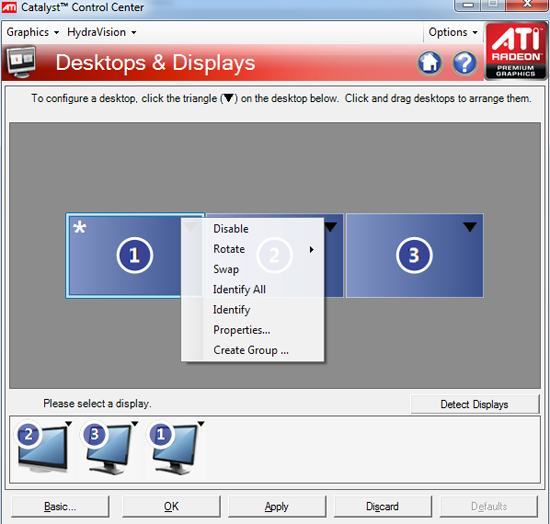

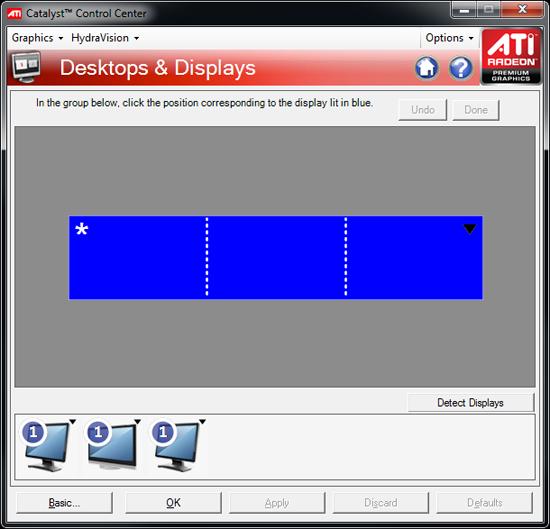

With all three outputs connected, Windows defaults to cloning the display across all monitors. Going into ATI's Catalyst Control Center lets you configure your Eyefinity groups:

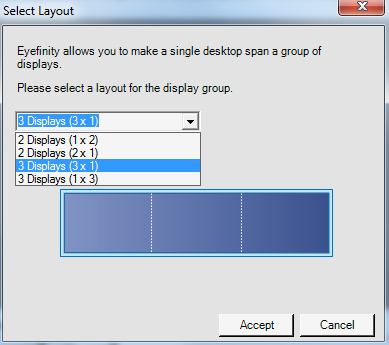

With three displays connected I could create a single 1x3 or 3x1 arrangement of displays. I also had the ability to rotate the displays first so they were in portrait mode.
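The resolution of the resulting surface is just the panel resolution multiplied out across the grid, with each panel's width and height swapped when it is rotated to portrait. A quick sketch, assuming identical 1920 x 1200 panels like the U2410s and no bezel compensation (the helper `surface_resolution` is hypothetical):

```python
def surface_resolution(panel_w: int, panel_h: int, cols: int, rows: int,
                       portrait: bool = False) -> tuple[int, int]:
    """Resolution of a single large surface tiled from identical panels.

    Assumes no bezel compensation: the panels' pixels are tiled edge to edge.
    Rotating to portrait swaps each panel's width and height.
    """
    if portrait:
        panel_w, panel_h = panel_h, panel_w
    return panel_w * cols, panel_h * rows


# Three 1920 x 1200 panels
print(surface_resolution(1920, 1200, cols=3, rows=1))                 # (5760, 1200)
print(surface_resolution(1920, 1200, cols=3, rows=1, portrait=True))  # (3600, 1920)
print(surface_resolution(1920, 1200, cols=1, rows=3))                 # (1920, 3600)
```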

You can create smaller groups, although the ability to do so disappeared after I created my first Eyefinity setup (even after deleting it and trying to recreate it). Once you've selected the type of Eyefinity display you'd like to create, the driver will make a guess as to the arrangement of your panels.

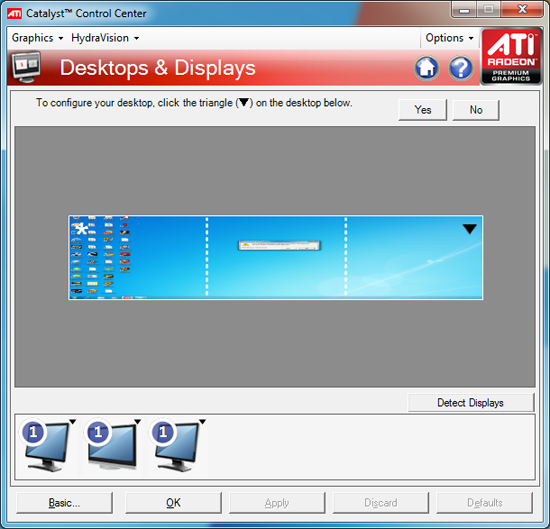

If it guessed correctly, just click Yes and you're good to go. Otherwise ATI has a handy way of determining the location of your monitors:

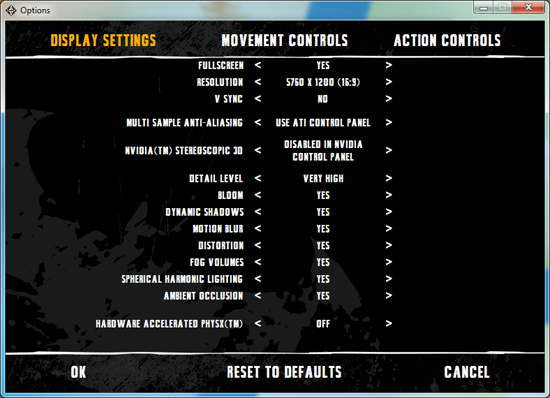

With the software side taken care of, you now have a Single Large Surface as ATI likes to call it. The display appears as one contiguous panel with a ridiculous resolution to the OS and all applications/games:

Three 24" panels in a row give us 5760 x 1200

The screenshot above should clue you in to the first problem with an Eyefinity setup: aspect ratio. While the Windows desktop simply expands to give you more screen real estate, some games don't increase how much you can see; they just stretch the viewport to fill all of the horizontal resolution. The resolution is correctly listed in Batman: Arkham Asylum, but the aspect ratio is not: 5760:1200 reduces to 24:5 (4.8:1), which is nowhere near 16:9. In these situations my Eyefinity setup made me feel downright sick; the weird stretching of characters as they moved toward the outer edges of my vision left me feeling ill.

Despite Oblivion's support for ultra-wide aspect ratio gaming, by default the game stretches to occupy all of the horizontal resolution
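The arithmetic behind the stretching is easy to check. Assuming a game renders a 16:9 image and simply scales it to fill the 24:5 surface, everything is widened by the ratio of the two aspect ratios, which works out to 2.7x. A short sketch using Python's standard `fractions` module (the helper name `aspect` is ours):

```python
from fractions import Fraction


def aspect(width: int, height: int) -> Fraction:
    """Aspect ratio as a reduced fraction, e.g. 5760 x 1200 -> 24/5."""
    return Fraction(width, height)


surface = aspect(5760, 1200)  # three U2410s side by side
single = aspect(1920, 1200)   # one U2410 (16:10)
assumed = Fraction(16, 9)     # assumption: the aspect ratio the game renders at

print(surface, float(surface))  # 24/5 4.8
print(single)                   # 8/5
# Horizontal stretch if a 16:9 image is scaled to fill the whole surface
print(float(surface / assumed))  # 2.7
```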

Other games have their own quirks. Resident Evil 5 correctly identified the resolution but appeared to maintain a 16:9 aspect ratio without stretching. In other words, while my display was only 1200 pixels high, the game rendered as if it were 3240 pixels high and only fit what it could onto my screens. This resulted in unusable menus and a game that wasn't actually playable once you got into it.
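The 3240-pixel figure follows directly from keeping 16:9 at a width of 5760 pixels. Assuming the game renders that full-height frame and the panels show only a 1200-pixel slice of it, only about 37% of the picture fits vertically. A minimal sketch (the function name `implied_height` is hypothetical):

```python
def implied_height(width: int, aspect_w: int = 16, aspect_h: int = 9) -> float:
    """Height needed to keep an aspect_w:aspect_h ratio at the given width."""
    return width * aspect_h / aspect_w


needed = implied_height(5760)    # the height RE5 behaved as if it had
visible = 1200 / needed          # share of that height the panels can show
print(needed, f"{visible:.0%}")  # 3240.0 37%
```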

Games with pre-rendered cutscenes generally don't mesh well with Eyefinity either. In fact, anything that's not rendered on the fly tends to only occupy the middle portion of the screens. Game menus are a perfect example of this:

There are other issues with Eyefinity that go beyond just properly taking advantage of the resolution. While the three-monitor setup pictured above is great for games, it's not ideal in Windows. You'd want your main screen to be the one in the center, but since it's a single large display, your Start menu actually appears on the leftmost panel. The same applies to games that have a HUD in the lower left or lower right corner of the display. In Oblivion your health, magic and endurance bars all appear in the lower left, which in the setup above means you have to look to the far left corner of the left panel for your vitals. Given that each panel is nearly two feet wide, that's a long way to look.
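To put numbers on the HUD problem, the sketch below maps a horizontal pixel coordinate on the 5760-pixel surface to a panel and estimates each panel's physical width from its diagonal. Both helpers are hypothetical; we assume a nominal 24-inch 16:10 diagonal and ignore bezels, which gives roughly 20 inches per panel.

```python
import math

PANEL_PX_W = 1920  # horizontal resolution of one Dell U2410
PANELS = 3         # 3 x 1 landscape arrangement


def panel_index(x: int) -> int:
    """Panel (0 = left, 1 = center, 2 = right) that surface x-coordinate x falls on."""
    if not 0 <= x < PANEL_PX_W * PANELS:
        raise ValueError("x is outside the 5760-pixel-wide surface")
    return x // PANEL_PX_W


def panel_width_inches(diagonal: float, aspect_w: int = 16, aspect_h: int = 10) -> float:
    """Physical panel width from its diagonal and aspect ratio (assumes square pixels)."""
    return diagonal * aspect_w / math.hypot(aspect_w, aspect_h)


width = panel_width_inches(24)  # assumption: nominal 24-inch 16:10 diagonal
# The Start menu and Oblivion's vitals sit near x = 0, on the left panel. The middle
# of the center panel (x = 2880) is 1.5 panel widths away from that edge.
print(panel_index(0), panel_index(2880), round(1.5 * width, 1))  # 0 1 30.5
```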

The biggest issue everyone worried about was bezel thickness hurting the experience. To be honest, bezel thickness was only an issue for me when I oriented the monitors in portrait mode. When you're sitting close to an array of sufficiently wide panels, the bezels aren't that big of a deal. Which brings me to the next point: immersion.

The game that sold me on Eyefinity was actually one that I don't play: World of Warcraft. The game handled the ultra-wide resolution perfectly. It didn't stretch any content; it just expanded my viewport. With the left and right displays angled inward slightly, WoW was more immersive. It's not so much that I could see what was going on around me, but that whenever I moved forward I had more of the game world in my peripheral vision than I usually do. Running through a field felt more like running through a field, since there was more field in my vision. It's the only example where I actually felt like this was the first step toward the holy grail of creating the Holodeck. The effect was pretty impressive, although costly given that I only really attained it in a single game.
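One common way for a game to expand the viewport instead of stretching it is to hold the vertical field of view fixed and widen the horizontal field of view as the aspect ratio grows, a scheme often called Hor+. We don't know exactly how WoW implements its widescreen support, so treat this as a sketch of the general technique; the 60-degree vertical FOV is an arbitrary assumption.

```python
import math


def horizontal_fov(vertical_fov_deg: float, aspect: float) -> float:
    """Horizontal FOV in degrees when the vertical FOV is held fixed (Hor+ scaling)."""
    half_v = math.radians(vertical_fov_deg) / 2
    return math.degrees(2 * math.atan(math.tan(half_v) * aspect))


VFOV = 60.0  # hypothetical vertical FOV; the game's real value isn't known here
for label, ratio in [("single 16:10 panel", 1920 / 1200),
                     ("3 x 1 Eyefinity surface", 5760 / 1200)]:
    print(f"{label}: {horizontal_fov(VFOV, ratio):.1f} degrees horizontal")
```

Because the vertical FOV never changes, objects keep their proportions; the extra width simply reveals more of the scene at the sides instead of stretching it.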

Before using Eyefinity myself, I thought I would hate the bezel thickness of the Dell U2410 monitors, and I felt that the experience wouldn't be any more engaging. I was wrong on both counts, but I was also wrong to assume that all games would just work perfectly. Of the four games I tried, only WoW worked flawlessly; the rest either had issues rendering at the unusually wide resolution or simply stretched the content and didn't give me enough additional view space to make the feature useful. Will this all change given that in six months ATI's entire graphics lineup will support three displays? I'd say that's more than likely. The last company to attempt something similar was Matrox, and it unfortunately didn't have the graphics horsepower to back it up.

The Radeon HD 5870 itself is fast enough to render many games at 5760 x 1200, even at full detail settings. I managed 48 fps in World of Warcraft and a staggering 66 fps in Batman: Arkham Asylum without AA enabled. It's absolutely playable.
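For context on those frame rates, the sketch below works out how much pixel work 5760 x 1200 represents. The frame rates are the ones measured above; the 2560 x 1600 figure is our assumption for the typical 30-inch panel mentioned earlier.

```python
SURFACE_W, SURFACE_H = 5760, 1200
REFERENCE_DISPLAYS = {
    "one 1920 x 1200 panel": (1920, 1200),
    "a 2560 x 1600 30-inch panel": (2560, 1600),  # assumed typical 30" resolution
}
MEASURED_FPS = {"World of Warcraft": 48, "Batman: Arkham Asylum": 66}

pixels = SURFACE_W * SURFACE_H  # 6,912,000 pixels per frame

for name, (w, h) in REFERENCE_DISPLAYS.items():
    print(f"{pixels / (w * h):.2f}x the pixels of {name}")

for game, fps in MEASURED_FPS.items():
    frame_ms = 1000 / fps                  # time budget per frame
    mpix_per_s = pixels * fps / 1_000_000  # output pixels per second, in millions
    print(f"{game}: {frame_ms:.1f} ms per frame, ~{mpix_per_s:.0f} Mpixels/s")
```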

327 Comments


Agentbolt - Wednesday, September 23, 2009

Informative and well-written. My main question was "how future-proof is it?" I got the Radeon 9700 for DirectX9, the 8800GTS for DirectX10, and it looks like I may very well be picking this up for DirectX11. It's nice there's usually one card you can pick up early that'll run games for years to come at acceptable levels.

kumquatsrus - Wednesday, September 23, 2009

great article and great card btw. just wanted to point out that the gtx 285 also had 2x6 pins only required, i believe.

Ryan Smith - Wednesday, September 23, 2009

That's correct. I'm not sure how "275" ended up in there.

SiliconDoc - Wednesday, September 23, 2009

One wonders how the 8800GT ended up on the Temp/Heat comparison, until you READ the text, and it claims heat is "all over the place", then the very next line is "ALL the Ati's are up @~around 90C". Yes, so temp is NOT all over the place, it's only VERY HIGH for ALL the ATI cards... and NVIDIA cards are not all very high...

-so it becomes CLEAR the 8800GT was included ONLY so the article could whine it was at 92C, since the 275 is @ 75C and the 260 is low, the 285 is low, etc., NVidia WINS HANDS DOWN the temperature game...... but the article just couldn't bring itself to be HONEST about that.

---

What a shame. Deception, the name of the game.

Ryan Smith - Wednesday, September 23, 2009

The 8800GT, as was the 3870, was included to offer a snapshot of an older value product in our comparisons. The 8800GT in particular was a very popular card, and there are still a lot of people out there using them. Including such cards provides a frame of reference for performance for people using such cards.

SiliconDoc - Wednesday, September 23, 2009

Gee I cannot imagine load temps for the 4890 and 4870x2 exist anywhere else on this site along with the 260, 275, and 285... can you? Oh, how about I look...

Finally - Wednesday, September 23, 2009

Nvidia-Trolls tend to turn green when feeling inferior.

SiliconDoc - Wednesday, September 23, 2009

Turning green was something the 40nm 5870 was supposed to do, wasn't it? Instead it turned into another 3D HEAT MONSTER, like all the ati cards.

Take a look at the power charts, then look at that "wonderful tiny ATI die size that makes em so much money!" (as they lose a billion plus a year), and then calculate that power into that tiny core, NOT minusing failure for framerates hence "less data", since of course ati cards are "faster" right ?

So you've got more power in a smaller footprint core...

HENCE THE 90 DEGREE CELSIUS RUNNING RATES, AND BEYOND.

---

Yeah, so sorry that it's easier for you to call names than think.

RubberJohnny - Wednesday, September 23, 2009

LOL...replying to your own post 3 times...gettin all worked up about temps...PUTTIN STUFF IN CAPS...Looks like this fan boy just can't accept that the 5870 is a great card. Not surprising really, these reviews always seem to bring the fanboys/trolls/whackos out of the woodwork.

Once again, good job AT!!!

JarredWalton - Thursday, September 24, 2009

SiliconDoc, you should try thinking instead of trolling. Why would the maximum be around 90C? Because that's what the cards are designed to target under load. If they get hotter, the fan speeds would ramp up a bit more. There's no need to run fans at high rates to cool down hardware if the hardware functions properly.

Reviewing based on max temperatures is a stupid idea when other factors come into play, which is why one page has power draws, temperatures, and noise levels. The GTX 295 has the same temperature not because it's "as hot" but because the fan kicked up to a faster speed to keep that level of heat.

The only thing you can really conclude is that slower GPUs generate less heat and thus don't need to increase fan speeds. The 275 gets hotter than the 285 as well by 10C, but since the 285 is 11.3 dB louder I wouldn't call it better by any stretch. It's just "different".