AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in: GPUs

DirectX11 Redux

With the launch of the 5800 series, AMD is quite proud of the position they’re in. They have a DX11 card launching a month before DX11 is dropped on to consumers in the form of Win7, and the slower timing of NVIDIA means that AMD has had silicon ready far sooner. This puts AMD in the position of Cypress being the de facto hardware implementation of DX11, a situation that is helpful for the company in the long term as game development will need to begin on solely their hardware (and programmed against AMD’s advantages and quirks) until such a time that NVIDIA’s hardware is ready. This is not a position that AMD has enjoyed since 2002 with the Radeon 9700 and DirectX 9.0, as DirectX 10 was anchored by NVIDIA due in large part to AMD’s late hardware.

As we have already covered DirectX 11 in-depth with our first look at the standard nearly a year ago, this is going to be a recap of what DX11 is bringing to the table. If you’d like to get the entire inside story, please see our in-depth DirectX 11 article.

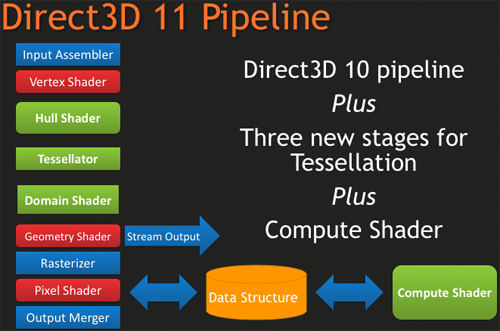

DirectX 11, as we have previously mentioned, is a pure superset of DirectX 10. Rather than being the massive overhaul of DirectX that DX10 was compared to DX9, DX11 builds off of DX10 without throwing away the old ways. The result of this is easy to see in the hardware of the 5870, where as features were added to the Direct3D pipeline, they were added to the RV770 pipeline in its transformation into Cypress.

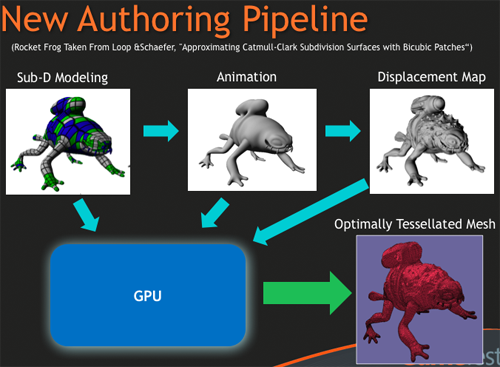

New to the Direct3D pipeline for DirectX 11 is the tessellation system, which is divided up into three parts, and the Compute Shader. Starting at the very top of the tessellation stack, we have the Hull Shader. The Hull Shader is responsible for taking in patches and control points (tessellation directions) to prepare a piece of geometry to be tessellated.

Next up is the tessellator proper, which is a rather significant piece of fixed function hardware. The tessellator’s sole job is to take geometry and to break it up into more complex portions, in effect creating additional geometric detail from where there was none. As setting up geometry at the start of the graphics pipeline is comparatively expensive, this is a very cool hack to get more geometric detail out of an object without the need to fully deal with what amounts to “eye candy” polygons.

As the tessellator is not programmable, it simply tessellates whatever it is fed. This is what makes the Hull Shader so important, as it serves as the programmable input side of the tessellator.

Once the tessellator is done, it hands its work off to the Domain Shader, and the Hull Shader hands its original inputs to the Domain Shader as well. The Domain Shader is responsible for any further manipulations of the tessellated data that need to be made, such as applying displacement maps, before passing it along to other parts of the GPU.
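To make the division of labor between the three stages concrete, here is a toy one-dimensional analogue in Python. It is only a sketch: real tessellation runs in HLSL on the GPU and works on surface patches, and every function name below is made up for illustration. The "hull shader" chooses a tessellation factor for a two-point patch, the fixed-function "tessellator" emits evenly spaced parametric coordinates, and the "domain shader" turns each coordinate into a displaced vertex.

```python
import math

# Toy 1D analogue of the three tessellation stages (all names are illustrative).

def hull_shader(control_points, tess_factor):
    """Programmable input stage: pass the patch along with a tessellation factor."""
    return {"control_points": control_points, "tess_factor": tess_factor}

def tessellator(tess_factor):
    """Fixed-function stage: emit evenly spaced parametric coordinates in [0, 1]."""
    return [i / tess_factor for i in range(tess_factor + 1)]

def domain_shader(patch, u, displacement):
    """Programmable output stage: map a coordinate to a displaced vertex."""
    (x0, y0), (x1, y1) = patch["control_points"]
    x = x0 + (x1 - x0) * u
    y = y0 + (y1 - y0) * u
    return (x, y + displacement(u))

def tessellate(control_points, tess_factor, displacement):
    patch = hull_shader(control_points, tess_factor)
    coords = tessellator(patch["tess_factor"])
    return [domain_shader(patch, u, displacement) for u in coords]

# A flat two-point "patch" becomes five vertices following a sine-shaped bump.
vertices = tessellate([(0.0, 0.0), (4.0, 0.0)], 4, lambda u: math.sin(math.pi * u))
```

The two input points are the only geometry that has to be set up at the start of the pipeline; the extra detail is generated afterwards, which is the saving the article describes.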

The tessellator is very much AMD’s baby in DX11. They’ve been playing with tessellators since as early as 2001, only for them to never gain traction on the PC. The tessellator has seen use in the Xbox 360 where the AMD-designed Xenos GPU has one (albeit much simpler than DX11’s), but when that same tessellator was brought over and put in the R600 and successive hardware, it was never used since it was not a part of the DirectX standard. Now that tessellation is finally part of that standard, we should expect to see it picked up and used by a large number of developers. For AMD, it’s vindication for all the work they’ve put into tessellation over the years.

The other big addition to the Direct3D pipeline is the Compute Shader, which allows for programs to access the hardware of a GPU and treat it like a regular data processor rather than a graphical rendering processor. The Compute Shader is open for use by games and non-games alike, although when it’s used outside of the Direct3D pipeline it’s usually referred to as DirectCompute rather than the Compute Shader.

For its use in games, the big thing AMD is pushing right now is Order Independent Transparency, which uses the Compute Shader to sort transparent textures in a single pass so that they are rendered in the correct order. This isn’t something that was previously impossible using other methods (e.g. pixel shaders), but using the Compute Shader is much faster.
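The underlying idea of order independent transparency can be shown on the CPU with a few lines of Python. This is a sketch of the general sort-then-blend approach for a single pixel, not the Compute Shader implementation AMD is demonstrating; the fragment layout `(depth, rgb, alpha)` and the function names are assumptions made for the example.

```python
def over(src_rgb, src_alpha, dst_rgb):
    """Standard 'over' blend of a transparent fragment on top of a color."""
    return tuple(src_alpha * s + (1.0 - src_alpha) * d
                 for s, d in zip(src_rgb, dst_rgb))

def resolve_pixel(fragments, background):
    """Blend one pixel's fragments back to front, whatever order they arrived in.

    Each fragment is (depth, rgb, alpha); a larger depth is farther away.
    """
    color = background
    for depth, rgb, alpha in sorted(fragments, key=lambda f: f[0], reverse=True):
        color = over(rgb, alpha, color)
    return color

red_far = (0.8, (1.0, 0.0, 0.0), 0.5)
blue_near = (0.3, (0.0, 0.0, 1.0), 0.5)
black = (0.0, 0.0, 0.0)

# Submission order does not matter because the fragments are sorted per pixel.
result_a = resolve_pixel([red_far, blue_near], black)
result_b = resolve_pixel([blue_near, red_far], black)
```

Without the per-pixel sort, the application would have to submit transparent geometry in depth order itself, which is the work this technique is meant to take off the developer's hands.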

Other features finding their way into Direct3D include some significant changes for textures, in the name of improving image quality. Texture sizes are being bumped up to 16K x 16K (that’s a 256MP texture) which for all practical purposes means that textures can be of an unlimited size given that you’ll run out of video memory before being able to utilize such a large texture.
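To put the 16K x 16K figure in perspective, the arithmetic is easy to check. The 4 bytes per pixel below is an assumption (uncompressed 8-bit RGBA, no mipmaps), chosen only to illustrate the scale.

```python
side = 16 * 1024                  # 16K texels per side
pixels = side * side              # total texels in the texture
megapixels = pixels / 2**20       # 256.0, the "256MP" quoted in the text

bytes_per_pixel = 4               # assumption: uncompressed 8-bit RGBA
size_gib = pixels * bytes_per_pixel / 2**30   # 1.0 GiB before mipmaps
```

Under that assumption a single maximum-size texture already occupies a full gigabyte, which is why the limit is effectively unreachable on current cards.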

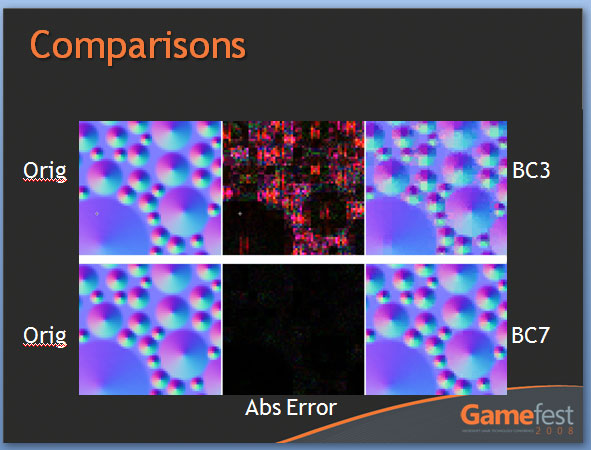

The other change to textures is the addition of two new texture compression schemes, BC6H and BC7. These new texture compression schemes are another one of AMD’s pet projects, as AMD developed them and pushed for their inclusion in DX11. BC6H is the first texture compression method dedicated for use in compressing HDR textures, which previously compressed very poorly using even less-lossy schemes like BC3/DXT5. It can compress textures at a lossy 6:1 ratio. Meanwhile BC7 is for use with regular textures, and is billed as a replacement for BC3/DXT5. It has the same 3:1 compression ratio for RGB textures.
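The 6:1 and 3:1 ratios follow from the block layout of the formats. The sketch below assumes, per the published format definitions, that both BC6H and BC7 store each 4x4 block of texels in 16 bytes, and that the uncompressed sources are 16-bit-per-channel RGB for HDR and 8-bit-per-channel RGB for regular textures.

```python
BLOCK_PIXELS = 4 * 4   # both formats compress 4x4 texel blocks
BLOCK_BYTES = 16       # assumption: 128 bits per compressed block

def compression_ratio(uncompressed_bytes_per_pixel):
    """Ratio of uncompressed block size to compressed block size."""
    return uncompressed_bytes_per_pixel * BLOCK_PIXELS / BLOCK_BYTES

bc6h_ratio = compression_ratio(3 * 2)   # RGB, 2 bytes per channel (HDR) -> 6.0
bc7_ratio = compression_ratio(3 * 1)    # RGB, 1 byte per channel -> 3.0
```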

We’re actually rather excited about these new texture compression schemes, as better ways to compress textures lead directly to better texture quality. Compressing HDR textures allows for larger/better textures due to the space saved, and using BC7 in place of BC3 is an outright quality improvement in the same amount of space, given an appropriate texture. Better compression and tessellation stand to be the biggest contributors to improving the base image quality of games by leading to better textures and better geometry.

We had been hoping to supply some examples of these new texture compression methods in action with real textures, but we have not been able to secure the necessary samples in time. In the meantime we have Microsoft’s examples from GameFest 2008, which drive the point home well enough in spite of being synthetic.

Moving beyond the Direct3D pipeline, the next big feature coming in DirectX 11 is better support for multithreading. By allowing multiple threads to simultaneously create resources, manage states, and issue draw commands, it will no longer be necessary to have a single thread do all of this heavy lifting. As this is an optimization focused on better utilizing the CPU, graphics performance in GPU-limited situations stands to gain little. Rather, this is going to help the CPU better utilize the graphics hardware in CPU-limited situations. Technically this feature does not require DX11 hardware support (it’s a high-level construct available for use with DX10/10.1 cards too) but it’s still a significant technology being introduced with DX11.
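The shape of this change can be sketched in Python: several worker threads each record their own list of state changes and draw calls, and a single submission step plays the lists back in order. This is a structural illustration only. The command tuples, function names, and batch contents are invented for the example, and Python threads do not show the actual CPU scaling a native renderer would see.

```python
from concurrent.futures import ThreadPoolExecutor

def record_commands(object_batch):
    """Worker thread: build a command list without touching the device."""
    commands = []
    for name in object_batch:
        commands.append(("set_state", name))
        commands.append(("draw", name))
    return commands

def submit(command_lists):
    """Single submission point: play back the recorded lists in order."""
    executed = []
    for commands in command_lists:
        executed.extend(commands)
    return executed

batches = [["terrain", "water"], ["characters"], ["particles", "ui"]]

with ThreadPoolExecutor(max_workers=3) as pool:
    # map() returns results in the order of the input batches,
    # so the frame is deterministic even though recording is concurrent.
    command_lists = list(pool.map(record_commands, batches))

frame = submit(command_lists)
```

The point of the design is that the expensive part, deciding what to draw and with which state, no longer has to be serialized on one thread; only the final playback is.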

Last but not least, DX11 is bringing with it High Level Shader Language 5.0, which in turn is bringing several new instructions that are primarily focused on speeding up common tasks, and some new features that make it more C-like. Classes and interfaces will make an appearance here, which will make shader code development easier by allowing for easier segmentation of code. This will go hand-in-hand with dynamic shader linkage, which helps to clean up code by only linking in shader code suitable for the target device, taking the management of that task out of the hands of the coder.
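As a loose analogy for what interfaces and dynamic linkage buy the shader author, consider the Python sketch below. It is not HLSL and does not use any Direct3D API; the class and function names are hypothetical. The shader body is written once against an interface, and the concrete implementation is picked when the shader is bound for a particular device.

```python
from abc import ABC, abstractmethod

class Material(ABC):
    """Interface: every material must provide a shade() method."""
    @abstractmethod
    def shade(self, n_dot_l):
        ...

class SimpleMaterial(Material):
    """Cheap path: plain diffuse term."""
    def shade(self, n_dot_l):
        return max(n_dot_l, 0.0)

class FancyMaterial(Material):
    """More expensive path: diffuse plus a constant ambient term."""
    def shade(self, n_dot_l):
        return min(1.0, 0.25 + max(n_dot_l, 0.0))

def select_material(high_end_device):
    """Pick the implementation suited to the target device at bind time."""
    return FancyMaterial() if high_end_device else SimpleMaterial()

def pixel_shader(material, n_dot_l):
    """Written once against the interface, unaware of the concrete class."""
    return material.shade(n_dot_l)

low_end = pixel_shader(select_material(False), 0.5)
high_end = pixel_shader(select_material(True), 0.5)
```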

327 Comments

SiliconDoc - Thursday, September 24, 2009

Are you seriously going to claim that all ATI are not generally hotter than the nvidia cards ? I don't think you really want to do that, no matter how much you wail about fan speeds.

The numbers have been here for a long time and they are all over the net.

When you have a smaller die cranking out the same framerate/video, there is simply no getting around it.

You talked about the 295, as it really is the only nvidia that compares to the ati card in this review in terms of load temp, PERIOD.

In any other sense, the GT8800 would be laughed off the pages comparing it to the 5870.

Furthermore, one merely needs to look at the WATTAGE of the cards, and that is more than a plenty accurate measuring stick for heat on load, divided by surface area of the core.

No, I'm not the one not thinking, I'm not the one TROLLING, the TROLLING is in the ARTICLE, and the YEAR plus of covering up LIES we've had concerning this very issue.

Nvidia cards run cooler, ati cards run hotter, PERIOD.

You people want it in every direction, with every lying whine for your red god, so pick one or the other:

1.The core sizes are equivalent, or 2. the giant expensive dies of nvidia run cooler compared to the "efficient" "new technology" "packing the data in" smaller, tiny, cheap, profit margin producing ATI cores.

------

NOW, it doesn't matter what lies or spin you place upon the facts, the truth is absolutely apparent, and you WON'T be changing the physical laws of the universe with your whining spin for ati, and neither will the trolling in the article. I'm going to stick my head in the sand and SCREAM LOUDLY because I CAN'T HANDLE anyone with a lick of intelligence NOT AGREEING WITH ME! I LOVE TO LIE AND TYPE IN CAPS BECAUSE THAT'S HOW WE ROLL IN ILLINOIS!

SiliconDoc - Friday, September 25, 2009

Well that is amazing, now a mod or site master has edited my text.

Wow.

erple2 - Friday, September 25, 2009

This just gets better and better...

Ultimately, the true measure of how much waste heat a card generates will have to look at the power draw of the card, tempered with the output work that it's doing (aka FPS in whatever benchmark you're looking at). Since I haven't seen that kind of comparison, it's impossible to say anything at all about the relative heat output of any card. So your conclusions are simply biased towards what you think is important (and that should be abundantly clear).

Given that, one must look at the performance per watt. The only wattage figures we have are for OCCT or WoW playing, so that's all the conclusions one can make from this article. Since I didn't see the results from the OCCT test (in a nice, convenient FPS measure), the WoW numbers give the following:

5870: 73 fps at 295 watts = 247 FPS per kilowatt

275: 44.3 fps at 317 watts = 140 FPS per kilowatt

285: 45.7 fps at 323 watts = 141 FPS per kilowatt

295: 68.9 fps at 380 watts = 181 FPS per kilowatt

That means that the 5870 wins by at least 36% over the other 3 cards. That means that for this observation, the 5870 is, in fact, the most efficient of these cards. It therefore generates less heat than the other 3 cards. Looking at the temperatures of the cards, that strictly measures the efficiency of the cooler, not the efficiency of the actual card itself.

You can say that you think that I'm biased, but ultimately, that's the data I have to go on, and therefore that's the conclusions that can be made. Unfortunately, there's nothing in your post (or more or less all of your posts) that can be verified by any of the information gleaned from the article, and therefore, your conclusions are simply biased speculation.
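The performance-per-watt comparison in this comment can be reproduced with a short Python snippet. The frame rates and wattages are the ones quoted in the comment, and the wattage is whole-system draw rather than card-only draw. Scaling FPS per watt by 1000 (that is, FPS per kilowatt) is just a presentation choice to get whole numbers.

```python
# (frames per second, system watts) as quoted in the comment
results = {
    "5870": (73.0, 295),
    "275": (44.3, 317),
    "285": (45.7, 323),
    "295": (68.9, 380),
}

# FPS per kilowatt = FPS per watt * 1000
efficiency = {card: fps / watts * 1000 for card, (fps, watts) in results.items()}

best = max(efficiency, key=efficiency.get)
runner_up = max(value for card, value in efficiency.items() if card != best)
lead = efficiency[best] / runner_up - 1   # fractional lead over the next-best card
```

With these inputs the 5870 comes out ahead, and its margin over the next-best card (the 295) is a little over 36%, matching the figure in the comment.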

SiliconDoc - Saturday, September 26, 2009

4870, 55nm, 256mm die, 150watts HOT

G260, 55nm, 576mm die, 171watts COLD

3870, 55nm, 192mm die, 106watts HOT

That's all the further I should have to go.

3870 has THE LOWEST LOAD POWER USAGE ON THE CHARTS

- but it is still 90C, at the very peak of heat,

because it has THE TINIEST CORE !

THE SMALLEST CORE IN THE WHOLE DANG BEJEEBER ARTICLE !

It also has the lowest framerate - so there goes that erple theory.

---

The anomalies you will notice if you look, are due to nm size, memory amount on board (less electricity used by the memory means the core used more), and one slot vs two slot coolers, as examples, but the basic laws of physics cannot be thrown out the window because you feel like doing it, nor can idiotic ideas like framerate come close to predicting core temp and its heat density at load.

Older cpus may have horrible framerates and horribly high temps, for instance. The 4850 frames do not equal the 4870's, but their core temp/heat density envelope is very close to identical ( SAME CORE SIZE > the 4850 having some die shaders disabled and ddr3, the 4870 with ddr5 full core active more watts for mem and shaders, but the same PHYSICAL ISSUES - small core, high wattage for area, high heat)
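The power-density argument in this comment boils down to watts divided by die area. The Python snippet below runs that division on the three sets of figures quoted at the top of the comment. Reading the die sizes as square millimeters and the card labels as the Radeon HD 4870, GeForce GTX 260, and Radeon HD 3870 are assumptions about the commenter's shorthand, and the result says nothing about cooler quality or measured temperatures.

```python
# (watts, die area in mm^2) as quoted in the comment; labels are an interpretation
cards = {
    "HD 4870": (150, 256),
    "GTX 260": (171, 576),
    "HD 3870": (106, 192),
}

# Power density in watts per square millimeter of die
density = {name: watts / area for name, (watts, area) in cards.items()}

ranked = sorted(density, key=density.get, reverse=True)
```

On these figures the two Radeon dies land at roughly 0.55 to 0.59 W/mm² against about 0.30 W/mm² for the larger NVIDIA die, which is the gap the comment is pointing at.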

erple2 - Tuesday, September 29, 2009

I didn't say that the 3870 was the most efficient card. I was talking about the 5870. If you actually read what I had typed, I did mention that you have to look at how much work the card is doing while consuming that amount of power, not just temperatures and wattage.

You sir, are a Nazi.

Actually, once you start talking about heat density at load, you MUST look at the efficiency of the card at converting electricity into whatever it's supposed to be doing (other than heating your office). Sadly, the only real way that we have to abstractly measure the work the card is doing is "FPS". I'm not saying that FPS predict core temperature.

SiliconDoc - Wednesday, September 30, 2009

No, the efficiency of conversion you talk about has NOTHING to do with core temp AT ALL. The card could be massively efficient or inefficient at produced framerate, or just ERROR OUT with a sick loop in the core, and THAT HAS ABSOLUTELY NOTHING TO DO WITH THE CORE TEMP. IT RESTS ON WATTS CONSUMED EVEN IF FRAMERATE OUTPUT IS ZERO OR 300SECOND.

(your mind seems to have imagined that if the red god is slinging massive frames "out the dvi port" a giant surge of electricity flows through it to the monitor, and therefore "does not heat the card")

I suggest you examine that lunatic red notion.

What YOU must look at is a red rooster rooter rimshot, in order that your self deception and massive mistake and face saving is in place, for you. At least JaredWalton had the sense to quietly skitter away.

Well, being wrong forever and never realizing a thing is perhaps the worst road to take.

PS - Being correct and making sure the truth is defended has nothing to do with some REDEYE cliche, and I certainly doubt the Gregalouge would embrace red rooster canada card bottom line crumbled for years ever more in a row, and diss big green corporate profits, as we both obviously know.

" at converting electricity into whatever it's supposed to be doing (other than heating your office). "

ONCE IT CONVERTS ELECTRICITY, AS IN "SHOWS IT USED MORE WATTS" it doesn't matter one ding dang smidgen what framerate is,

it could loop sand in the core and give you NO screen output,

and it would still heat up while it "sat on its lazy", tarding upon itself.

The card does not POWER the monitor and have the monitor carry more and more of the heat burden if the GPU sends out some sizzly framerates and the "non-used up watts" don't go sailing out the cards connector to the monitor so that "heat generation winds up somewhere else".

When the programmers optimize a DRIVER, and the same GPU core suddenly sends out 5 more fps everything else being the same, it may or may not increase or decrease POWER USAGE. It can go ANY WAY. Up, down, or stay the same.

If they code in more proper "buffer fills" so the core is hammered solid, instead of flakey filling, the framerate goes up - and so does the temp!

If they optimize, for instance, an algorithm that better predicts what does not need to be drawn as it rests behind another image on top of it, framerate goes up, while temp and wattage used GOES DOWN.

---

Even with all of that, THERE IS ONLY ONE PLACE FOR THE HEAT TO ARISE... AND IT AIN'T OUT THE DANG CABLE TO THE MONITOR!

SiliconDoc - Friday, September 25, 2009

You can modify that, or be more accurate, by using core mass, (including thickness of the competing dies) - since the core mass is what consumes the electricity, and generates heat. A smaller mass (or die size, almost exclusively referred to in terms of surface area with the assumption that thickness is identical or near so) winds up getting hotter in terms of degrees of Celsius when consuming a similar amount of electricity.

Doesn't matter if one frame, none, or a thousand reach your eyes on the monitor.

That's reality, not hokum. That's why ATI cores run hotter, they are smaller and consume a similar amount of electricity, that winds up as heat in a smaller mass, that means hotter.

Also, in actuality, the ATI heatsinks in a general sense, have to be able to dissipate more heat with less surface area as a transfer medium, to maintain the same core temps as the larger nvidia cores and HS areas, so indeed, should actually be "better stock" fans and HS.

I suspect they are slightly better as a general rule, but fail to excel enough to bring core load temps to nvidia general levels.

erple2 - Friday, September 25, 2009

You understand that if there were no heatsink/cooling device on a GPU, it would heat up to crazy levels, far more than would be "healthy" for any silicon part, right? And you understand that measuring the efficiency of a part involves a pretty strong correlation between the input power draw of the card vs. the work that the card produces (which we can really only measure based on the output of the card, namely FPS), right?

So I'm not sure that your argument means anything at all?

Curiously, the output wattage listed is for the entire system, not just for the card. Which means that the actual differences between the ATI cards vs. the nvidia cards is even larger (as a percentage, at least). I don't know what the "baseline" power consumption (sans video card) is for the system acting as the test bed.

Ultimately, the amount of electricity running through the GPU doesn't necessarily tell you how much heat the processors generate. It's dependent on how much of that power is "wasted" as heat energy (that's Thermodynamics for you). The only way to really measure the heat production of the GPU is to determine how much power is "wasted" as heat. Curiously, you can't measure that by measuring the temperature of the GPU. Well, you CAN, but you'd have to remove the Heatsink (and Fan). Which, for ANY GPU made in the last 15 years, would cook it. Since that's not a viable alternative, you simply can't make broad conclusions about which chip is "hotter" than another. And that is why your conclusions are inconclusive.

BTW, the 5870 consumes "less" power than the 275, 285 and 295 GPUs (at least, when playing WoW).

I understand that there may be higher wattage per square millimeter flowing through the 5870 than the GTX cards, but I don't see how that measurement alone is enough to state whether the 5870 actually gets hotter.

SiliconDoc - Saturday, September 26, 2009

Take a look at SIZE my friend.

http://www.hardforum.com/showthread.php?t=1325165

There's just no getting around the fact that the more joules of heat in any time period (wattage used = amount of joules over time!) that go into a smaller area, the hotter it gets, faster !

Nothing changes this, no red rooster imagination will ever change it.

SiliconDoc - Saturday, September 26, 2009

NO, WRONG.

" Ultimately, the true measure of how much waste heat a card generates will have to look at the power draw of the card, tempered with the output work that it's doing (aka FPS in whatever benchmark you're looking at)."

NO, WRONG.

---

Look at any of the cards' power draw in idle or load. They heat up no matter how much "work" you claim they do, by looking at any framerate, because they don't draw the power unless they USE THE POWER. That's the law that includes what usage of electricity MEANS for the law of thermodynamics, or for E=MC2.

DUHHHHH.

---

If you're so bent on making idiotic calculations and applying them to the wrong ideas and conclusions, why don't you take core die size and divide by watts (the watts the companies issue or take it from the load charts), like you should ?

I know why. We all know why.

---

The same thing is beyond absolutely apparent in CPU's, their TDP, their die size, and their heat envelope, including their nm design size.

DUHHH. It's like talking to a red fanboy who cannot face reality, once again.