NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

ECC Support

AMD's Radeon HD 5870 can detect errors on the memory bus, but it can't correct them. Fermi goes further: the register file, L1 cache, L2 cache and DRAM all have full ECC support. This is one of those Tesla-specific features.

Many Tesla customers won't even talk to NVIDIA about moving their algorithms to GPUs unless NVIDIA can deliver ECC support. The scale of their installations is so large that ECC is absolutely necessary (or at least perceived to be).
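The difference between detecting and correcting an error can be shown with the textbook Hamming(7,4) code: three parity bits over four data bits locate any single flipped bit so it can be flipped back, whereas a lone parity bit (`parity_bit` below) only reveals that an odd number of bits changed. This is a minimal Python sketch for illustration only; the article doesn't say which codes Fermi or the HD 5870 actually use, and production memory ECC typically uses wider single-error-correct, double-error-detect (SECDED) codes.

```python
def parity_bit(word: int) -> int:
    """Even-parity bit: reveals an odd number of flipped bits, but not which ones."""
    return bin(word).count("1") % 2


def hamming74_encode(data: int) -> int:
    """Encode 4 data bits as a 7-bit Hamming codeword.

    Codeword position k (1..7) is stored in bit k-1. Parity bits sit at
    positions 1, 2 and 4; data bits d1..d4 sit at positions 3, 5, 6 and 7.
    """
    d1, d2, d3, d4 = [(data >> i) & 1 for i in range(4)]
    p1 = d1 ^ d2 ^ d4  # covers positions 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers positions 5, 6, 7
    bits = [p1, p2, d1, p4, d2, d3, d4]  # positions 1..7 in order
    return sum(b << i for i, b in enumerate(bits))


def hamming74_decode(code: int) -> tuple[int, int]:
    """Return (corrected 4-bit data, position of the flipped bit, or 0 if none)."""
    syndrome = 0
    for pos in range(1, 8):
        if (code >> (pos - 1)) & 1:
            syndrome ^= pos  # XOR of set positions is 0 for a valid codeword
    if syndrome:
        code ^= 1 << (syndrome - 1)  # single-bit error: flip it back
    data_bits = [(code >> (pos - 1)) & 1 for pos in (3, 5, 6, 7)]
    return sum(b << i for i, b in enumerate(data_bits)), syndrome


code = hamming74_encode(0b1011)
corrupted = code ^ (1 << 4)  # flip codeword position 5
print(parity_bit(code) != parity_bit(corrupted))  # parity only says "something changed"
print(hamming74_decode(corrupted))                # ECC also says where, and fixes it
```

Here decoding the corrupted word returns `(11, 5)`: the original data (`0b1011` is 11) together with the position of the bad bit. Note that a plain Hamming(7,4) code would silently miscorrect a double-bit error, which is why real memory systems add an extra check bit.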

Unified 64-bit Memory Addressing

In previous architectures there was a different load instruction depending on the type of memory: local (per thread), shared (per group of threads) or global (per kernel). This created issues with pointers and generally made a mess that programmers had to clean up.

Fermi unifies the address space so that there's only one load instruction, and the address itself determines which memory is accessed. The lowest range of addresses maps to local memory, the next range to shared memory, and the remainder of the address space is global.

The unified address space is apparently necessary to enable C++ support for NVIDIA GPUs, which Fermi is designed to do.
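A minimal Python sketch of the idea, assuming made-up window sizes (the article gives no real boundaries): `resolve` decodes which memory an address falls in, `load` and `store` are the single access path for all three spaces, and `sum_bytes` takes a plain "pointer" without knowing where it points. That last function suggests one plausible reason C++ benefits from unified addressing: a function receiving a pointer generally can't know at compile time which space it refers to, so with separate per-space load instructions it would need a separate version for each. All names and sizes here are hypothetical.

```python
# Hypothetical window sizes chosen for illustration; not Fermi's real layout.
LOCAL_SIZE = 0x1000
SHARED_SIZE = 0x1000
GLOBAL_SIZE = 0x10000

SHARED_BASE = LOCAL_SIZE                 # local:  [0, SHARED_BASE)
GLOBAL_BASE = SHARED_BASE + SHARED_SIZE  # shared: [SHARED_BASE, GLOBAL_BASE)
GLOBAL_END = GLOBAL_BASE + GLOBAL_SIZE   # global: [GLOBAL_BASE, GLOBAL_END)

# Backing storage standing in for the three physical memories.
memories = {
    "local": bytearray(LOCAL_SIZE),
    "shared": bytearray(SHARED_SIZE),
    "global": bytearray(GLOBAL_SIZE),
}


def resolve(addr: int) -> tuple[str, int]:
    """Map a unified address to (memory space, offset within that space)."""
    if not 0 <= addr < GLOBAL_END:
        raise ValueError(f"address {addr:#x} is outside every window")
    if addr < SHARED_BASE:
        return "local", addr
    if addr < GLOBAL_BASE:
        return "shared", addr - SHARED_BASE
    return "global", addr - GLOBAL_BASE


def load(addr: int) -> int:
    """One load operation for every space: the address alone picks the memory."""
    space, offset = resolve(addr)
    return memories[space][offset]


def store(addr: int, value: int) -> None:
    """One store operation for every space, mirroring load."""
    space, offset = resolve(addr)
    memories[space][offset] = value


def sum_bytes(ptr: int, n: int) -> int:
    """Works on a 'pointer' into any space without knowing which space it is."""
    return sum(load(ptr + i) for i in range(n))
```

For example, `store(GLOBAL_BASE + 0x10, 3)` followed by `load(GLOBAL_BASE + 0x10)` returns 3, and `resolve(SHARED_BASE + 0x10)` gives `("shared", 0x10)`; the same `sum_bytes` works unchanged on local, shared or global addresses.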

The other big change to memory addressability is the size of the address space. G80 and GT200 had a 32-bit address space, which tops out at 4GB, but next year NVIDIA expects to see Tesla boards with over 4GB of GDDR5 on board. Fermi now supports 64-bit addresses, although the chip can physically address only 40 bits of memory, or 1TB. That should be enough for now.
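The capacity figures follow directly from powers of two, assuming byte-addressable memory and binary units (1GB = 2^30 bytes, 1TB = 2^40 bytes):

```latex
\begin{aligned}
2^{32}\ \text{B} &= 4\,294\,967\,296\ \text{B} = 4\ \text{GiB} \\
2^{40}\ \text{B} &= 1\,099\,511\,627\,776\ \text{B} = 1\ \text{TiB} = 256 \times 2^{32}\ \text{B} \\
2^{64}\ \text{B} &= 16\ \text{EiB}
\end{aligned}
```

So 40 physical address bits cover 256 times the 4GB ceiling of a 32-bit address space, while a full 64-bit address range would reach 16 EiB.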

Both the unified address space and 64-bit addressing are almost exclusively for the compute space at this point; consumer graphics cards won't need more than 4GB of memory for at least another couple of years. These changes were painful for NVIDIA to implement and ultimately contributed to Fermi's delay, but in NVIDIA's eyes they were necessary.

New ISA Changes Enable DX11, OpenCL and C++, Visual Studio Support

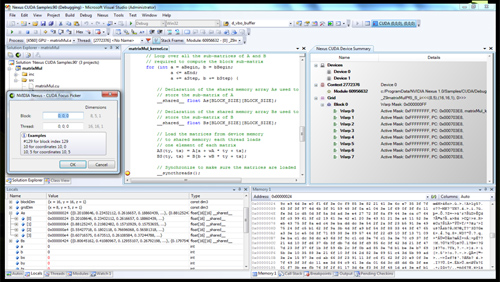

Now this is cool. NVIDIA is announcing Nexus (no, not the thing from Star Trek Generations) a visual studio plugin that enables hardware debugging for CUDA code in visual studio. You can treat the GPU like a CPU, step into functions, look at the state of the GPU all in visual studio with Nexus. This is a huge step forward for CUDA developers.

Nexus running in Visual Studio on a CUDA GPU

Simply enabling DX11 support is a big enough change for a GPU; AMD had to go through that with RV870. On top of that, Fermi implements a wide set of ISA changes aimed primarily at enabling C++ support. Virtual functions, new/delete and try/catch are all parts of C++, and all are enabled on Fermi.
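To see why virtual functions need ISA support, it helps to recall how C++ compilers commonly implement them: each class gets a table of function pointers (a vtable), and a virtual call loads the target from that table and then makes an indirect call whose destination is only known at run time. The Python sketch below models that mechanism with hypothetical names (`virtual_call`, `AREA_SLOT`, `NAME_SLOT`); it illustrates vtable dispatch in general, is not GPU code, and makes no claim about how Fermi itself implements it.

```python
import math
from typing import Callable, Dict, List, Tuple

# Hypothetical slot numbers: every "class" lays out its methods in the same order.
AREA_SLOT = 0
NAME_SLOT = 1

State = Dict[str, float]
VTable = List[Callable[[State], object]]


def circle_area(s: State) -> float:
    return math.pi * s["r"] ** 2


def square_area(s: State) -> float:
    return s["side"] ** 2


# One table per "class", shared by every object of that class.
CIRCLE_VTABLE: VTable = [circle_area, lambda s: "circle"]
SQUARE_VTABLE: VTable = [square_area, lambda s: "square"]


def virtual_call(obj: Tuple[VTable, State], slot: int):
    """Model of a virtual call: fetch the target from the object's table, then call it."""
    vtable, state = obj
    target = vtable[slot]  # load the function "address" from the table
    return target(state)   # indirect call: the target is only known at run time


shapes = [(CIRCLE_VTABLE, {"r": 1.0}), (SQUARE_VTABLE, {"side": 2.0})]
for shape in shapes:
    print(virtual_call(shape, NAME_SLOT), virtual_call(shape, AREA_SLOT))
```

The loop calls the same `virtual_call` on objects of different "classes" and gets different behavior; on real hardware that pattern compiles to an indirect branch, which a GPU ISA has to provide for virtual functions to run natively.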

415 Comments


SiliconDoc - Wednesday, September 30, 2009

No they did not post earnings, other than in the sense IN THE RED LOSSES called sales.

shotage - Wednesday, September 30, 2009

I'm not sure what your argument is, SiliconDuck... But maybe you should stop typing and go into hibernation to await the GT300's holy ascension from heaven! FYI: It's unhealthy to have shrines dedicated to silicon, dude. Get off the GPU cr@ck!!!

On a more serious note: Nvidia are good, ATI has gotten a lot better though.

I just bought a GTX260 recently, so I'm in no hurry to buy at the moment. I'll be eagerly waiting to see what happens when Nvidia actually has the product launch and not just some lame paper/promo launch.

SiliconDoc - Wednesday, September 30, 2009

My argument is I've heard the EXACT SAME geekfoot whine before, twice in fact. Once for G80, once for GT200, and NOW, again... Here is what the guy I responded to said:

" Nvidia is painting itself into a corner in terms of engineering and direction. As a graphical engine, ATI's architecture is both smaller, cheaper to manufacture and scales better simply by combining chips or expanding # of units as mfg tech improves.. As a compute engine, Intel's Larabee will have unmatched parallel thread processing horsepower. What is Nvidia thinking trying to pass on this huge, monolithic albatross? It will lose on both fronts. "

---

MY ARGUMENT IS: A red raging rooster who just got their last two nvidia destruction calls WRONG for G80 and GT200 (the giant brute force non-profit expensive blah blah blah) is likely, to the tune of 100%, TO BE GETTING THIS CRYING SPASM WRONG AS WELL.

---

When there is clear evidence Nvidia has been a marketing genius (it's called REBRANDING by the bashing red rooster crybabies) and has a billion bucks to burn a year on R&D, the argument HAS ALREADY BEEN MADE FOR ME.

-----

The person you should be questioning is the opinionated raging nvidia disser, who by all standards jives out an arrogant WHACK JOB on nvidia, declaring DUAL defeat...

QUOTETH ! "What is Nvidia thinking trying to pass on this huge, monolithic albatross? It will lose on both fronts. "

---

LOL, that huge monolithic albatross COMMANDS $475,000.00 for 4 of them in some TESLA server for the collegiate geeks and freaks all over the world. I don't suppose there is "loss on that front," do you?

ROFLMAO

Who are you questioning and WHY? Why aren't you seeing clearly? Did the reds already brainwash you? Have the last two gigantic expensive cores "destroyed nvidia" as they predicted?

--

In closing "GET A CLUE".

shotage - Wednesday, September 30, 2009

Found my clue.. I hope you get help in time: http://www.physorg.com/news171819640.html

SiliconDoc - Thursday, October 1, 2009

You are your clue, and here is your buddy, your duplicate: "What is Nvidia thinking trying to pass on this huge, monolithic albatross? It will lose on both fronts."

Now, I quite understand denial is a favorite pastime of losers, and you've effectively joined the red club. Let me convert for you.

"What is Ati thinking trying to pass on this overlong, heat-soaked, barely better afterthought? It will lose on its only front."

-there you are schmucko, a fine example of real misbehavior you pass-

AaronJD - Wednesday, September 30, 2009

While I definitely prefer the $200-$300 space that ATI released 48xx at, it seems like $400 is the magic number for single GPUs. Anything much higher than that is in multi-GPU space, where you can get away with a higher price-to-performance ratio.

If Nvidia can hit the market with a well-engineered card at $400 or so that is easily pared down, then they can hit a market ATI would have trouble scaling to, while being able to easily re-badge gimped silicon to meet whatever market segment they can best compete in with whatever quality yield they get.

Regarding Larrabee, I think Nvidia's strategy is to just get in the door first. To compete against Intel's first offering they don't need to do something special; they just need to get the right feature set out there. If they can get developers writing for their hardware ASAP, Tesla will have done its job.

Zingam - Thursday, October 1, 2009

Until that thing from NVIDIA comes out, AMD has time to work on a response, and if they are not lazy or stupid they'll have a match for it.

So in any case I believe that things are going to get more interesting than ever in the next 3 years!!!

:D Can't wait to hear what DirectX 12 will be like!!!

My guess is that in 5 years we will have truly new CPUs that do what GPUs + CPUs are doing together today.

Perhaps we'll come to the point where we get blade-like home PCs. If you want more power you just shove in another board. Perhaps PC architecture will change completely once software gets ready for SMP.

chizow - Wednesday, September 30, 2009

Nvidia is also launching Nexus at their GDC this week, a plug-in for Visual Studio that will basically integrate all of these various APIs under an industry-standard IDE. That's the launching point, imo, for cGPU, Tesla and everything else Nvidia is hoping to accomplish outside of the 3D gaming space with Fermi.

Making their hardware more accessible to create those next killer apps is what's been missing in the past with GPGPU and CUDA. Now it'll all be cGPU and transparent in your workflow within Visual Studio.

As for the news of Fermi as a gaming GPU, very excited on that front, but not all that surprised really. Nvidia was due for another home run and it looks like Fermi might just clear the ball park completely. Tough times ahead for AMD, but at least they'll be able to enjoy the 5850/5870 success for a few months.

ilkhan - Wednesday, September 30, 2009

If it plays games faster/prettier at the same or better price, who cares what the architecture looks like?

On a similar note, if the die looks like that first image (which is likely), chopping it to smaller price points looks incredibly easy.

papapapapapapapababy - Wednesday, September 30, 2009

"Architecturally, there aren't huge lessons to be learned from RV770" SNIF SNIF BS!

"ATI's approach is much more cautious"

more like "ATI's approach is much more FOCUSED"

(eyes on the ball, people)

"While Fermi will play games, it is designed to be a general purpose compute machine."

nvidia is starting to sound like Sony: "the ps3 is not a console, it's a supercomputer @ HD movie player, it only does everything." Guess what? People wanted to play games. Nintendo (the focused company) did that: games, not movies, not HD graphics, games, motion control. Sony, like nvidia here, didn't have its eyes on the ball.