NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in GPUs

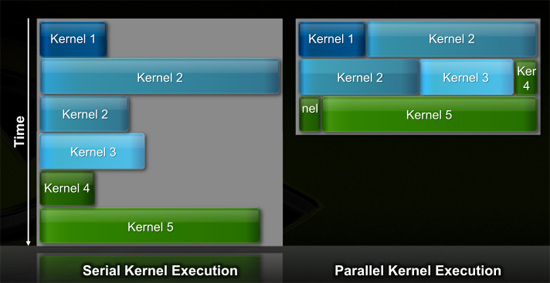

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.
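To make the idea concrete, here is a minimal sketch of the kernel pattern in plain Python. It is not CUDA: the names `saxpy_kernel` and `launch` are hypothetical, and the loop only stands in, sequentially, for what a GPU would run as many parallel threads.

```python
def saxpy_kernel(i, a, x, y, out):
    """Work done by one 'thread': a single element of a*x + y."""
    out[i] = a * x[i] + y[i]


def launch(kernel, n, *args):
    """Run the kernel once per index.

    On a GPU these n invocations would execute in parallel across the SMs;
    this sequential loop is only a stand-in (an assumption of the sketch).
    """
    for i in range(n):
        kernel(i, *args)


x = [1.0, 2.0, 3.0, 4.0]
y = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(x)

# Same small function, applied to every element of the dataset.
launch(saxpy_kernel, len(x), 2.0, x, y, out)
print(out)
```

The point of the pattern is that the kernel body never loops over the dataset itself; each invocation only knows its own index.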

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. In GT200/G80, the entire chip could only be working on one kernel at a time.

When dealing with graphics this isn't usually a problem. There are millions of pixels to render. The problem is wider than the machine. But as you start to do more general purpose computing, not all kernels are going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, then those SMs just went idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel across the chip. Because Fermi is more than twice the size of GT200, the likelihood of idle SMs goes up tremendously, so NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
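A toy model helps show why this matters. The sketch below is an illustration only: the SM count, the kernel widths, and the assumption that every kernel takes exactly one time step are all made up, and the greedy packing is not a description of NVIDIA's actual dispatch logic.

```python
def serial_idle(num_sms, kernel_widths):
    """One kernel at a time: SMs the kernel cannot fill sit idle that step."""
    return sum(num_sms - min(w, num_sms) for w in kernel_widths)


def concurrent_steps(num_sms, kernel_widths):
    """Greedy in-order packing: kernels share a time step while SMs remain."""
    steps, free = 0, 0
    for w in kernel_widths:
        w = min(w, num_sms)
        if w > free:          # does not fit in the current step, open a new one
            steps += 1
            free = num_sms
        free -= w
    return steps


def concurrent_idle(num_sms, kernel_widths):
    """Idle SM-steps when kernels may run side by side."""
    used = sum(min(w, num_sms) for w in kernel_widths)
    return concurrent_steps(num_sms, kernel_widths) * num_sms - used


# Hypothetical workload: four kernels needing 4, 4, 8 and 16 SMs on a 16-SM chip.
widths = [4, 4, 8, 16]
print(serial_idle(16, widths), concurrent_idle(16, widths))
```

In this made-up workload the serial case wastes 32 SM-steps over four steps, while the concurrent case packs the three narrow kernels into one step and wastes none.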

Application switch time (moving between graphics and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU accelerated physics (or PhysX, great ;)…).

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
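As a rough illustration of what overlapping the two directions buys, the sketch below compares total transfer time for a stream of batches. The function names and the timings are hypothetical, units are arbitrary, and kernel execution time is ignored entirely.

```python
def serial_time(n, up, down):
    """Uploads and downloads take turns on the link."""
    return n * (up + down)


def overlapped_time(n, up, down):
    """Batch i+1 can upload while batch i downloads.

    A batch's download still waits for its own upload and for the
    previous download to finish.
    """
    upload_done = 0.0
    download_done = 0.0
    for _ in range(n):
        upload_done += up
        download_done = max(upload_done, download_done) + down
    return download_done


# Four batches, 2 time units up and 3 down each (made-up numbers).
print(serial_time(4, 2, 3), overlapped_time(4, 2, 3))
```

With these numbers the serial link needs 20 time units and the overlapped one 14; the gain grows with the number of batches and is largest when upload and download times are similar.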

415 Comments

Griswold - Wednesday, September 30, 2009

Well, you have to consider that nvidia is getting between a rock and a hard place. The PC gaming market is shrinking. There's not much point in making desktop chipsets anymore... they have to shift focus (and I'm sure they will focus) on new things like GPGPU. I won't be surprised if GT300 won't be the super awesome gamer GPU of choice so many people expect it to be. And perhaps, the one after GT300 will be even less impressive for gaming, regardless of what they just said about making humongous chips for the high-end segment.

SiliconDoc - Wednesday, September 30, 2009

Gee nvidia is between a rock and a hard place, since they have an OUT, and ATI DOES NOT. lol

That was a GREAT JOB focusing on the wrong player who is between a rock and a hard place, and that player would be RED ROOSTER ATI !

--

no chipsets

no chance at TESLA sales in the billions to colleges and government and schools and research centers all over the world....

--

buh bye ATI ! < what you should have actually "speculated"

...

But then, we know who you are and what you're about -

TELLING THE EXACT OPPOSITE OF THE TRUTH, ALL FOR YOUR RED GOD, ATI !

--

silverblue - Thursday, October 1, 2009

When nVidia actually sends out Fermi samples for previews/reviews, only then will you know how good it is. We all want to see it because we want competition and lower prices (and maybe some of us will buy one or more, as well!).

Until then, keep your fanboy comments to yourself.

SiliconDoc - Thursday, October 1, 2009

No silverblue, that is in fact your problem, not mine, as you won't know anything, till you're shown a lie or otherwise, and it's shoved into your tiny processor for your personal acceptance.

The fact remains, red fanboy raver Griswold blew it, and I pointed out exactly WHY.

The fact that you cry about it, because you group stupid dummies keep blowing nearly every statement you make, sure isn't my fault.

silverblue - Thursday, October 1, 2009

I wonder if you do actually read posts before you reply to them.

SiliconDoc - Thursday, October 1, 2009

Take your own advice, you pathetic hypocrit.

ClownPuncher - Thursday, October 1, 2009

Its actually "hypocrite".

SiliconDoc - Friday, October 2, 2009

It's "it's", you pathetic hypocrit.

silverblue - Friday, October 2, 2009

It's "hypocrite", you pathetic hypocrite.

chizow - Wednesday, September 30, 2009

Nvidia is simply hedging their bets and expanding their horizons. They've still managed to offer the fastest GPUs per product cycle/generation and they're clearly far more advanced than AMD when it comes to GPGPU in both theory and practice.

Jensen's keynote tipped his hat numerous times to Nvidia's roots as a GPU company that designed chips to run 3D video games, but the focus of his presentation was clearly to sell it as more than that, as a cGPU capable of incredible computational ability.