NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

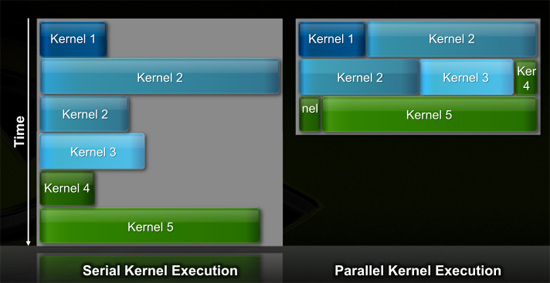

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.
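As a rough illustration of that definition, the sketch below mimics the programming model in plain Python. It is not GPU code: `saxpy_kernel` and `launch` are hypothetical names, and the loop runs the per-element work one item at a time where a real GPU would spread it across its SMs in parallel.

```python
def saxpy_kernel(i, a, x, y, out):
    """Work done by one logical thread: a single element of a*x + y."""
    out[i] = a * x[i] + y[i]


def launch(kernel, num_threads, *args):
    """Stand-in for a GPU launch: run the kernel once per thread index.

    A real GPU executes these invocations in parallel; this loop runs
    them sequentially, purely to show the "same task, many elements" idea.
    """
    for i in range(num_threads):
        kernel(i, *args)


x = [1.0, 2.0, 3.0, 4.0]
y = [10.0, 20.0, 30.0, 40.0]
out = [0.0] * len(x)
launch(saxpy_kernel, len(x), 2.0, x, y, out)
print(out)
```

The point is only that the kernel body describes the work for one element, and the launch decides how many copies of it run.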

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. In GT200/G80, the entire chip could only be working on one kernel at a time.

When dealing with graphics this isn't usually a problem. There are millions of pixels to render. The problem is wider than the machine. But as you start to do more general purpose computing, not all kernels are going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, then those SMs just went idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel to the entire system. With Fermi at more than twice the size of GT200, the likelihood of idle SMs goes up tremendously, so NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
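To put numbers on the idle-SM argument, here is a toy occupancy model. It is a sketch, not a description of NVIDIA's actual dispatch logic: the SM count of 16 and the kernel widths are made-up figures, `serial_utilization` and `concurrent_utilization` are hypothetical helpers, every kernel is assumed to run for exactly one equal-length time slice, and concurrent kernels are packed first-fit onto whatever SMs are free.

```python
def serial_utilization(kernel_widths, num_sms):
    """One kernel at a time: each kernel gets a whole time slice to itself."""
    if not kernel_widths:
        return 0.0
    busy = sum(min(w, num_sms) for w in kernel_widths)
    return busy / (num_sms * len(kernel_widths))


def concurrent_utilization(kernel_widths, num_sms):
    """Several kernels may share a time slice if their SM demands fit."""
    if not kernel_widths:
        return 0.0
    free_per_slice = []  # SMs still free in each time slice
    for w in kernel_widths:
        w = min(w, num_sms)
        for i, free in enumerate(free_per_slice):
            if free >= w:  # first slice with enough free SMs
                free_per_slice[i] = free - w
                break
        else:
            free_per_slice.append(num_sms - w)  # open a new time slice
    busy = sum(min(w, num_sms) for w in kernel_widths)
    return busy / (num_sms * len(free_per_slice))


# Assumed workload: four narrow kernels on a hypothetical 16-SM machine.
widths = [4, 6, 3, 2]
print(f"serial:     {serial_utilization(widths, 16):.0%}")
print(f"concurrent: {concurrent_utilization(widths, 16):.0%}")
```

With these assumed numbers the one-kernel-at-a-time machine keeps about 23% of its SM slots busy, while the concurrent version packs all four kernels into one slice and reaches about 94%. Real workloads will differ, but the direction of the effect is the one described above.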

Application switch time (moving between graphics and CUDA modes) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than on GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU-accelerated physics (or PhysX, great ;)…).

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
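The gain from overlapping the two directions can be estimated with simple arithmetic. The sketch below is a back-of-the-envelope model rather than a measurement: the transfer sizes and the 5 GB/s per-direction bandwidth are assumed figures, the function names are hypothetical, and it assumes the link sustains full bandwidth in both directions at once.

```python
def transfer_time(num_bytes, bandwidth_bytes_per_s):
    """Time to move num_bytes in one direction at a fixed bandwidth."""
    return num_bytes / bandwidth_bytes_per_s


def serial_time(upload_bytes, download_bytes, bandwidth):
    """Transfers happen one after the other: the times add up."""
    return (transfer_time(upload_bytes, bandwidth)
            + transfer_time(download_bytes, bandwidth))


def overlapped_time(upload_bytes, download_bytes, bandwidth):
    """Both directions run at once: the longer transfer sets the time."""
    return max(transfer_time(upload_bytes, bandwidth),
               transfer_time(download_bytes, bandwidth))


# Assumed example: 256 MiB each way over a 5 GB/s-per-direction link.
size = 256 * 1024 * 1024
bandwidth = 5e9
print(f"serial:     {serial_time(size, size, bandwidth) * 1e3:.1f} ms")
print(f"overlapped: {overlapped_time(size, size, bandwidth) * 1e3:.1f} ms")
```

When the upload and download are the same size, overlapping halves the total transfer time in this model; when one direction dominates, the saving shrinks to the size of the smaller transfer.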

415 Comments

Zingam - Thursday, October 1, 2009

No no! This is just on paper! When will we see it for real!! Oh... Q2-3-4 next year! :)

So you cannot claim they have the better thing because they don't have it yet! And don't forget next year we might have the head-smashing Larrabee!

:)

Who knows!!! I think you are way too biased and not objective when you type!

chizow - Thursday, October 1, 2009

Heheh if Q2 is what you want to believe when you cry yourself to sleep every night, so be it. ;)

Seriously though, it's looking like late Q4 or early Q1 and it's undoubtedly meant for one single purpose: to destroy the world of ATI GPUs.

As for Larrabee lol...check out some of the IDF news about it. Even Anand hints at Laughabee's failure in his article here. It may compete as a GPGPU extension of x86, but not as a traditional 3D raster, not even close.

SiliconDoc - Thursday, October 1, 2009

Gosh you'd be correct except here is the FERMI: http://www.fudzilla.com/content/view/15762/1/

There it is bubba. You blew your yap wide open in ignorance and LOST.

Good job, you've got plenty of company.

ClownPuncher - Thursday, October 1, 2009

Wow, a video card! On top of that PCB could be cat shit for all we know. The card does not exist, because I can't touch it, I can't buy it, and I can't play games on it.

Also, the fact that you seem to get all of your info from Fudzilla speaks volumes. All of your syphilis-induced mad ramblings are tiresome.

Lifted - Thursday, October 1, 2009

I see what appears to be a PCB with some plastic attached, and possibly a fan in there as well. Yawn.

ksherman - Wednesday, September 30, 2009

Really like these kinds of leaps in computing power, I find them fascinating. A shame that it seems nVidia is pulling a bit away from the mainstream graphics segment, but I suppose that means that the new cards from ATI/AMD are the undisputed choice for a graphics card in the next few months. 5850 it is!

fri2219 - Wednesday, September 30, 2009

For the love of Strunk and White, stop murdering English in that manner - it detracts from the text buried between banner ads.

Sunday Ironfoot - Wednesday, September 30, 2009

nVidia have invented a new way to fry eggs, just crack one open on top of their GPU and play some Crysis. :-)

SiliconDoc - Wednesday, September 30, 2009

Let's crack it on page 4. A more efficient architecture: max threads in flight. Although the DOWNSIDE is sure to be mentioned FIRST as in "not as many as GT200", and the differences mentioned later, the hidden conclusion with the dissing included is apparent.

Let's draw it OUT.

---

What should have been said 1st:

Nvidia's new core is 4 times more efficient with threads in flight, so it reduces the number of those from 30,720 to 24,576, maintaining an impressive INCREASE.

---

Yes, now the simple calculation:

GT200: 30,720 x 2 = 61,440

GT300: 24,576 x 4 = 98,304

at the bottom we find second to last line the TRUTH, before the SLAM on the gt200 ends the page:

" After two clocks, the dispatchers are free to send another pair of half-warps out again. As I mentioned before, in GT200/G80 the entire SM was tied up for a full 8 cycles after an SFU issue."

4 to 1, 4 times better, 1/4th the clock cycles needed

" The flexibility is nice, or rather, the inflexibility of GT200/G80 was horrible for efficiency and Fermi fixes that. "

LOL

With a 4x increase in this core design area, first we're told GT200 "had more", then we're told Fermi is faster in terms that allow > the final tale, GT200 sucks.

--

I just LOVE IT, I bet nvidia does as well.

tamalero - Thursday, October 1, 2009

On paper everything looks amazing, just like the R600 did in its time, and the Nvidia FX series as well. So please, just shut up and stop spreading your FUD until there's real information, real benches, real useful stuff.