NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

Architecting Fermi: More Than 2x GT200

NVIDIA keeps referring to Fermi as a brand new architecture, while calling GT200 (and RV870) bigger versions of their predecessors with a few added features. Marginalizing the effort required to build any multi-billion-transistor chip is just silly; to an extent, all of these GPUs have been significantly redesigned.

At a high level, Fermi doesn't look much different from a bigger GT200. NVIDIA is committed to its scalar architecture for the foreseeable future. In fact, its one-op-per-clock-per-core philosophy comes from a basic desire to execute single-threaded programs as quickly as possible. Remember, these are compute and graphics chips. NVIDIA sees no benefit in building a 16-wide or 5-wide core as the basis of its architectures, although we may see a bit more flexibility at the core level in the future.

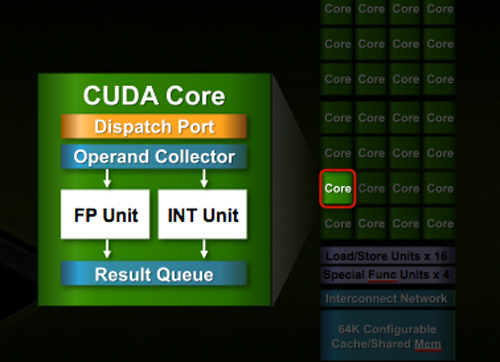

Despite the similarities, large parts of the architecture have evolved. The redesign went as low as the core level. NVIDIA used to call these SPs (Streaming Processors); now it calls them CUDA Cores. I'm going to call them cores.

All of the processing done at the core level now follows IEEE spec. That's IEEE 754-2008 for floating point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated because the hardware could only do 24-bit integer muls. That silliness is now gone. Fused Multiply Add is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be industry-standards compliant and give you the results that you'd expect.
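To make both changes concrete, here is a small Python sketch; it is our illustration, not NVIDIA's hardware or compiler behavior. The first part shows one plausible way to build a full 32-bit integer multiply out of a 24-bit multiplier: split each operand into 16-bit halves so every partial product fits. The hypothetical `mul24` helper is modeled loosely on CUDA's `__umul24` intrinsic (low 24 bits of each input, low 32 bits of the product). The second part shows why fused multiply-add matters: a separate multiply and add rounds twice, while FMA rounds once. Python floats are 64-bit, so this uses double precision, and the hypothetical `fma_exact` emulates the single rounding with exact rational arithmetic.

```python
from fractions import Fraction

MASK24 = (1 << 24) - 1
MASK32 = (1 << 32) - 1

def mul24(x, y):
    """Hypothetical model of a 24-bit multiplier: only the low 24 bits of each
    input are used, and the low 32 bits of the product are returned."""
    return ((x & MASK24) * (y & MASK24)) & MASK32

def mul32_via_mul24(a, b):
    """Low 32 bits of a*b for unsigned 32-bit a, b, built only from mul24.
    Each 16-bit half fits in a 24-bit input, and each 16x16 partial product
    fits in 32 bits, so nothing is lost before the final mod 2**32 wrap."""
    a_lo, a_hi = a & 0xFFFF, a >> 16
    b_lo, b_hi = b & 0xFFFF, b >> 16
    # a_hi*b_hi is shifted by 32 bits and vanishes mod 2**32; of the cross
    # terms, only their low 16 bits survive the << 16.
    cross = (mul24(a_hi, b_lo) + mul24(a_lo, b_hi)) & 0xFFFF
    return (mul24(a_lo, b_lo) + (cross << 16)) & MASK32

def mul_then_add(a, b, c):
    """Two roundings: a*b is rounded to a double, then the sum is rounded again."""
    return a * b + c

def fma_exact(a, b, c):
    """One rounding: Fraction holds a*b + c exactly, and converting the
    result back to float rounds it once."""
    return float(Fraction(a) * Fraction(b) + Fraction(c))

print(mul24(1 << 24, 3))                       # 0: bit 24 and above are dropped
print(mul32_via_mul24(0xFFFFFFFF, 0xFFFFFFFF)) # 1, same as (2**32 - 1)**2 mod 2**32

a, b, c = 1.0 + 2.0**-30, 1.0 - 2.0**-30, -1.0
print(mul_then_add(a, b, c))  # 0.0: a*b = 1 - 2**-60 rounds to 1.0 first
print(fma_exact(a, b, c))     # -2**-60, roughly -8.67e-19
```

The FMA case is the classic one: the tiny term that survives a single rounding is wiped out entirely when the product is rounded on its own first.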

Double precision floating point (FP64) performance is improved tremendously. Peak 64-bit FP execution rate is now 1/2 of 32-bit FP; it used to be 1/8 (AMD's is 1/5). Wow.

NVIDIA isn't disclosing clock speeds yet, so we don't know exactly what that rate works out to.
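Without a clock speed we can only show how the number would be computed. The sketch below assumes the common convention that each core issues one op per clock and that a fused multiply-add counts as two floating point operations; the 1.5GHz clock is a made-up placeholder, not a Fermi spec, and `peak_gflops` is a hypothetical helper.

```python
def peak_gflops(cores, clock_ghz, fp64_ratio):
    """Peak (FP32, FP64) GFLOPS, assuming one FMA (2 flops) per core per clock."""
    fp32 = cores * 2 * clock_ghz
    return fp32, fp32 * fp64_ratio

HYPOTHETICAL_CLOCK_GHZ = 1.5  # placeholder only; NVIDIA has not disclosed clocks

sp, dp = peak_gflops(512, HYPOTHETICAL_CLOCK_GHZ, 1 / 2)
print(sp, dp)             # 1536.0 768.0
print((1 / 2) / (1 / 8))  # 4.0: FP64 share of FP32 peak versus the old 1/8 ratio
```

Whatever the final clock turns out to be, the FP64 figure stays at half the FP32 figure, which is four times the share that the old 1/8 ratio allowed.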

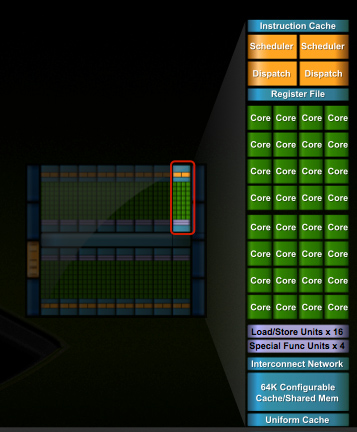

In G80 and GT200, NVIDIA grouped eight cores into what it called an SM (Streaming Multiprocessor). With Fermi, you get 32 cores per SM.

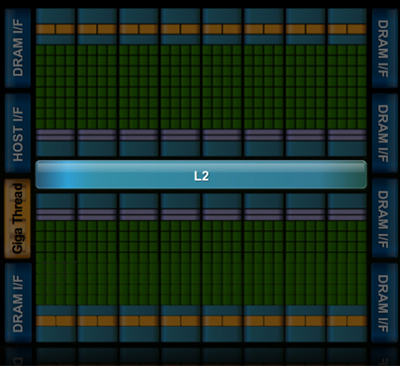

The high-end single-GPU Fermi configuration will have 16 SMs. That's fewer SMs than GT200 (30), but more cores: 512 to be exact. Fermi has more than twice the core count of the GeForce GTX 285.

| | Fermi | GT200 | G80 |
|---|---|---|---|
| Cores | 512 | 240 | 128 |
| Memory Interface | 384-bit GDDR5 | 512-bit GDDR3 | 384-bit GDDR3 |
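The core totals in the table follow directly from SM count times cores per SM. In this quick check, the per-SM figures (8 for G80/GT200, 32 for Fermi) and Fermi's 16 SMs come from the text; the GT200 and G80 SM counts are simply derived by dividing their core totals by 8.

```python
# SM count and cores per SM for each chip.
chips = {
    "G80":   {"sms": 16, "cores_per_sm": 8},
    "GT200": {"sms": 30, "cores_per_sm": 8},
    "Fermi": {"sms": 16, "cores_per_sm": 32},
}

def total_cores(chip):
    """Total cores = number of SMs * cores in each SM."""
    return chip["sms"] * chip["cores_per_sm"]

for name, chip in chips.items():
    print(name, total_cores(chip))

ratio = total_cores(chips["Fermi"]) / total_cores(chips["GT200"])
print(f"{ratio:.2f}")  # 2.13: "more than twice" the GTX 285's core count
```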

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in Fermi it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled.
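Put another way, the ratio of cores to SFU pipelines in each SM doubles. The arithmetic below uses only the per-SM figures quoted above and ignores clocks and scheduling, so it is an on-paper comparison rather than a performance prediction.

```python
# Per-SM resources as described in the text.
gt200 = {"cores": 8, "sfu_pipes": 2}
fermi = {"cores": 32, "sfu_pipes": 4}

core_growth = fermi["cores"] / gt200["cores"]         # general math per SM
sfu_growth = fermi["sfu_pipes"] / gt200["sfu_pipes"]  # SFU pipelines per SM
cores_per_sfu = {
    name: chip["cores"] / chip["sfu_pipes"]
    for name, chip in (("GT200", gt200), ("Fermi", fermi))
}
print(core_growth, sfu_growth, cores_per_sfu)  # 4.0 2.0 {'GT200': 4.0, 'Fermi': 8.0}
```

On paper, code heavy in transcendental math therefore gains less per SM than code dominated by ordinary multiplies and adds.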

The infamous "missing MUL" has been pulled out of the SFU, so we shouldn't have to quote separate peak single- and dual-issue arithmetic rates for NVIDIA GPUs any longer.

NVIDIA organizes these SMs into TPCs, but the exact hierarchy isn't being disclosed today. Given the launch's Tesla focus, we also don't know specifics on ROPs, texture filtering or anything else related to 3D graphics. Boo.

A Real Cache Hierarchy

Each SM in GT200 had 16KB of shared memory that could be used by all of its cores. This wasn't a cache but software-managed memory: the application had to knowingly move data in and out of it. The benefit here is predictability; you always know whether something is in shared memory because you put it there. The downside is that it doesn't work so well if the application's memory accesses aren't very predictable.
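A toy model helps show what "knowingly move data in and out" means. This Python sketch is not CUDA and not how the hardware works internally; `Scratchpad` and `tiled_sum` are hypothetical names. The program itself decides which tile to copy into a fixed 16KB buffer (4,096 four-byte values, matching GT200's per-SM shared memory) and then computes only from that buffer.

```python
class Scratchpad:
    """Toy software-managed memory: a fixed-size buffer that the program
    must fill explicitly. Nothing is ever loaded behind its back."""

    def __init__(self, capacity_bytes, elem_bytes=4):
        self.slots = capacity_bytes // elem_bytes
        self.buf = []

    def load(self, values):
        """Explicit copy-in step; fails if the tile is larger than the buffer."""
        if len(values) > self.slots:
            raise MemoryError("tile does not fit in shared memory")
        self.buf = list(values)

def tiled_sum(data, shared):
    """Sum `data` tile by tile, staging each tile through the scratchpad."""
    total = 0
    for start in range(0, len(data), shared.slots):
        shared.load(data[start:start + shared.slots])
        total += sum(shared.buf)  # every read is served from the staged tile
    return total

shared = Scratchpad(16 * 1024)  # 16KB, as on a GT200 SM
print(shared.slots)                           # 4096
print(tiled_sum(list(range(10000)), shared))  # 49995000
```

This works because a linear sum has a perfectly predictable access pattern: the program knows which tile it needs next before it needs it.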

Branch-heavy applications and many of the general purpose compute applications that NVIDIA is going after need a real cache. So with Fermi at 40nm, NVIDIA gave them one.
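By contrast, a cache decides for itself what to keep, so data-dependent accesses can still hit without the program staging anything. The toy least-recently-used cache below is a hypothetical illustration, not Fermi's actual replacement policy; the 128-byte line size and four-line capacity are chosen only to keep the example small. The fixed index list stands in for addresses that would normally only be known at runtime.

```python
from collections import OrderedDict

class LRUCache:
    """Toy hardware-managed cache: lines are kept automatically, and the
    least recently used line is evicted when capacity is exceeded."""

    def __init__(self, num_lines, line_bytes=128):
        self.num_lines = num_lines
        self.line_bytes = line_bytes
        self.lines = OrderedDict()  # line tag -> present, ordered by recency
        self.hits = 0
        self.misses = 0

    def access(self, addr):
        tag = addr // self.line_bytes
        if tag in self.lines:
            self.lines.move_to_end(tag)  # mark as most recently used
            self.hits += 1
        else:
            self.misses += 1
            self.lines[tag] = True
            if len(self.lines) > self.num_lines:
                self.lines.popitem(last=False)  # evict least recently used

# Stand-in for a data-dependent pattern, such as following indices read at runtime.
next_index = [5, 0, 7, 2, 5, 0, 7, 2]
cache = LRUCache(num_lines=4)
for i in next_index:
    cache.access(i * 4096)  # each index falls on its own 128-byte line
print(cache.hits, cache.misses)  # 4 4
```

The first pass misses on every access, and the repeat pass hits on all four lines, with no explicit copy-in step anywhere in the program.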

Attached to each SM is 64KB of configurable memory. It can be partitioned as 16KB/48KB or 48KB/16KB; one partition is shared memory, the other partition is an L1 cache. The 16KB minimum partition means that applications written for GT200 that require 16KB of shared memory will still work just fine on Fermi. If your app prefers shared memory, it gets 3x the space in Fermi. If your application could really benefit from a cache, Fermi now delivers that as well. GT200 did have an L1 texture cache (one per TPC), but the cache was mostly useless when the GPU ran in compute mode.
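The partition rules above are simple enough to state as code. This is a hypothetical model of the two allowed splits, not NVIDIA's configuration interface, which isn't described here; `partition` and `fits` are made-up names.

```python
TOTAL_KB = 64                 # configurable memory per Fermi SM
ALLOWED_SHARED_KB = (16, 48)  # the two splits described: 16/48 or 48/16
GT200_SHARED_KB = 16          # shared memory per GT200 SM

def partition(shared_kb):
    """Return (shared_kb, l1_kb) for one SM's 64KB of configurable memory."""
    if shared_kb not in ALLOWED_SHARED_KB:
        raise ValueError(f"shared memory must be one of {ALLOWED_SHARED_KB} KB")
    return shared_kb, TOTAL_KB - shared_kb

def fits(required_shared_kb, shared_kb):
    """Does a kernel needing `required_shared_kb` fit in the chosen split?"""
    return required_shared_kb <= partition(shared_kb)[0]

print(partition(48))                        # (48, 16): shared-memory-heavy split
print(partition(16))                        # (16, 48): cache-heavy split
print(fits(GT200_SHARED_KB, 16))            # True: GT200-era code still fits
print(partition(48)[0] // GT200_SHARED_KB)  # 3: "3x the space"
```

Even the cache-heavy split keeps 16KB of shared memory, which is why existing GT200 code keeps working.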

The entire chip shares a 768KB L2 cache. The result is a reduced penalty for atomic memory operations: Fermi is 5 to 20x faster here than GT200.
