NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in GPUs

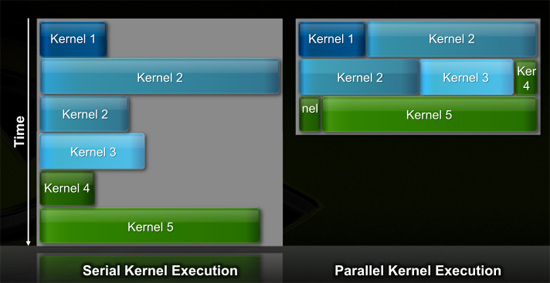

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. In GT200/G80, the entire chip could only be working on one kernel at a time.

When dealing with graphics this isn't usually a problem. There are millions of pixels to render. The problem is wider than the machine. But as you start to do more general purpose computing, not all kernels are going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, then those SMs just went idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel to the entire system. With the chip at more than twice the size of GT200, the likelihood of idle SMs would otherwise have gone up tremendously. NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
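To put rough numbers on the idle-SM problem, here is a toy model in Python. It is a sketch under loud assumptions, not a description of NVIDIA's actual scheduler: every kernel is reduced to "the number of SMs it can fill for one time step", the chip is given 16 SMs, and the kernel widths are made up for illustration. The function names are hypothetical as well.

```python
# Toy model only: each kernel is reduced to the number of SMs it can fill
# for one time step. NUM_SMS and the kernel widths are illustrative.
NUM_SMS = 16


def serial_steps(widths):
    """One kernel owns the whole chip per step (GT200/G80-style dispatch)."""
    return len(widths)


def concurrent_steps(widths, num_sms=NUM_SMS):
    """Pack kernels, in submission order, into a step until the SMs run out."""
    steps = 0
    free = 0  # SMs still unused in the current step
    for width in widths:
        if not 0 < width <= num_sms:
            raise ValueError("each kernel must need between 1 and num_sms SMs")
        if width > free:  # does not fit: start a new step with an empty chip
            steps += 1
            free = num_sms
        free -= width
    return steps


def utilization(widths, steps, num_sms=NUM_SMS):
    """Fraction of SM-steps that did useful work."""
    return sum(widths) / (num_sms * steps)


kernels = [4, 4, 8, 16, 2, 6]  # hypothetical kernel widths, in SMs
serial = serial_steps(kernels)
concurrent = concurrent_steps(kernels)
print(f"serial:     {serial} steps, {utilization(kernels, serial):.0%} busy")
print(f"concurrent: {concurrent} steps, {utilization(kernels, concurrent):.0%} busy")
```

With these invented widths, one-kernel-at-a-time dispatch needs six steps and leaves the machine less than half busy, while packing narrow kernels side by side needs three. Real kernels differ in duration as well as width, so treat this as an intuition pump rather than a performance estimate.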

Application switch time (moving between graphics and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU accelerated physics (or PhysX, great ;)…).

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
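The benefit of overlapping the two directions is simple arithmetic, sketched below in Python. The assumptions are mine, not NVIDIA's: the link is treated as full duplex with the same bandwidth in each direction, and the 5 GB/s figure and buffer sizes are hypothetical placeholders.

```python
def transfer_seconds(gigabytes, gb_per_second):
    """Time to move a buffer in one direction at a fixed bandwidth."""
    return gigabytes / gb_per_second


def serial_total(upload_s, download_s):
    """Transfers run back to back, so their times add."""
    return upload_s + download_s


def overlapped_total(upload_s, download_s):
    """Transfers run at the same time, so the longer one sets the total."""
    return max(upload_s, download_s)


LINK_GB_PER_S = 5.0  # assumed per-direction bandwidth, for illustration only
upload = transfer_seconds(0.5, LINK_GB_PER_S)     # CPU->GPU, hypothetical 0.5 GB
download = transfer_seconds(0.25, LINK_GB_PER_S)  # GPU->CPU, hypothetical 0.25 GB

print(f"serial:     {serial_total(upload, download) * 1000:.0f} ms")
print(f"overlapped: {overlapped_total(upload, download) * 1000:.0f} ms")
```

In this idealized model the overlapped case can never be worse than the serial one, and the saving is largest when the two transfers are about the same size.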

415 Comments


SiliconDoc - Wednesday, September 30, 2009 - link

I'm sure Anand brought it out of him with his bias. Already on page one, we see the UNFAIR comparison to RV870, and after wailing Fermi "not double the bandwidth" - we get ZERO comparison, because of course, ATI loses BADLY.

Let me help:

NVIDIA : 240 G bandwidth

ati : 153 G bandwidth

------------------------nvidia

---------------ati

There's the bandwidth comparison, that the biased author couldn't bring himself to state. When ati LOSES, the red fans ALWAYS make NO CROSS COMPANY comparison.

Instead it's "nvidia relates to it's former core as ati relates to it's former core - so then "amount of improvement" "within in each company" can be said to "be similar" while the ACTUAL STAT is "OMITTED !

---

Congratulations once again for the immediate massive bias. Just wonderful.

omitted bandwith chart below, the secret knowledge the article cannot state ! LOL a review and it cannot state the BANDWITH of NVIDIA's new card! roflmao !

------------------------nvidia

---------------ati

NVIDIA WINS BY A VERY LARGE PERCENTAGE.

konjiki7 - Friday, October 2, 2009 - link

http://www.hardocp.com/news/2009/10/02/nvidia_fake...

Samus - Thursday, October 1, 2009 - link

Thats great and all nVidia has more available bandwidth but....they're not anywhere close to using it (much like ATi) so exactly what is your point?

SiliconDoc - Friday, October 2, 2009 - link

Wow, another doofus. Overclock the 5870's memory only, and watch your framerates rise. Overclocking the memory increases the bandwith, hence the use of it. If frames don't rise, it's not using it, doesn't need it, and extra is present. THAT DOESN'T HAPPEN for 5870.

-

Now, since FERMI has 40% more T in core, and an enourmous amount of astounding optimizations, you declare it won't use the bandwith, but your excuse was your falsehood about ati not using it's bandwith, which is 100% incorrect.

Let's pretend you meant GT200, same deal there, higher mem oc= more band and frames rise well.

Better luck next time, since you were 100% wrong.

mm2587 - Thursday, October 1, 2009 - link

you do realize the entire point of mentioning bandwidth was to show that both Nvidia and AMD feel that they are not currently bandwidth limited. They have each doubled their number of cores but only increased bandwidth by ~%50. Theres no mention of overall bandwidth because thats not the point that was being made. Just an off hand observation that says "hey looks like everyone feels memory bandwidth wasn't the limitation last time around"

Zingam - Thursday, October 1, 2009 - link

ATI has it here and has it now! NVIDIA does not win because on paper I have 50 billion transistors GPU on 1 nm process! I win! ;)

You are a retarded fanboy! And I am not. I'd buy what's best for my money.

SiliconDoc - Thursday, October 1, 2009 - link

Behold the FERMI GPU unbeliever !

http://www.fudzilla.com/content/view/15762/1/

That's called, COMPLETED CARD, RUNNING SILICON.

Better luck next time incorrect ignorant whining looner.

siyabongazulu - Friday, October 2, 2009 - link

Do you see any captions on that site? I don't think so. Nowhere does it mention that it's a complete card. So please stop lying because that goes to show how ignorant you are. Any person with a sound mind can and will tell you that it's not a finished product. So come up with something more valid to show and rant about. Sorry that your big daddy Heung hasn't given you your green slime if you like it that way. Just wait on the corner and when he says, GT300 is a go and tests confirm that it trumps 5870 then you can stop crying and suck on that.

silverblue - Thursday, October 1, 2009 - link

When's it coming out?

I mean, you have all the answers.

SiliconDoc - Thursday, October 1, 2009 - link

Well thanks for the vote of confidence, but yesterday on the launch, according to the author, right ?

LOL

Ha, golly, what a pile.